A/B testing best practices start with one simple idea: compare two versions of something, measure the difference, and decide with evidence instead of guesswork. In hypothesis testing, that decision is not based on instinct alone. It is based on data, probability, and a clear plan. This guide explains A/B testing best practices in a very simple way, so you can understand how experiments work, why they fail, and how to run them correctly.

What this article covers

- What hypothesis testing means

- How A/B tests are structured

- How to avoid common mistakes

Why it matters

- It reduces opinion-driven decisions.

- It improves conversion and product decisions.

- It helps teams learn faster and safer.

What Is Hypothesis Testing in A/B Testing Best Practices?

Hypothesis testing is a structured way to check whether an observed difference is likely real or just due to chance. In A/B testing, you compare version A with version B, then ask whether the data supports a meaningful change. That is why A/B testing best practices are so important. They help you set up the test properly, measure the right outcome, and avoid false conclusions.

At a basic level, hypothesis testing has two sides. The null hypothesis says there is no meaningful difference. The alternative hypothesis says there is a difference worth noticing. Your job is not to “prove” a belief blindly. Instead, your job is to collect enough evidence to see whether the null hypothesis should be rejected.

- Null hypothesis (H0): no meaningful difference exists.

- Alternative hypothesis (H1): a meaningful difference exists.

- Test statistic: a number that summarizes the experiment result.

- p-value: the probability of seeing a result at least this extreme if H0 were true.
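To make these four terms concrete, here is a minimal sketch of one common way to compare two conversion rates: a pooled two-proportion z-test. The function name and the visitor counts are made up for illustration, and the normal approximation it relies on assumes reasonably large groups.

```python
from math import sqrt
from statistics import NormalDist


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Return (z, two-sided p-value) for H0: both versions convert equally."""
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    # Under H0 both groups share one rate, so pool the data to estimate it.
    p_pool = (conv_a + conv_b) / (n_a + n_b)
    se = sqrt(p_pool * (1 - p_pool) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se  # test statistic
    # Two-sided p-value: chance of a |z| at least this large if H0 were true.
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


# Hypothetical counts: 200 of 5,000 users convert on A, 250 of 5,000 on B.
z, p_value = two_proportion_z_test(conv_a=200, n_a=5000, conv_b=250, n_b=5000)
print(f"z = {z:.2f}, p-value = {p_value:.4f}")
```

With these illustrative counts the p-value falls below 0.05, so H0 would be rejected at that threshold. The test says nothing yet about whether a one-point lift is large enough to matter.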

For a deeper statistical foundation, our article on p-values, Type I error, and Type II error connects directly with experiment interpretation. It is a natural companion to A/B testing best practices.

Why A/B Testing Best Practices Matter

A/B tests are easy to start, but they are not always easy to trust. A poorly designed test can produce misleading results, waste traffic, and create false confidence. Therefore, good A/B testing best practices protect you from common mistakes such as biased samples, too-short experiments, and random noise.

When teams use experiments well, they learn faster and make clearer decisions. Moreover, they can separate intuition from evidence. That is especially useful in product design, marketing, pricing, landing pages, email campaigns, and checkout optimization. In each case, a small change can have a big impact, but only if the test is run correctly.

| Part of the test | What it means | Why it matters |
|---|---|---|
| Hypothesis | A clear statement about expected change | Gives the test a purpose |
| Metric | The number you track | Keeps the decision objective |
| Randomization | Users are split by chance | Reduces bias |
| Decision rule | How you judge the result | Prevents emotional decisions |

A/B Testing Best Practices: Start With a Clear Question

Every strong experiment begins with a clear question. For example, you may ask whether a shorter signup form increases conversions. Or you may want to know whether a new button color improves clicks. A vague goal leads to a vague test, and a vague test usually gives vague results. That is why A/B testing best practices begin with a specific hypothesis.

A good hypothesis should state what you are changing, why you think it will matter, and which metric will show success. For example: “Reducing the form from six fields to three will increase completed signups because it lowers friction.” This statement is simple, testable, and focused.

- Keep the question narrow.

- Choose one main metric.

- Write the expected effect in advance.

- Make the test easy to explain to others.

A strong hypothesis also helps avoid “metric shopping,” where people look at many numbers and choose only the one that looks best. Instead, the goal is to decide before the experiment begins. That habit makes A/B testing best practices more reliable and more honest.

Choose the Right Metric First

Metrics are the heart of any A/B test. If the metric is wrong, the experiment may still “win” on paper while losing in reality. For example, a new design might increase clicks but reduce purchases. As a result, you need to measure the outcome that truly reflects business value.

Most teams use one primary metric and a few guardrail metrics. The primary metric is the main success measure. Guardrail metrics make sure the change does not cause harm. For instance, if you are testing a faster checkout page, your primary metric may be completed orders, while guardrail metrics may include error rate and refund rate.

- Primary metric: the main target of the test.

- Guardrail metric: a safety check against damage.

- Leading metric: an early signal that may predict later success.

- Lagging metric: a later result, such as revenue or retention.

The best practice is to avoid too many metrics in one test. Too many measures create noise, and they make decision-making messy. That is why A/B testing best practices usually recommend one main decision metric and a small number of supporting checks.

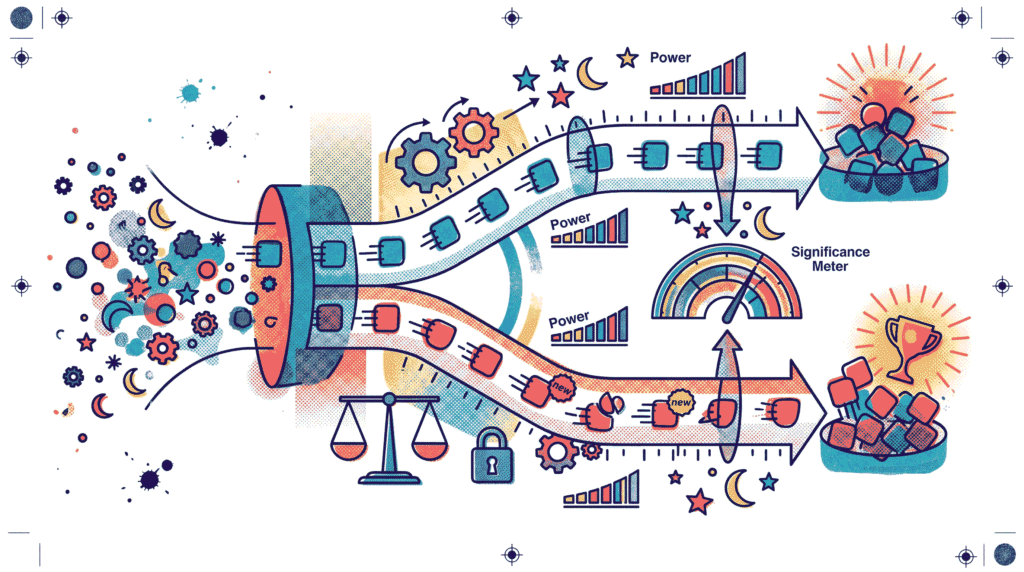

Sample Size, Power, and Duration

One of the most common mistakes in experimentation is stopping too early. A test with too few users may show a random spike or drop that disappears later. To avoid that problem, you need enough sample size and enough run time. This is where statistical power becomes important.

Power is the probability of detecting a real effect when one actually exists. A higher-powered test is more likely to spot meaningful change. However, higher power usually requires a larger sample size. Therefore, you must balance speed and confidence.

- Sample size: the number of users or observations in the test.

- Power: the chance of detecting a true effect.

- Significance level (alpha): the false-positive rate you accept when rejecting the null hypothesis, often set at 0.05.

- Minimum detectable effect (MDE): the smallest change worth noticing.
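The four terms above fit together in one calculation. The sketch below uses a standard normal-approximation formula for comparing two proportions; the function name, the 4% baseline, and the one-point MDE are assumptions chosen for illustration, and dedicated calculators may use slightly different formulas.

```python
from math import ceil
from statistics import NormalDist


def sample_size_per_group(baseline_rate, mde_abs, alpha=0.05, power=0.80):
    """Approximate users needed per group for a two-sided test of two proportions.

    baseline_rate: current conversion rate (e.g. 0.04)
    mde_abs: minimum detectable effect as an absolute difference (e.g. 0.01)
    """
    p1 = baseline_rate
    p2 = baseline_rate + mde_abs
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # significance level
    z_power = NormalDist().inv_cdf(power)          # statistical power
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    return ceil((z_alpha + z_power) ** 2 * variance / mde_abs ** 2)


n = sample_size_per_group(baseline_rate=0.04, mde_abs=0.01)
n_small_effect = sample_size_per_group(baseline_rate=0.04, mde_abs=0.005)
print(f"MDE of 1.0 point: about {n} users per group")
print(f"MDE of 0.5 point: about {n_small_effect} users per group")
```

Halving the MDE requires several times as many users, which is why the smallest effect worth detecting should be chosen before launch rather than after.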

Duration matters too. A test should run long enough to capture normal traffic patterns, weekday behavior, and sometimes even seasonal effects. For that reason, a test may need to run for at least one full business cycle, such as a complete week, so that every weekday is represented. In many cases, waiting a little longer is better than making a rushed decision.

If you want a broader statistical background, our article on the Central Limit Theorem is helpful because it explains why the distribution of sample averages becomes approximately normal as sample size grows. That idea supports many A/B testing best practices.

Practical rule for duration

- Do not stop because the result “looks good.”

- Do not stop because the result “looks bad.”

- Stop when the planned sample size is reached.

- Check whether enough time passed for normal traffic behavior.
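One way to turn these rules into a planned end date is to convert the required sample size into days and then round up to whole business cycles. This is a rough sketch: the function name, the 7-day cycle, and the traffic figures are assumptions for illustration.

```python
from math import ceil


def planned_duration_days(sample_size_per_group, groups, daily_eligible_users,
                          cycle_days=7):
    """Days to run: enough traffic for the sample, rounded up to full cycles."""
    total_needed = sample_size_per_group * groups
    raw_days = ceil(total_needed / daily_eligible_users)
    # Round up so every weekday appears the same number of times.
    return ceil(raw_days / cycle_days) * cycle_days


# Hypothetical plan: 6,743 users per group, two groups, 1,500 eligible users a day.
days = planned_duration_days(6743, groups=2, daily_eligible_users=1500)
print(f"Planned duration: {days} days")
```

Here the sample would be reached after 9 days, but the plan runs for 14 so that two complete weeks are covered.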

Randomization and Segmentation in A/B Testing Best Practices

Randomization means users are assigned to groups by chance. This is important because it helps balance hidden differences between the groups. Without randomization, one group might contain more mobile users, more new customers, or more heavy buyers. Then the result would be biased from the start.

Segmentation is also useful, but it must be handled carefully. You may want to compare results for mobile and desktop users, or for new and returning visitors. That can reveal useful patterns. Even so, segmenting too early or too often can create false discoveries. Therefore, good A/B testing best practices recommend using segments to understand results, not to manipulate them.

- Randomize users at the right level.

- Keep groups mutually exclusive.

- Avoid mixing one user across versions.

- Use segments as insight, not as proof by themselves.
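A common way to satisfy these points is deterministic, hash-based assignment: the same user always lands in the same group, and no assignment table is needed. The sketch below is one possible approach, not the method of any particular platform; `assign_variant` and the experiment name are hypothetical.

```python
import hashlib


def assign_variant(user_id: str, experiment: str, variants=("A", "B")) -> str:
    """Map a user to one variant, stably, using a hash of experiment and user."""
    # Including the experiment name keeps assignments independent across tests.
    key = f"{experiment}:{user_id}".encode("utf-8")
    digest = hashlib.sha256(key).hexdigest()
    bucket = int(digest, 16) % len(variants)
    return variants[bucket]


# The same user gets the same answer on every call.
first = assign_variant("user-123", "signup-form-length")
second = assign_variant("user-123", "signup-form-length")
print(first, second)

# Across many users the split should be close to even.
counts = {"A": 0, "B": 0}
for i in range(10_000):
    counts[assign_variant(f"user-{i}", "signup-form-length")] += 1
print(counts)
```

Randomizing on a user identifier rather than on a session or page view is what keeps one person from seeing both versions.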

This is also the point where Simpson’s Paradox becomes relevant. A result can point one way in the combined data, yet point the opposite way inside every subgroup, typically because the versions were not spread evenly across segments. Because of that, segment review is useful, but it must be interpreted with care.
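A small worked example shows how such a reversal can happen. The counts below are invented for illustration, and they assume the traffic split differed by segment (for instance because allocation changed mid-test), which is exactly the situation proper randomization is meant to prevent.

```python
# (conversions, users) per version and segment; made-up numbers.
data = {
    "desktop": {"A": (400, 4000), "B": (110, 1000)},
    "mobile": {"A": (30, 1000), "B": (160, 4000)},
}


def rate(conversions, users):
    return conversions / users


segment_rates = {}
for segment, versions in data.items():
    segment_rates[segment] = {v: rate(*counts) for v, counts in versions.items()}
    print(segment, {v: f"{r:.1%}" for v, r in segment_rates[segment].items()})

overall = {}
for version in ("A", "B"):
    conversions = sum(data[s][version][0] for s in data)
    users = sum(data[s][version][1] for s in data)
    overall[version] = rate(conversions, users)
print("overall", {v: f"{r:.1%}" for v, r in overall.items()})
```

B converts better than A on desktop and on mobile, yet A looks better overall, because most of B's users came from the lower-converting mobile segment.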

Understand p-Values Without Overcomplicating Them

The p-value often causes confusion, but the basic idea is simple. It measures how surprising your result would be if the null hypothesis were true. A small p-value means a result at least this extreme would be unlikely if there were truly no difference. However, it is not the probability that the null hypothesis is true, it does not tell you the size of the effect, and it does not prove that the new version is automatically better.

This is why A/B testing best practices never rely on p-values alone. A result can be statistically significant but practically unimportant. For example, a button change may increase clicks by 0.2%. That may be statistically real, but it might not justify the cost of implementation.

For a deeper explanation of this topic, our guide on p-values and statistical errors is a strong companion article. It helps make A/B testing best practices much easier to apply.

A/B Testing Best Practices for Analysis and Decision-Making

Once the test ends, analysis should follow a pre-decided rule. This is important because people often want to interpret results in the most optimistic way. A disciplined process helps prevent that bias. Start by checking whether the sample size was reached, whether the test ran long enough, and whether the primary metric moved enough to matter.

Then, examine confidence intervals if they are available. A confidence interval shows the plausible range for the true effect. It gives more context than a single point estimate. If the interval is wide, the result may still be uncertain. If it is narrow and clearly away from zero, your confidence is stronger.

- Check the pre-set sample size.

- Review the primary metric first.

- Use confidence intervals for context.

- Look at practical impact, not just statistical significance.
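As an illustration of the last two points, the sketch below computes a simple (Wald) confidence interval for the difference between two conversion rates and compares it with a practical threshold. The function name, the counts, and the half-point threshold are assumptions for illustration; other interval methods exist and may behave better with small samples.

```python
from math import sqrt
from statistics import NormalDist


def diff_confidence_interval(conv_a, n_a, conv_b, n_b, level=0.95):
    """Wald interval for (rate of B minus rate of A), using unpooled variance."""
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    se = sqrt(p_a * (1 - p_a) / n_a + p_b * (1 - p_b) / n_b)
    z = NormalDist().inv_cdf(1 - (1 - level) / 2)
    diff = p_b - p_a
    return diff - z * se, diff + z * se


# Hypothetical counts: 200 of 5,000 convert on A, 250 of 5,000 on B.
low, high = diff_confidence_interval(200, 5000, 250, 5000)
min_worthwhile_lift = 0.005  # hypothetical smallest lift worth shipping

print(f"95% CI for the lift: [{low:.4f}, {high:.4f}]")
print("Excludes zero:", low > 0)
print("Entirely above the practical threshold:", low > min_worthwhile_lift)
```

In this example the interval excludes zero, so the lift is statistically detectable, but its lower end sits below the practical threshold, so the data do not yet show that the change is large enough to be worth shipping.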

Good analysis is also honest analysis. If the test is inconclusive, say so. If the winning variation improves one metric but harms another, that should be visible too. In other words, A/B testing best practices are not about forcing a winner. They are about learning the truth from the data.

Tools That Support A/B Testing Best Practices

Many teams use platforms and analytics tools to run experiments, segment traffic, and read results. These tools do not replace good thinking, but they make experimentation easier to manage. Popular options include Optimizely, VWO, and Google Analytics. Each can support parts of the experimentation workflow.

For statistical understanding, a trusted source such as NIST is useful for learning formal concepts. Meanwhile, product teams often combine analytics platforms with experimentation software so they can connect test results to broader business metrics.

- Experiment platforms: run tests and assign users.

- Analytics tools: measure downstream behavior.

- Dashboards: keep stakeholders informed.

- Event tracking: record clicks, conversions, and usage.

Common Mistakes to Avoid

Even experienced teams make avoidable errors. Fortunately, most of them can be prevented with a little discipline. The main idea is to respect the experiment design from the beginning and not rewrite the rules midway.

- Stopping too early: results may be random and unstable.

- Changing the metric mid-test: this creates bias.

- Running too many tests at once: traffic gets diluted.

- Ignoring seasonality: behavior may change across days or weeks.

- Overreading small wins: tiny effects may not be worth action.

Another common issue is peeking at the results too frequently. When people check the test every few hours and stop as soon as the numbers look good, the chance of a false win rises. That is why disciplined A/B testing best practices recommend a fixed decision rule before launch.
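A short simulation can show why peeking inflates false wins. The sketch below runs many A/A tests, where both groups share the same true rate and any "significant" result is therefore a false positive. It compares stopping at the first significant look with checking once at the planned sample size. All parameters (500 runs, 1,000 users per group, a look every 100 users, a 20% rate) are arbitrary choices for illustration.

```python
import random
from math import sqrt
from statistics import NormalDist


def p_value(conv_a, conv_b, n):
    """Two-sided pooled z-test p-value for two groups of equal size n."""
    pooled = (conv_a + conv_b) / (2 * n)
    if pooled in (0.0, 1.0):
        return 1.0  # no variation yet, so no evidence against H0
    se = sqrt(pooled * (1 - pooled) * 2 / n)
    z = (conv_b - conv_a) / n / se
    return 2 * (1 - NormalDist().cdf(abs(z)))


def simulate(runs=500, users_per_group=1000, look_every=100,
             rate=0.2, alpha=0.05, seed=7):
    """Return (false-positive rate with peeking, rate with one final check)."""
    rng = random.Random(seed)
    peeking_hits = fixed_hits = 0
    for _ in range(runs):
        conv_a = conv_b = 0
        any_look_significant = False
        for n in range(1, users_per_group + 1):
            conv_a += rng.random() < rate
            conv_b += rng.random() < rate
            if n % look_every == 0 and p_value(conv_a, conv_b, n) < alpha:
                any_look_significant = True  # a peeker would have stopped here
        peeking_hits += any_look_significant
        fixed_hits += p_value(conv_a, conv_b, users_per_group) < alpha
    return peeking_hits / runs, fixed_hits / runs


peeking_rate, fixed_rate = simulate()
print(f"False wins when stopping at any significant look: {peeking_rate:.1%}")
print(f"False wins with one check at the planned size:    {fixed_rate:.1%}")
```

The single planned check should stay near the nominal 5%, while the peeking strategy declares a false winner noticeably more often. Teams that need interim looks typically use sequential testing methods designed for that purpose.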

A Simple Workflow for Hypothesis Testing in A/B Testing

A simple workflow makes experimentation easier to repeat. It also helps teams stay aligned. The following steps are practical, and they match most modern experimentation programs.

- Define the business problem clearly.

- Write one testable hypothesis.

- Select one primary metric and a few guardrails.

- Estimate sample size and run time.

- Randomize users fairly.

- Launch the test and avoid changing it midstream.

- Analyze the result using the pre-set decision rule.

- Document the outcome and the lesson learned.
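One lightweight way to keep these steps fixed is to record the plan as an immutable object before launch. This is only a sketch: the `ExperimentPlan` class, its field names, and every value below are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)  # frozen: fields cannot be reassigned after creation
class ExperimentPlan:
    hypothesis: str
    primary_metric: str
    guardrail_metrics: tuple
    alpha: float
    power: float
    mde_abs: float
    sample_size_per_group: int
    min_duration_days: int


plan = ExperimentPlan(
    hypothesis="Reducing the form from six fields to three will increase "
               "completed signups because it lowers friction.",
    primary_metric="completed_signups",
    guardrail_metrics=("error_rate",),
    alpha=0.05,
    power=0.80,
    mde_abs=0.01,
    sample_size_per_group=6743,
    min_duration_days=14,
)
print(plan.primary_metric, plan.sample_size_per_group)
```

Because the dataclass is frozen, an attempt to change the metric or the significance level mid-test raises an error instead of silently rewriting the rules.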

This process keeps your work consistent across experiments. Over time, your team learns not just what works, but also why it works. That learning loop is one of the biggest advantages of A/B testing best practices.

A/B Testing Best Practices Checklist

- Keep one clear hypothesis.

- Use one main success metric.

- Set sample size before launch.

- Run the test long enough.

- Randomize users correctly.

- Avoid changing the test mid-run.

- Read p-values with care.

- Check confidence intervals and practical impact.

- Watch for Simpson’s paradox and segment bias.

- Document what you learned.

This checklist is simple, but it is powerful. If you follow it consistently, your tests will become easier to trust. More importantly, your decisions will become easier to defend. That is the real goal of A/B testing best practices.

Conclusion

Hypothesis testing gives A/B testing its logic. Without it, experiments become guesses with charts. With it, you get a disciplined way to compare versions, measure change, and make better decisions. The most important A/B testing best practices are simple: ask a clear question, choose the right metric, randomize fairly, wait for enough data, and interpret the result honestly.

When those habits become routine, experimentation stops feeling risky and starts feeling useful. You will still see inconclusive tests sometimes, and that is normal. Even so, every valid test teaches something. Over time, this approach compounds into smarter product choices, better marketing decisions, and a stronger culture of evidence.

To continue building your statistics foundation, explore Bayesian vs Frequentist Statistics, Understanding p-values, and Simpson’s Paradox in Real Data. Together, these topics make A/B testing best practices much easier to apply in the real world.

Further reading: You can also review the practical experimentation guidance at Optimizely, the analytics resources at Google Analytics Help, and statistical references from NIST.