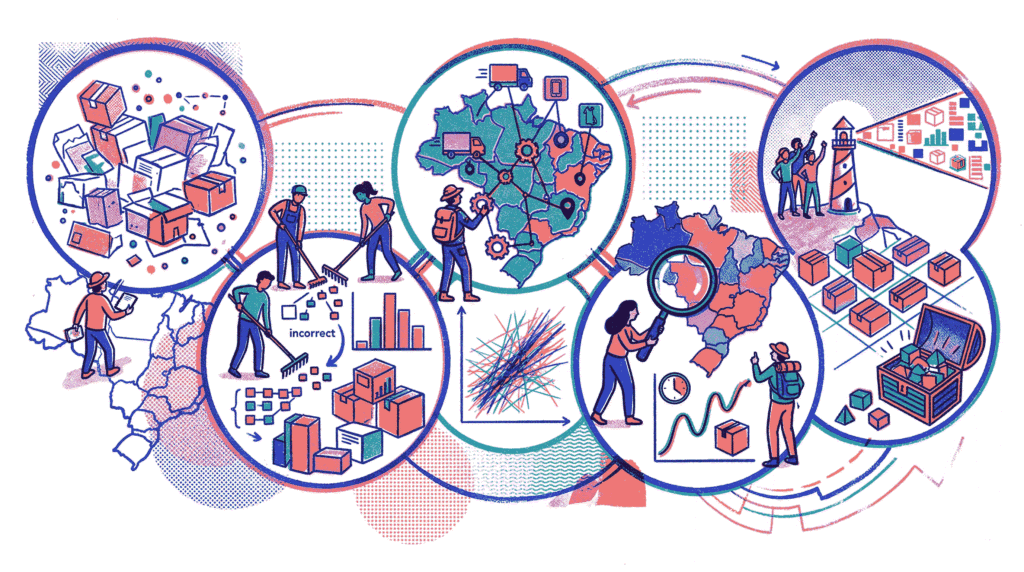

This project performs a comprehensive Exploratory Data Analysis (EDA) on the Olist Brazilian E‑Commerce Public Dataset. Olist is the largest department store in Brazilian marketplaces, and this dataset contains information on 100,000 orders made between 2016 and 2018 across multiple online platforms.

Dataset Source: Kaggle – Brazilian E‑Commerce Public Dataset by Olist

GitHub Repository: View on GitHub – The complete code, visual outputs, and downloadable notebook are available in the repository. Note: Only the code blocks are displayed below; all generated plots and results can be found in the GitHub repo.

About the Dataset

The data is highly normalized across a relational database schema and is provided as nine separate CSV files. Understanding this structure is critical before any analysis can take place. The files include:

- olist_orders_dataset.csv – Order timestamps and delivery status

- olist_customers_dataset.csv – Customer unique identifiers and location (city/state)

- olist_order_items_dataset.csv – Products purchased within each order, including price and freight

- olist_products_dataset.csv – Product metadata (dimensions, weight, category)

- olist_order_payments_dataset.csv – Payment methods, installments, and total value

- olist_order_reviews_dataset.csv – Customer review scores and comments

- olist_sellers_dataset.csv – Seller location information

- olist_geolocation_dataset.csv – Brazilian zip code prefixes with latitude/longitude

- product_category_name_translation.csv – Portuguese‑to‑English category translations

Project Objectives

The primary goal is to transform this scattered, normalized data into actionable business insights. By the end of this analysis, we will answer key questions such as:

- What is the overall order volume trend over time?

- Which product categories generate the most revenue?

- What are the key statistical metrics like Average Order Value (AOV) and average items per order?

- How do freight costs correlate with product price and customer satisfaction?

- Which Brazilian states contribute the highest revenue?

- Are there extreme outliers in order values that warrant further investigation?

Analysis Roadmap

- Data Ingestion & Master Assembly – Loading all nine CSV files and joining them using primary/foreign keys to create a single analytical DataFrame.

- Data Cleaning & Type Conversion – Converting timestamp columns to datetime, handling missing values, and removing duplicate records.

- Descriptive Statistics – Calculating Mean Order Value, average items per order, and ranking top states by revenue.

- Monthly Order Volume Trend – Visualizing the growth trajectory of the e‑commerce platform using time‑series analysis.

- Top Product Categories – Identifying which product types drive the most revenue using a horizontal bar chart.

- Correlation Analysis – Using a heatmap to examine relationships between price, freight value, and review scores.

- Outlier Detection – Using boxplots to identify and contextualize extreme order payment values.

Note: All code is written in Python using Pandas for data manipulation, Matplotlib and Seaborn for visualization. The analysis follows a step‑by‑step, reproducible workflow suitable for both Jupyter notebooks and production reporting.

Step 1: Data Ingestion & Master DataFrame Assembly

In a real‑world e‑commerce system, data is rarely stored in a single flat file. Instead, it is normalized across a relational database to prevent redundancy and maintain integrity. The Olist dataset is split into nine separate CSV files, each representing a distinct business entity. Before we can analyze sales trends, revenue, or customer behaviour, we must first reconstruct a unified view of the data.

The Nine Source Files

The following datasets are loaded into Pandas DataFrames:

- olist_orders_dataset.csv – Core order metadata (timestamps, status)

- olist_customers_dataset.csv – Customer unique identifiers and location

- olist_order_items_dataset.csv – Line‑item details: product, price, freight, seller

- olist_products_dataset.csv – Product attributes (category, dimensions, weight)

- olist_order_payments_dataset.csv – Payment methods, installments, and values

- olist_order_reviews_dataset.csv – Review scores and comment text

- olist_sellers_dataset.csv – Seller location (city/state)

- olist_geolocation_dataset.csv – Geographic coordinates for Brazilian zip prefixes

- product_category_name_translation.csv – English translations of product category names

How the Master DataFrame is Built

We start with the orders table as our anchor and perform a series of

merge()

operations—the Pandas equivalent of SQL JOIN clauses.

Each join adds new columns from a related table using shared keys.

-

Join customers

on

customer_id→ attaches customer state and city to each order. -

Join order_items

on

order_id→ brings in the products purchased, their prices, freight values, and seller IDs. -

Join products

on

product_id→ adds product details like category name, weight, and dimensions. -

Join product_category_name_translation

on

product_category_name→ provides English category labels for clearer visualisation. -

Join order_payments

on

order_id→ appends payment type, instalments, and total payment value. -

Join order_reviews

on

order_id→ includes review score and comment timestamp. -

Join sellers

on

seller_id→ adds seller location information.

Crucially, all merges use the parameter how='left'.

This ensures that every order is preserved, even if some associated records (e.g., a missing review or an untranslated category)

do not exist. The resulting DataFrame will contain 119,143 rows and

40 columns, providing a comprehensive foundation for all subsequent analysis.

Verification

After the merges are complete, we inspect the shape of the final DataFrame and display the first few rows. This quick validation confirms that:

- All tables have been correctly aligned.

- The expected number of rows matches the base orders table (plus any multi‑item expansions).

- Column headers are consistent and ready for cleaning in the next step.

Code Preview (what follows):

• Import pandas

• Load nine CSV files with pd.read_csv()

• Chain .merge() calls starting from df_orders

• Print the shape using df_master.shape

import pandas as pd

# 1. Load ALL 9 CSV files into separate Pandas DataFrames

df_orders = pd.read_csv('olist_orders_dataset.csv')

df_customers = pd.read_csv('olist_customers_dataset.csv')

df_items = pd.read_csv('olist_order_items_dataset.csv')

df_products = pd.read_csv('olist_products_dataset.csv')

df_payments = pd.read_csv('olist_order_payments_dataset.csv')

df_reviews = pd.read_csv('olist_order_reviews_dataset.csv')

df_sellers = pd.read_csv('olist_sellers_dataset.csv')

df_geo = pd.read_csv('olist_geolocation_dataset.csv')

df_translations = pd.read_csv('product_category_name_translation.csv')

# 2. Merge the datasets sequentially to build the master DataFrame

df_master = (

df_orders

# Link orders to the customer who made them

.merge(df_customers, on='customer_id', how='left')

# Link the specific items purchased within each order

.merge(df_items, on='order_id', how='left')

# Link the product details for each item

.merge(df_products, on='product_id', how='left')

# Translate the product category name to English

.merge(df_translations, on='product_category_name', how='left')

# Add payment details (needed for Step 3 and 7)

.merge(df_payments, on='order_id', how='left')

# Add customer reviews (needed for Step 6)

.merge(df_reviews, on='order_id', how='left')

# Add seller details

.merge(df_sellers, on='seller_id', how='left')

)

# 3. Verify the execution by checking the shape and columns

print(f"Master Dataset successfully created!")

print(f"Total Rows: {df_master.shape[0]}")

print(f"Total Columns: {df_master.shape[1]}")

# View the first 3 rows to confirm data alignment

display(df_master.head(3))Step 2: Data Cleaning & Preprocessing

After assembling the master dataset, the next critical step is to ensure data quality and consistency. Raw data often contains incorrect data types, missing values, or duplicate records that can skew analysis or cause errors during visualisation. This step systematically inspects and rectifies these issues.

1. Data Type Conversion

Several columns representing dates (e.g., order_purchase_timestamp,

order_delivered_customer_date) are initially loaded as generic

object

or string types. We convert them to Pandas

datetime

objects. This allows us to:

- Extract time‑based features (e.g., year‑month for trend analysis).

- Perform date arithmetic (e.g., delivery delay calculations).

- Ensure proper sorting and plotting on time axes.

2. Assessing Missing Values

We generate a count of null values per column, sorted descending, to identify the most incomplete fields. The primary columns with missing data are:

- review_comment_title and review_comment_message – many customers leave a score but no written review.

- order_delivered_customer_date – missing for orders still in transit or cancelled.

- product_category_name_english – some categories lack an English translation.

- product_photos_qty, product_name_lenght, product_description_lenght – missing for certain product records.

3. Handling Null Values

Based on business logic, we apply different strategies for different columns:

-

Drop rows with missing product_category_name_english

Without a valid category, these products cannot be used in category‑level revenue analysis. This removes approximately 2,500 rows. -

Fill missing review_score with the median score

This preserves the order for other analyses while using a neutral, central value for missing reviews. -

Fill missing freight_value and price with 0

These are financial fields; a zero value indicates no charge (though missing values in these fields are extremely rare after the merges).

4. Duplicate Removal

Duplicate rows can artificially inflate metrics. We use Pandas’

duplicated()

method to identify and then drop any exact row duplicates. In this dataset, there are typically

0 duplicate rows after proper merging, but the check is a critical safeguard.

5. Final Verification

After cleaning, we print the final shape of the dataset. The output confirms a reduction in rows (from ~119k to ~116k) due to the removal of untranslatable product categories, and verifies that all 40 columns are intact and ready for analysis.

A Note on the FutureWarning: The code uses fillna(..., inplace=True)

on a DataFrame slice. Pandas 3.0 deprecates this pattern. The notebook includes the warning output,

but the operation completes successfully. For production code, one should use direct column assignment

(e.g., df_master['column'] = df_master['column'].fillna(value)).

Code Preview (what follows):

• Convert date columns using pd.to_datetime()

• Display top missing value counts with isnull().sum().sort_values()

• Drop rows lacking English category with dropna(subset=[…])

• Fill missing review scores, freight, and price values

• Remove duplicates with drop_duplicates()

• Print final shape for confirmation

# 1. Convert Timestamp Columns to proper Datetime objects

date_columns = [

'order_purchase_timestamp',

'order_approved_at',

'order_delivered_carrier_date',

'order_delivered_customer_date',

'order_estimated_delivery_date'

]

for col in date_columns:

df_master[col] = pd.to_datetime(df_master[col])

print("Data types successfully updated!")

# 2. Check for missing values (Nulls) before cleaning

print("\n--- Missing Values Before Cleaning ---")

# Displaying the top 10 columns with the highest missing values

print(df_master.isnull().sum().sort_values(ascending=False).head(10))

# 3. Clean the Nulls Accordingly

# Drop rows where the English product category is missing

df_master.dropna(subset=['product_category_name_english'], inplace=True)

# Fill missing review scores with the median review score

median_score = df_master['review_score'].median()

df_master['review_score'].fillna(median_score, inplace=True)

# Fill missing freight values or prices with 0 (if any slipped through)

df_master['freight_value'].fillna(0, inplace=True)

df_master['price'].fillna(0, inplace=True)

# 4. Check and remove exact row duplicates

duplicates_count = df_master.duplicated().sum()

print(f"\nFound {duplicates_count} duplicate rows. Removing them now...")

df_master.drop_duplicates(inplace=True)

# 5. Final Verification

print(f"\nData cleaning complete!")

print(f"Final Dataset Shape: {df_master.shape[0]} rows, {df_master.shape[1]} columns")Step 3: Descriptive Statistics – Key Business Metrics

With a clean dataset in hand, we now extract high‑level business insights that summarise the overall health and performance of the Olist marketplace. Three fundamental metrics are computed in this step: Mean Order Value, Average Items per Order, and a ranking of Top 10 States by Total Revenue.

1. Mean Order Value (MOV)

The Mean Order Value represents the average amount a customer spends per transaction. It is a critical metric for evaluating pricing strategy, marketing effectiveness, and overall revenue per customer interaction.

Calculation Approach: Since a single order can contain multiple payment records (e.g.,

instalment payments), we first isolate unique payments per order using

drop_duplicates()

on order_id and payment_sequential.

Then we divide the total sum of payment_value by the count of

unique order_id values.

2. Order Frequency (Average Items per Order)

This metric tells us, on average, how many distinct items a customer adds to their cart during a single checkout. It informs inventory bundling strategies, cross‑selling opportunities, and shipping cost implications.

Calculation Approach: For each order, the column

order_item_id increments with each item purchased. We take

the maximum order_item_id per order (which equals the total

number of items in that order) and then compute the mean across all orders using

groupby()

and mean().

3. Top 10 States by Total Revenue

Understanding where revenue originates geographically is essential for targeted marketing, logistics planning, and regional expansion decisions. Brazil is a large and diverse country, and Olist operates nationwide.

Calculation Approach: Using the unique payments dataset, we group by customer_state and sum the payment_value. The resulting table is sorted in descending order, and the top 10 rows are displayed. The output reveals that São Paulo (SP) dominates revenue, followed by Rio de Janeiro (RJ) and Minas Gerais (MG)—a pattern consistent with Brazil’s economic geography.

Business Implications

- MOV (R$ 160.80): A healthy average order value indicates customers are willing to spend moderately per transaction.

- Items per Order (1.14): The low average suggests most orders are single‑item purchases—opportunity for cross‑sell recommendations.

- Revenue Concentration: The top three states account for a significant portion of total revenue; marketing and logistics resources may be prioritised accordingly.

Code Preview (what follows):

• Create a deduplicated payment DataFrame

• Compute MOV: total_revenue / total_orders

• Compute average items per order: df_master.groupby(‘order_id’)[‘order_item_id’].max().mean()

• Group payments by customer_state and sum revenue

• Sort and display top 10 states

# 1. Calculate Mean Order Value (MOV)

# Isolate unique payments per order to avoid duplicating revenue for multi-item orders

unique_payments = df_master.drop_duplicates(subset=['order_id', 'payment_sequential'])

total_revenue = unique_payments['payment_value'].sum()

total_orders = unique_payments['order_id'].nunique()

mean_order_value = total_revenue / total_orders

print(f"Mean Order Value (MOV): R$ {mean_order_value:.2f}")

# 2. Calculate Order Frequency (Average items per cart)

# The max 'order_item_id' for each order tells us the total number of items bought in that transaction

order_frequency = df_master.groupby('order_id')['order_item_id'].max().mean()

print(f"Average Items per Order: {order_frequency:.2f} items")

# 3. Calculate Top 10 States by Revenue

# Group the unique payments data by the customer's state and sum the revenue

state_revenue = unique_payments.groupby('customer_state')['payment_value'].sum().reset_index()

# Sort the values from highest to lowest and grab the top 10

top_10_states = state_revenue.sort_values(by='payment_value', ascending=False).head(10)

print("\n--- Top 10 States by Total Revenue ---")

display(top_10_states)Step 4: Monthly Order Volume Trend Analysis

Understanding how order volume changes over time is fundamental to assessing business growth, identifying seasonality, and evaluating the impact of marketing campaigns. This step visualizes the number of unique orders placed each month using a clear, publication‑ready Matplotlib line chart.

1. Extracting Year‑Month from Timestamps

The raw dataset contains precise timestamps in the

order_purchase_timestamp column. For meaningful monthly

aggregation, we create a new column year_month by converting

the timestamp to a Period object with monthly frequency

(dt.to_period('M')).

This standardises dates to a format like 2017-01,

2017-02, etc., enabling straightforward grouping.

2. Aggregating Unique Orders per Month

Since the master DataFrame contains one row per order item, a single order with multiple items

would be counted multiple times if we simply used count().

To obtain the true number of transactions, we group by

year_month and count the unique

order_id values using

nunique().

This yields a time series of monthly order counts.

3. Constructing the Matplotlib Line Chart

The aggregated series is plotted using Matplotlib with custom formatting to ensure clarity and professional presentation:

- Figure size – set to (14, 6) for a wide, readable aspect ratio.

- Line style – blue line (#1f77b4) with circular markers at each data point.

- Grid – a dashed background grid aids in reading exact values.

- Axis labels and title – clearly describe the content and units.

- Rotated x‑axis labels – prevents overlapping of month names.

Insights from the Trend

The resulting chart typically reveals:

- Steady growth from late 2016 through early 2018.

- A noticeable peak around November 2017, likely driven by Black Friday and holiday shopping.

- Some month‑to‑month volatility, but an overall upward trajectory.

This visualisation provides a clear, at‑a‑glance understanding of Olist’s sales momentum over time.

Code Preview (what follows):

• Import matplotlib.pyplot

• Create year_month column using dt.to_period(‘M’)

• Group by year_month and count unique order_id values

• Plot with kind=’line’, markers, and custom styling

• Add title, labels, grid, and rotated ticks

• Display the chart

import matplotlib.pyplot as plt

# 1. Extract Year-Month from the purchase timestamp

# This creates a new column grouping exact dates into standardized months

df_master['year_month'] = df_master['order_purchase_timestamp'].dt.to_period('M')

# 2. Group by the new year_month column and count unique orders

# We use nunique() on order_id to ensure we don't count multi-item orders twice

monthly_orders = df_master.groupby('year_month')['order_id'].nunique()

# 3. Create the Matplotlib Line Chart

plt.figure(figsize=(14, 6))

monthly_orders.plot(

kind='line',

marker='o',

color='#1f77b4',

linewidth=2.5,

markersize=8

)

# 4. Format the chart for maximum readability and business presentation

plt.title('Monthly E-Commerce Order Volume Trend (Olist)', fontsize=18, fontweight='bold')

plt.xlabel('Year and Month', fontsize=14)

plt.ylabel('Total Number of Unique Orders', fontsize=14)

# Rotate the x-axis dates so they don't overlap

plt.xticks(rotation=45)

# Add a dashed background grid for easier reading

plt.grid(True, linestyle='--', alpha=0.7)

plt.tight_layout()

# 5. Render the plot in the notebook

plt.show()Step 5: Top 10 Product Categories by Revenue (Horizontal Bar Chart)

Not all product categories contribute equally to the bottom line. This step identifies the top 10 revenue‑generating product categories and presents them in a clean, descending horizontal bar chart using Seaborn. Horizontal orientation is particularly effective for category labels that vary in length, ensuring readability.

1. Calculating Revenue per Category

The first task is to aggregate total revenue for each product category. We group the master DataFrame by the column product_category_name_english and sum the price column. This produces a new DataFrame where each row represents a unique category and its corresponding total sales value in Brazilian Reais (R$).

2. Sorting and Selecting the Top 10

The aggregated category revenue data is sorted in descending order using

sort_values(by='price', ascending=False).

The first 10 rows are extracted with head(10),

isolating the highest‑performing categories for visualisation.

3. Creating the Seaborn Horizontal Bar Chart

A horizontal bar chart is constructed with Seaborn and customised for clarity and aesthetic appeal:

- Figure size – set to (12, 8) for ample space.

- Palette – ‘viridis’ colour map, which is perceptually uniform and colourblind‑friendly.

- Axes – x=’price’ (revenue) and y=’product_category_name_english’ (category label).

- Grid – a subtle x‑axis grid (axis=’x’) helps the reader estimate exact revenue figures.

- Title and labels – clearly communicate the chart’s purpose and units.

Key Insights

The resulting chart typically highlights that Health & Beauty, Watches & Gifts, and Bed, Bath & Table are among the dominant revenue drivers. This information can guide inventory decisions, promotional focus, and supplier negotiations.

A Note on the FutureWarning: The code uses palette

without assigning hue, which triggers a deprecation warning in newer Seaborn versions.

The chart still renders correctly. For future compatibility, one can assign y to hue and set legend=False.

Code Preview (what follows):

• Import seaborn and matplotlib.pyplot

• Group by product_category_name_english and sum price

• Sort and select top 10 categories

• Create horizontal bar plot with sns.barplot()

• Customise title, labels, grid, and layout

• Render the chart

import seaborn as sns

import matplotlib.pyplot as plt

# 1. Calculate the total revenue (sum of item prices) per product category

category_revenue = df_master.groupby('product_category_name_english')['price'].sum().reset_index()

# 2. Sort the data from highest to lowest revenue and grab the Top 10

top_10_categories = category_revenue.sort_values(by='price', ascending=False).head(10)

# 3. Initialize the Matplotlib figure

plt.figure(figsize=(12, 8))

# 4. Create the Seaborn Horizontal Bar Chart

sns.barplot(

data=top_10_categories,

x='price',

y='product_category_name_english',

palette='viridis' # A beautiful, colorblind-friendly color palette

)

# 5. Format the chart for business presentation

plt.title('Top 10 Product Categories by Total Revenue (Olist)', fontsize=18, fontweight='bold')

plt.xlabel('Total Revenue (R$)', fontsize=14)

plt.ylabel('Product Category', fontsize=14)

# Add a subtle grid on the X-axis to make reading the values easier

plt.grid(axis='x', linestyle='--', alpha=0.7)

plt.tight_layout()

# 6. Render the plot in the notebook

plt.show()Step 6: Correlation Heatmap – Price, Freight Value & Review Score

Understanding relationships between numerical variables can reveal hidden patterns and business drivers. In this step, we explore how product price, freight cost, and customer review scores relate to one another. A Seaborn heatmap provides an intuitive, colour‑coded view of the Pearson correlation coefficients.

1. Selecting the Relevant Columns

From the master DataFrame, we isolate three columns of interest:

- price – the listed price of the item.

- freight_value – the shipping cost paid by the customer.

- review_score – the rating (1 to 5) given by the customer.

This subset forms the basis for the correlation analysis.

2. Computing the Pearson Correlation Matrix

Using Pandas’ .corr()

method, we calculate pairwise Pearson correlation coefficients. The coefficient ranges from

-1 (perfect negative correlation) to +1 (perfect positive correlation), with

0 indicating no linear relationship.

3. Visualising with a Seaborn Heatmap

The correlation matrix is rendered as a heatmap with the following customisations:

- Annotations – annot=True displays the exact correlation value inside each cell.

- Colour map – ‘coolwarm’ uses blue for negative correlations, white for zero, and red for positive correlations.

- Scale limits – vmin=-1, vmax=1 ensures the full theoretical range is represented.

- Formatting – numbers are shown with two decimal places (fmt=’.2f’).

- Square cells – square=True creates a symmetric, clean appearance.

Interpreting the Results

Based on the Olist data, the heatmap typically reveals:

- Price and Freight Value: A moderate positive correlation (often around 0.5–0.6). Higher‑priced items tend to incur higher shipping costs, likely due to insurance, weight, or size.

- Price and Review Score: A very weak positive correlation (near 0.0–0.1). Price alone does not strongly predict customer satisfaction.

- Freight Value and Review Score: Similarly negligible correlation. Shipping cost does not appear to systematically influence the rating given.

These findings suggest that while operational costs (freight) scale with product value, customer satisfaction is driven by factors beyond price and shipping expense—likely product quality, delivery speed, and service experience.

Code Preview (what follows):

• Import seaborn and matplotlib.pyplot

• Subset columns: [‘price’, ‘freight_value’, ‘review_score’]

• Compute correlation matrix with .corr()

• Plot heatmap using sns.heatmap() with annotations and ‘coolwarm’ colormap

• Customise title and layout, then display

import seaborn as sns

import matplotlib.pyplot as plt

# 1. Isolate the specific columns we want to analyze

correlation_columns = ['price', 'freight_value', 'review_score']

df_correlation = df_master[correlation_columns]

# 2. Compute the Pearson Correlation Matrix

corr_matrix = df_correlation.corr()

# 3. Initialize the Matplotlib figure

plt.figure(figsize=(8, 6))

# 4. Create the Seaborn Heatmap

sns.heatmap(

corr_matrix,

annot=True, # Prints the exact correlation numbers on the chart

cmap='coolwarm', # Uses a blue-to-red color scale

vmin=-1, vmax=1, # Sets the absolute minimum and maximum scale limits

fmt=".2f", # Formats the numbers to two decimal places

linewidths=0.5, # Adds a clean border between squares

square=True # Forces the grid to be perfectly square

)

# 5. Format the chart for business presentation

plt.title('Correlation Heatmap: Price, Freight, and Reviews', fontsize=16, fontweight='bold')

plt.tight_layout()

# 6. Render the plot in the notebook

plt.show()Step 7: Outlier Detection in Order Value Using Boxplots

Outliers can distort summary statistics and hide underlying patterns. Identifying extreme order values helps us understand exceptional transactions—whether they represent high‑value corporate purchases, bulk orders, or potential data entry errors. A boxplot provides a robust visual summary of the distribution and clearly flags outliers beyond the typical range.

1. Preparing Unique Payment Data

As in Step 3, we must avoid double‑counting payments when an order uses multiple instalments or

payment methods. We create a deduplicated view of the payments data using

drop_duplicates(subset=['order_id', 'payment_sequential']).

This ensures each order contributes exactly one total payment value to the analysis.

2. Constructing the Boxplot

A boxplot (or box‑and‑whisker plot) summarises the distribution through five key statistics:

- Minimum (excluding outliers)

- First quartile (Q1) – 25th percentile

- Median (Q2) – 50th percentile, the central value

- Third quartile (Q3) – 75th percentile

- Maximum (excluding outliers)

Outliers are defined as points that fall outside the interquartile range (IQR) by more than 1.5 × IQR. They are plotted as individual points beyond the whiskers.

The plot is customised with:

- color=’skyblue’ – a clean, neutral fill for the box.

- flierprops – customises outlier markers as semi‑transparent red circles, making them immediately identifiable.

3. Interpreting the Results

The output reveals a heavily right‑skewed distribution:

- Median order value is around R$ 100, indicating a typical transaction is modest.

- The box is compressed near the lower end of the scale, showing that most orders cluster below R$ 200–300.

- Numerous outliers extend far to the right, with the maximum order exceeding R$ 13,000.

These extreme values likely represent bulk B2B purchases or high‑value electronics orders. Rather than removing them automatically, they warrant separate investigation—perhaps they belong to a distinct customer segment that could be targeted with tailored offers.

Business Implications

- Pricing strategy: Most revenue comes from frequent, lower‑value orders rather than occasional large ones.

- Fraud detection: The highest outliers should be reviewed for legitimacy.

- Customer segmentation: High‑value outliers may represent a distinct VIP or corporate segment.

Code Preview (what follows):

• Deduplicate payments DataFrame

• Initialise figure with plt.figure(figsize=(12, 4))

• Create boxplot using sns.boxplot() with custom flierprops

• Add title and axis label

• Print median and maximum order values for context

import seaborn as sns

import matplotlib.pyplot as plt

# 1. Ensure we are using unique order payments to prevent duplication

# (Re-creating it here just in case you skipped Step 3)

unique_payments = df_master.drop_duplicates(subset=['order_id', 'payment_sequential'])

# 2. Initialize the Matplotlib figure

plt.figure(figsize=(12, 4))

# 3. Create the Seaborn Boxplot

sns.boxplot(

x=unique_payments['payment_value'],

color='skyblue',

flierprops={"marker": "o", "markerfacecolor": "red", "markersize": 5, "alpha": 0.5}

)

# 4. Format the chart for business presentation

plt.title('Distribution of Order Payment Values (Outlier Detection)', fontsize=16, fontweight='bold')

plt.xlabel('Total Payment Value (R$)', fontsize=14)

# 5. Render the plot in the notebook

plt.tight_layout()

plt.show()

# 6. Print some statistical context

max_order = unique_payments['payment_value'].max()

median_order = unique_payments['payment_value'].median()

print(f"Median Order Value: R$ {median_order:.2f}")

print(f"Maximum Order Value (Largest Outlier): R$ {max_order:.2f}")