Understanding Multimodal Learning: The Convergence of Senses

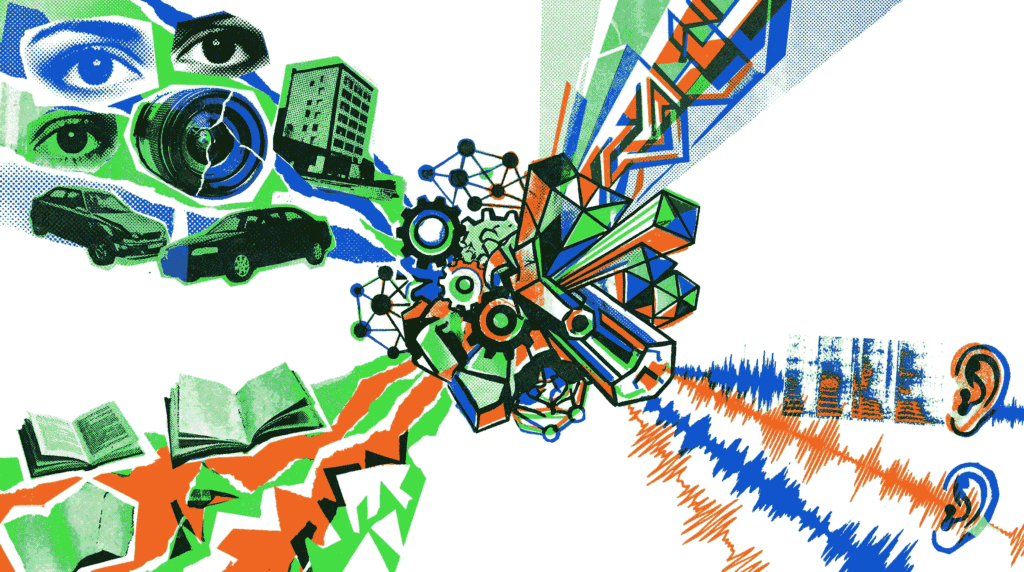

In the natural world, humans perceive and understand their environment through multiple senses at once: sight, sound, touch, and language. For artificial intelligence to approach human‑like comprehension, it must similarly integrate information from diverse sources. This is precisely the goal of multimodal learning, a subfield of machine learning dedicated to building models that can process and relate data from different modalities, such as text, images, and audio. Rather than treating each type of input in isolation, multimodal learning aims to create a unified representation that captures the rich, complementary relationships between them. This capability is already reshaping applications that range from image search to virtual assistants.

What Exactly Is Multimodal Learning?

At its core, multimodal learning refers to the process of training machine learning models on data that comes from two or more distinct modalities. A modality can be any form of sensory or conceptual input: textual descriptions, visual pixels, audio spectrograms, depth sensor readings, or even tabular metadata. The key challenge lies in bridging the “modality gap”—the fundamental differences in how information is represented across these formats. For instance, an image is a 2D grid of pixel intensities, while text is a sequence of discrete tokens. Multimodal learning algorithms, therefore, learn to project these heterogeneous inputs into a shared embedding space where semantically similar concepts (e.g., a photo of a dog and the word “dog”) lie close together.
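To make the idea of a shared embedding space concrete, here is a minimal plain‑Python sketch. It assumes each modality has already been turned into a feature vector by its own encoder. The projection matrices `W_IMAGE` and `W_TEXT` and all feature values are hypothetical, hand‑picked numbers standing in for learned weights.

```python
import math

def project(weights, features):
    """Linear projection: a plain matrix-vector product."""
    return [sum(w * x for w, x in zip(row, features)) for row in weights]

def l2_normalize(vector):
    norm = math.sqrt(sum(x * x for x in vector))
    return [x / norm for x in vector]

def cosine_similarity(a, b):
    return sum(x * y for x, y in zip(l2_normalize(a), l2_normalize(b)))

# Hypothetical "learned" projections: 4-D image features and 3-D text
# features are both mapped into the same 2-D shared embedding space.
W_IMAGE = [[1.0, 0.5, 0.0, 0.0],
           [0.0, 0.0, 1.0, 0.5]]
W_TEXT = [[1.0, 0.0, 0.2],
          [0.0, 1.0, 0.2]]

def embed_image(features):
    return l2_normalize(project(W_IMAGE, features))

def embed_text(features):
    return l2_normalize(project(W_TEXT, features))

# Made-up encoder outputs for two photos and two words.
dog_photo = embed_image([0.9, 0.8, 0.1, 0.0])
cat_photo = embed_image([0.1, 0.0, 0.9, 0.7])
word_dog = embed_text([1.0, 0.0, 0.1])
word_cat = embed_text([0.0, 1.0, 0.1])

print(cosine_similarity(dog_photo, word_dog))  # close to 1
print(cosine_similarity(dog_photo, word_cat))  # much smaller
```

Although the image and text features start with different dimensions, both end up as unit vectors in the same 2‑D space, where a photo of a dog lands near the word "dog". In a real system the projections are learned from paired data rather than chosen by hand.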

This topic complements our article on Chain‑of‑Thought prompting, which also aims at deeper reasoning. While CoT improves step‑by‑step reasoning within a single text modality, multimodal learning broadens the kinds of input a model can reason about.

Why Is Multimodal Learning Important?

The drive toward multimodal learning is fueled by both practical necessity and the pursuit of more robust AI. First, the world around us is inherently multimodal; a self‑driving car must fuse camera feeds, LiDAR points, and radar signals to navigate safely. Second, multiple modalities often provide complementary information. A silent video of a bustling street conveys motion and spatial layout, but adding the audio track reveals honking horns and conversations, enriching the scene’s context. Third, multimodal learning can improve robustness: if one modality is noisy or missing (e.g., poor lighting in an image), the model can still rely on others (e.g., accompanying text or audio) to make accurate predictions.

Moreover, these systems unlock entirely new capabilities that are impossible with unimodal models. Tasks like text‑to‑image generation, visual question answering (VQA), and automated video captioning all depend on the tight integration of language and vision. As a result, multimodal learning has become a cornerstone of generative AI platforms such as OpenAI’s GPT‑4V and Google’s Gemini.

Core Techniques in Multimodal Learning

Building effective multimodal models requires specialized architectures and training objectives. Below are the most prominent techniques driving progress in multimodal learning.

1. Early, Intermediate, and Late Fusion

A fundamental design choice is when to combine information from different modalities. Early fusion concatenates raw features (e.g., pixel values and word embeddings) at the input layer before feeding them into a single neural network. Conversely, late fusion processes each modality independently with separate encoders and merges their outputs only at the decision stage (e.g., averaging prediction scores). A more flexible alternative is intermediate fusion, where features are mixed across modalities at multiple layers of a deep network, allowing complex cross‑modal interactions. The optimal strategy depends heavily on the task and the degree of alignment between modalities.
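The difference between early and late fusion shows up clearly in a toy binary classifier. The sketch below is a minimal illustration with hypothetical features and weights (for example, for an assumed "is this a dog?" task). Intermediate fusion, which mixes hidden representations across several layers, needs a deeper network and is left out.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def linear(weights, bias, features):
    return sum(w * x for w, x in zip(weights, features)) + bias

def early_fusion(image_feats, text_feats, weights, bias):
    """Concatenate the modalities at the input and score them with ONE model."""
    joint = image_feats + text_feats  # list concatenation = feature concatenation
    return sigmoid(linear(weights, bias, joint))

def late_fusion(image_feats, text_feats, image_model, text_model):
    """Score each modality with its OWN model, then average the probabilities."""
    p_image = sigmoid(linear(*image_model, image_feats))
    p_text = sigmoid(linear(*text_model, text_feats))
    return (p_image + p_text) / 2

# Hypothetical features and weights.
image_feats = [0.9, 0.2]
text_feats = [0.7]
print(early_fusion(image_feats, text_feats, weights=[1.5, -0.5, 2.0], bias=-1.0))
print(late_fusion(image_feats, text_feats,
                  image_model=([1.5, -0.5], -0.5), text_model=([2.0], -0.5)))
```

In early fusion a single set of weights sees both modalities at once, so it can in principle learn interactions between them. Late fusion keeps the encoders independent, which makes it simple to train each branch separately and to fall back on one branch when the other modality is unavailable.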

2. Contrastive Learning (e.g., CLIP)

One of the most influential breakthroughs in recent years is contrastive pre‑training, exemplified by OpenAI’s CLIP (Contrastive Language–Image Pre‑training). CLIP trains an image encoder and a text encoder on roughly 400 million (image, text) pairs collected from the internet. Within each training batch, the objective maximizes the similarity between each image and its own caption while minimizing it for every mismatched pairing. As a result, CLIP learns a shared embedding space where, for example, an image of a sunset maps close to the text “a beautiful sunset.” This enables zero‑shot classification: the model can assign images to categories it was never explicitly trained to classify, simply by comparing an image embedding with the embeddings of text descriptions of each category.
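CLIP‑style training can be sketched as a symmetric cross‑entropy over the batch's image‑text similarity matrix: each image should pick out its own caption, and each caption its own image. The plain‑Python sketch below assumes the encoders have already produced embedding vectors. The temperature of 0.07 mirrors the initial value reported for CLIP's learnable temperature, but here it is just a fixed, assumed constant. The hypothetical `zero_shot_classify` helper shows how the same similarity scores yield zero‑shot predictions.

```python
import math

def normalize(v):
    n = math.sqrt(sum(x * x for x in v))
    return [x / n for x in v]

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def cross_entropy(logits, target):
    """Numerically stable -log(softmax(logits)[target])."""
    m = max(logits)
    log_sum_exp = m + math.log(sum(math.exp(z - m) for z in logits))
    return log_sum_exp - logits[target]

def clip_loss(image_embs, text_embs, temperature=0.07):
    """Symmetric contrastive loss; pair i is (image_embs[i], text_embs[i])."""
    imgs = [normalize(v) for v in image_embs]
    txts = [normalize(v) for v in text_embs]
    n = len(imgs)
    # logits[i][j]: scaled cosine similarity of image i and text j.
    logits = [[dot(imgs[i], txts[j]) / temperature for j in range(n)] for i in range(n)]
    # Image-to-text: row i should peak at column i.
    loss_i2t = sum(cross_entropy(logits[i], i) for i in range(n)) / n
    # Text-to-image: column j should peak at row j.
    loss_t2i = sum(cross_entropy([logits[i][j] for i in range(n)], j) for j in range(n)) / n
    return (loss_i2t + loss_t2i) / 2

def zero_shot_classify(image_emb, class_text_embs):
    """Return the index of the class description most similar to the image."""
    img = normalize(image_emb)
    scores = [dot(img, normalize(t)) for t in class_text_embs]
    return max(range(len(scores)), key=scores.__getitem__)

# Hypothetical 2-D embeddings for a batch of two (image, caption) pairs.
images = [[1.0, 0.1], [0.1, 1.0]]
captions = [[0.9, 0.0], [0.0, 0.9]]
print(clip_loss(images, captions))                   # low: pairs match
print(clip_loss(images, list(reversed(captions))))   # high: pairs swapped
print(zero_shot_classify([0.2, 0.9], captions))      # index of the closer caption
```

Every other item in the batch acts as a negative example, which is why contrastive pre‑training benefits from large batches.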

3. Cross-Attention Mechanisms

Transformer architectures have revolutionized multimodal learning through cross‑attention layers. In a standard transformer, self‑attention lets tokens within a single sequence (and thus usually a single modality) attend to one another. Cross‑attention extends this by taking the queries from one modality and the keys and values from another, so tokens in one modality can attend to tokens in the other. For instance, in a visual question answering system, the text tokens representing the question can attend to specific regions of the image features. This mechanism provides fine‑grained, dynamic fusion that is highly effective for tasks requiring detailed alignment, such as grounding phrases to image regions.
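A single head of cross‑attention is scaled dot‑product attention in which the queries come from the text and the keys and values come from the image. The minimal sketch below assumes the question tokens and image regions have already been projected into queries, keys, and values. Real layers learn separate projection matrices for each, which are omitted here, and all vectors are made‑up numbers.

```python
import math

def softmax(xs):
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def cross_attention(text_queries, image_keys, image_values):
    """Each text query attends over all image regions (keys/values)."""
    d = len(image_keys[0])
    outputs, weights = [], []
    for q in text_queries:
        # Scaled dot-product score between this text token and every region.
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d) for k in image_keys]
        w = softmax(scores)
        # Output = attention-weighted sum of the region value vectors.
        out = [sum(w[r] * image_values[r][c] for r in range(len(image_values)))
               for c in range(len(image_values[0]))]
        outputs.append(out)
        weights.append(w)
    return outputs, weights

# Two hypothetical question tokens attending over three image regions.
question_queries = [[0.0, 2.0], [1.0, 1.0]]
region_keys = [[2.0, 0.0], [0.0, 2.0], [0.5, 0.5]]
region_values = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
outputs, weights = cross_attention(question_queries, region_keys, region_values)
print(weights[0])  # the first token attends mostly to region 1
```

Each row of `weights` is a probability distribution over image regions, which is what makes cross‑attention maps useful for inspecting which part of the image a word is grounded in.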

4. Generative Multimodal Models

Beyond understanding, multimodal learning also powers generation. Models like DALL·E, Midjourney, and Stable Diffusion take textual prompts and synthesize novel images. Similarly, systems such as AudioLDM generate audio clips from text descriptions. These generative models typically use diffusion processes or autoregressive transformers conditioned on text embeddings. The ability to create across modalities is opening new frontiers in creative tools, content creation, and design.
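For the diffusion case, one common formulation is the DDPM‑style noise‑prediction objective, shown here as an illustrative assumption since individual systems (latent diffusion models, for instance) vary in the details. A clean sample is noised, and a network is trained to predict that noise while receiving the text embedding $c$ as conditioning:

```latex
x_t = \sqrt{\bar{\alpha}_t}\, x_0 + \sqrt{1 - \bar{\alpha}_t}\, \epsilon,
\qquad \epsilon \sim \mathcal{N}(0, I)

\mathcal{L}(\theta) = \mathbb{E}_{x_0,\, c,\, t,\, \epsilon}
\left[ \left\lVert \epsilon - \epsilon_\theta(x_t, t, c) \right\rVert_2^2 \right]
```

Here $x_0$ is a training image (or, in latent diffusion, its compressed latent), $t$ is a randomly sampled timestep, $\bar{\alpha}_t$ is the cumulative noise schedule, and $\epsilon_\theta$ is the denoising network. At generation time the model starts from pure noise and repeatedly removes its predicted noise while conditioned on the prompt embedding $c$.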

Key Applications of Multimodal Learning

The impact of multimodal learning is already visible across numerous industries and research domains. Here are several prominent applications:

- Visual Question Answering (VQA): Given an image and a natural language question (e.g., “What color is the car?”), the model must reason over both modalities to produce an answer. This has implications for accessibility tools that describe scenes to visually impaired users.

- Image and Video Captioning: Automatically generating descriptive text for visual content. This is invaluable for search engine indexing, social media accessibility, and video summarization.

- Text‑to‑Image and Text‑to‑Video Generation: Platforms like DALL·E 3 and RunwayML allow users to create visual media from written prompts, democratizing creative expression.

- Speech Recognition with Visual Context: Audio‑visual speech recognition combines lip movements with the audio signal to improve accuracy in noisy environments, which makes it promising for applications such as hearing aids and meeting transcription tools.

- Multimodal Sentiment Analysis: By analyzing facial expressions (video), tone of voice (audio), and spoken words (text), models can gauge human emotion more accurately than from text alone. This is powerful for customer service analytics and mental health monitoring.

- Robotics and Embodied AI: Robots must fuse visual perception, language instructions, and tactile feedback to manipulate objects and navigate complex environments.

Challenges in Multimodal Learning

Despite impressive progress, multimodal learning faces several significant hurdles. One major challenge is data alignment and synchronization. In many real‑world datasets, the correspondence between modalities is noisy or only loosely aligned (e.g., social media posts where an image may not perfectly match the caption). Furthermore, missing modalities during inference—such as a video without audio—require models that are robust to incomplete inputs.

Another obstacle is computational cost. Processing high‑dimensional data like video and audio streams demands substantial memory and processing power, making training and deployment expensive. Additionally, modality bias can occur, where the model over‑relies on one dominant modality (often text) and fails to leverage others effectively. Researchers are actively developing techniques like modality dropout and balanced sampling to mitigate these issues.
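Modality dropout fits in a few lines. During training, whole modalities are randomly blanked out, so the model cannot lean on a single one and learns to cope with missing inputs. This is a minimal sketch under assumed conventions: features are stored as plain lists in a dict keyed by modality name, a dropped modality is replaced by zeros, and at least one modality is always kept. Real implementations differ in how they represent a missing modality, for example by using a learned placeholder embedding. The function name `modality_dropout` is hypothetical.

```python
import random

def modality_dropout(features_by_modality, p_drop=0.3, rng=None):
    """Randomly replace whole modalities with zero vectors during training.

    features_by_modality: dict mapping a modality name to its feature list.
    At least one modality is always kept so an example is never fully blank.
    """
    rng = rng if rng is not None else random.Random()
    names = list(features_by_modality)
    dropped = {name for name in names if rng.random() < p_drop}
    if names and len(dropped) == len(names):
        # Everything was dropped: restore one modality chosen at random.
        dropped.discard(rng.choice(names))
    return {
        name: [0.0] * len(feats) if name in dropped else list(feats)
        for name, feats in features_by_modality.items()
    }

# Hypothetical per-modality features for one training example.
example = {"image": [0.4, 0.9], "audio": [0.3], "text": [0.8, 0.1, 0.5]}
rng = random.Random(0)
for _ in range(3):
    print(modality_dropout(example, p_drop=0.5, rng=rng))
```

Because a different subset of modalities disappears in each batch, the model is trained on the same kinds of incomplete inputs it may encounter at inference time, and no single modality can dominate every training example.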

Finally, evaluating multimodal systems is inherently complex. Standard metrics such as accuracy for VQA or FID (Fréchet Inception Distance) for image generation capture only part of the picture. New benchmarks, such as MMMU (Massive Multi‑discipline Multimodal Understanding and Reasoning), are pushing the field toward more holistic assessment.
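Even "accuracy" needs care here. The VQA benchmark collects ten human answers per question and scores a prediction by consensus rather than against a single ground truth: roughly, an answer counts as fully correct if at least three annotators gave it. The sketch below implements that rule and, as the official VQA evaluation does, averages it over every subset that leaves out one annotator. The answer normalization here (trimming and lowercasing) is a simplified assumption; the official script also normalizes punctuation, articles, and number words. The function names are hypothetical.

```python
from itertools import combinations

def normalize_answer(answer):
    # Simplified normalization; the official VQA script does considerably more.
    return answer.strip().lower()

def vqa_accuracy(predicted, human_answers):
    """Consensus accuracy: min(#matching annotators / 3, 1), averaged over
    every subset of annotators that leaves exactly one of them out."""
    pred = normalize_answer(predicted)
    answers = [normalize_answer(a) for a in human_answers]
    subsets = list(combinations(answers, len(answers) - 1))
    scores = [min(sum(a == pred for a in subset) / 3.0, 1.0) for subset in subsets]
    return sum(scores) / len(subsets)

# Ten hypothetical annotator answers to "What color is the car?"
answers = ["red"] * 2 + ["maroon"] * 8
print(vqa_accuracy("Red", answers))     # partial credit: only two annotators agree
print(vqa_accuracy("maroon", answers))  # full credit
```

The partial credit reflects that "red" is a defensible answer that most annotators did not choose, something a plain exact‑match metric cannot express.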

The Future of Multimodal Learning

Looking ahead, multimodal learning is poised to become even more integrated and seamless. We are likely to see the rise of any‑to‑any multimodal models: unified architectures that can accept and generate any combination of text, images, audio, and video. Projects like Google’s Gemini, which natively accepts text, images, audio, and video, and Meta’s ImageBind, which learns a single embedding space spanning six modalities (images, text, audio, depth, thermal, and IMU data), are early steps in this direction. For a deeper dive into how reasoning frameworks enhance such capabilities, check out our post on the Tree‑of‑Thought framework.

Moreover, the combination of multimodal learning with embodied agents will bring us closer to truly interactive AI assistants that perceive the world as we do. As models become more efficient and datasets more comprehensive, these technologies will transition from research labs into everyday applications—from personalized education tutors that understand both diagrams and explanations, to healthcare diagnostics that synthesize medical images, patient history, and genomic data. The journey of bridging text, image, and audio inputs has only just begun, and its trajectory promises a future where AI understands our world in all its rich, sensory complexity.

Conclusion

In summary, multimodal learning is revolutionizing artificial intelligence by enabling models to fuse information from text, images, and audio into cohesive, context‑aware representations. Through techniques like contrastive pre‑training, cross‑attention, and thoughtful fusion strategies, these systems are achieving remarkable feats—from describing the visual world in natural language to generating creative content across media types. While challenges in data alignment, computational cost, and evaluation remain, the rapid pace of innovation suggests that truly integrated AI is on the horizon. As developers, researchers, and enthusiasts, understanding and leveraging multimodal learning will be essential to building the next generation of intelligent applications.

Further Reading: Dive deeper into reasoning with our articles on Chain‑of‑Thought Prompting and the Tree‑of‑Thought Framework. Explore the research behind CLIP at OpenAI and stay updated with the latest multimodal benchmarks at arXiv.