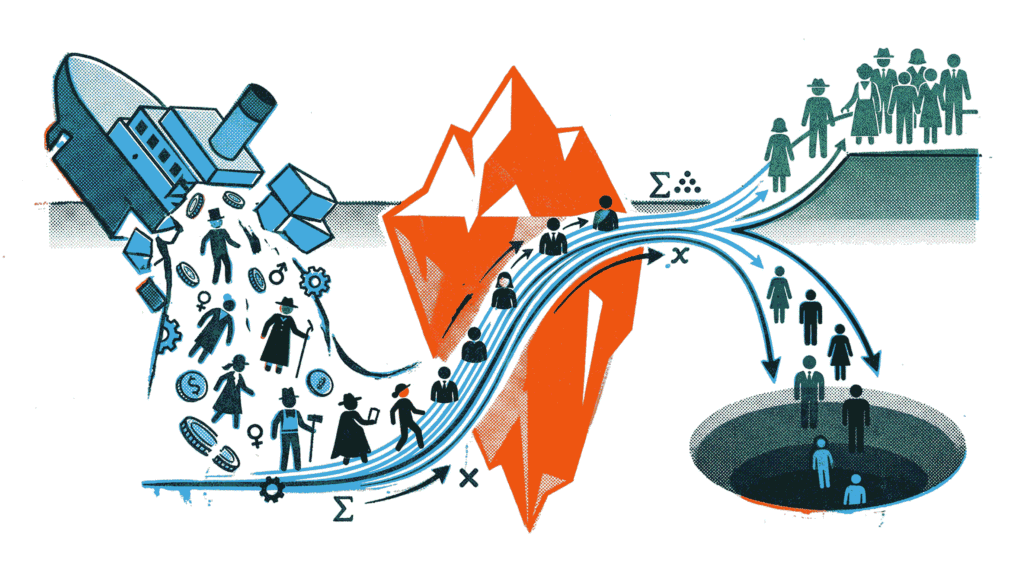

This project builds a logistic regression model to predict passenger survival on the RMS Titanic. Using the classic Kaggle Titanic dataset, we go through a complete classification workflow: data cleaning, feature engineering, model training, and evaluation with precision, recall, F1‑score, confusion matrix, and ROC curve.

Dataset Source: Kaggle – Titanic: Machine Learning from Disaster

GitHub Repository: View on GitHub – The complete code, visual outputs, and downloadable notebook are available in the repository. Note: Only the code blocks are displayed below; all generated plots and results can be found in the GitHub repo.

About the Dataset

The training set contains 891 passengers with labels indicating survival (1 = survived, 0 = deceased) and features such as passenger class, name, sex, age, siblings/spouses aboard, parents/children aboard, ticket number, fare, cabin, and port of embarkation. The dataset includes missing values that require cleaning before modeling.

Project Objectives

- Handle missing data and engineer meaningful features (Title, FamilySize).

- Train a logistic regression classifier to predict survival.

- Evaluate model performance using precision, recall, F1‑score, and confusion matrix.

- Visualise the ROC curve and compute AUC to assess discriminative power.

- Compare the model against a dummy baseline classifier.

Analysis Roadmap

- Data Loading & Missing Value Exploration – Load train.csv and inspect missing values.

- Handling Missing Data – Impute Age with median, fill Embarked with mode, drop Cabin.

- Feature Engineering – Extract Title from Name and create FamilySize.

- Encoding & Column Dropping – Encode categorical variables and remove unused columns.

- Train/Test Split & Model Fitting – Split 80/20 and train logistic regression.

- Classification Report – Print precision, recall, and F1 for both classes.

- Confusion Matrix Heatmap – Visualise true/false positives and negatives.

- ROC Curve & AUC Score – Plot ROC curve and compare with dummy baseline.

Note: All code is written in Python using Pandas, scikit‑learn, Matplotlib, and Seaborn.

Step 1: Data Loading & Missing Value Exploration

The first step loads the training data from train.csv and examines which columns contain missing values. This informs our subsequent cleaning strategy.

What the Code Does

- Load the CSV file using pd.read_csv().

- Call df.isnull().sum() to count missing entries per column.

- Output reveals that Age has 177 missing values, Cabin has 687, and Embarked has 2.
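To put those counts in proportion, the short sketch below converts them into percentages of the 891 training rows. The counts are the ones quoted above; the variable names are illustrative and not part of the original notebook.

```python
import pandas as pd

# Missing-value counts reported above for the 891-row training set
total_rows = 891
missing_counts = pd.Series({"Age": 177, "Cabin": 687, "Embarked": 2})

# Share of rows with a missing entry, expressed as a percentage
missing_pct = (missing_counts / total_rows * 100).round(1)
print(missing_pct)
```

Roughly a fifth of the Age values and more than three quarters of the Cabin values are missing. On the loaded DataFrame, df.isnull().mean() * 100 should give the same percentages directly.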

Code Preview (what follows):

• Import pandas and numpy

• df = pd.read_csv('train.csv')

• Print df.isnull().sum()

import pandas as pd

import numpy as np

# Load the training dataset

df = pd.read_csv('train.csv')

# Explore missing values

print("Missing values per column:")

print(df.isnull().sum())

Step 2: Handling Missing Data (Impute and Drop)

Based on the missing value analysis, we apply appropriate imputation strategies. Age is filled with the median, Embarked with the most frequent value (mode), and the largely incomplete Cabin column is dropped entirely.

What the Code Does

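- Fill missing Age values with the median: df['Age'] = df['Age'].fillna(df['Age'].median())

- Fill missing Embarked values with the mode: df['Embarked'] = df['Embarked'].fillna(df['Embarked'].mode()[0])

- Drop the Cabin column: df = df.drop(columns=['Cabin'])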

- After cleaning, df.isnull().sum() confirms zero missing values.
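The same three operations can be tried on a tiny frame where the result is easy to check by hand. This is only a sketch: the toy values below are made up and merely mimic the shape of the Titanic columns.

```python
import pandas as pd

# Toy frame standing in for the Titanic columns (values are made up)
toy = pd.DataFrame({
    "Age": [22.0, None, 30.0, 40.0],
    "Embarked": ["S", "C", None, "S"],
    "Cabin": [None, "C85", None, None],
})

# The median of the observed ages (22, 30, 40) is 30
toy["Age"] = toy["Age"].fillna(toy["Age"].median())

# mode() returns a Series, so [0] picks the most frequent value
toy["Embarked"] = toy["Embarked"].fillna(toy["Embarked"].mode()[0])

# Drop the mostly empty column
toy = toy.drop(columns=["Cabin"])

print(toy)
print(toy.isnull().sum().sum())  # total number of remaining missing values
```

Assigning the result back to the column, rather than calling fillna with inplace=True on a column selection, avoids the chained-assignment warnings that recent pandas versions raise.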

Code Preview (what follows):

• Impute Age with median

• Fill Embarked with mode

• Drop Cabin column

• Print missing values after cleaning

# Impute Age with the median

df['Age'] = df['Age'].fillna(df['Age'].median())

# Fill Embarked with the mode

mode_embarked = df['Embarked'].mode()[0]

df['Embarked'] = df['Embarked'].fillna(mode_embarked)

# Drop the Cabin column entirely

df = df.drop(columns=['Cabin'])

print("Missing values after cleaning:")

print(df.isnull().sum())

Step 3: Feature Engineering

Creating new features from existing data can significantly improve model performance. We extract the Title (Mr, Mrs, Miss, Master, etc.) from the passenger’s name and combine SibSp and Parch to form FamilySize.

What the Code Does

- Extract Title using regex: df['Name'].str.extract(r' ([A-Za-z]+)\.', expand=False)

- Group rare titles (Lady, Countess, Capt, Col, Dr, etc.) into a single ‘Rare’ category.

- Standardise similar titles: Mlle/Ms → Miss, Mme → Mrs.

- Create FamilySize = SibSp + Parch + 1.
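To see what the regular expression actually captures, here is a minimal sketch on a few made-up names written in the dataset's "Surname, Title. Given names" format. The names and the shortened rare-title list are illustrative assumptions, not rows from train.csv.

```python
import pandas as pd

# Made-up names in the dataset's "Surname, Title. Given names" format
names = pd.Series([
    "Doe, Mr. John",
    "Roe, Mlle. Anne",
    "Smith, Mme. Marie",
    "Brown, Dr. Alan",
])

# Capture the run of letters that follows a space and precedes a period
titles = names.str.extract(r" ([A-Za-z]+)\.", expand=False)

# Standardise variants, then fold uncommon titles into 'Rare'
titles = titles.replace({"Mlle": "Miss", "Ms": "Miss", "Mme": "Mrs"})
titles = titles.replace(["Dr", "Rev", "Col"], "Rare")

print(titles.tolist())
```

The pattern relies on the title being the first word that ends in a period, which holds for this name format but would need adjusting for differently formatted names.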

Code Preview (what follows):

• Extract Title with regex

• Replace rare titles

• Compute FamilySize

• Display sample of new features

# Extract Title from Name (using regex to pull the word before the period)

df['Title'] = df['Name'].str.extract(r' ([A-Za-z]+)\.', expand=False)

# Group rare titles together to avoid sparse columns

rare_titles = ['Lady', 'Countess','Capt', 'Col', 'Don', 'Dr', 'Major', 'Rev', 'Sir', 'Jonkheer', 'Dona']

df['Title'] = df['Title'].replace(rare_titles, 'Rare')

df['Title'] = df['Title'].replace(['Mlle', 'Ms'], 'Miss')

df['Title'] = df['Title'].replace('Mme', 'Mrs')

# Create FamilySize (SibSp + Parch + 1 for the passenger themselves)

df['FamilySize'] = df['SibSp'] + df['Parch'] + 1

print("Sample of newly engineered features:")

print(df[['Name', 'Title', 'FamilySize']].head())

Step 4: Encoding & Dropping Unneeded Columns

Machine learning models require numeric input. We encode categorical variables and drop columns that are no longer useful (e.g., raw name, ticket, passenger ID).

What the Code Does

- Encode Sex: female → 1, male → 0.

- One‑hot encode Embarked and Title using pd.get_dummies() with drop_first=True to avoid multicollinearity.

- Drop Name, Ticket, and PassengerId columns.

- Print the final shape and column list to verify readiness for modeling.
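The effect of drop_first=True is easiest to see on a three-row example. The sketch below uses a made-up frame with one passenger per port; the column names mirror the dataset, the values do not.

```python
import pandas as pd

# Made-up rows, one per embarkation port
toy = pd.DataFrame({
    "Sex": ["male", "female", "female"],
    "Embarked": ["S", "C", "Q"],
})

# Binary encoding for Sex
toy["Sex"] = toy["Sex"].map({"male": 0, "female": 1})

# One-hot encode Embarked; the first category (C) becomes the reference level
encoded = pd.get_dummies(toy, columns=["Embarked"], drop_first=True)

print(encoded.columns.tolist())
print(encoded)
```

The passenger who embarked at C is represented by both remaining dummy columns being false (or 0, depending on the pandas version), so no information is lost by dropping the first level.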

Code Preview (what follows):

• Map Sex to 0/1

• pd.get_dummies() on Embarked and Title

• Drop Name, Ticket, PassengerId

• Print shape and columns

# Encode Sex: female -> 1, male -> 0

df['Sex'] = df['Sex'].map({'male': 0, 'female': 1})

# One-hot encode categorical features (Embarked and Title)

df = pd.get_dummies(df, columns=['Embarked', 'Title'], drop_first=True)

# Drop identifier and free-text columns that are no longer needed

df = df.drop(columns=['Name', 'Ticket', 'PassengerId'])

print("Current dataset shape ready for modeling:", df.shape)

print("Columns:", df.columns.tolist())

Step 5: Train/Test Split & Model Fitting

We split the data into training (80%) and testing (20%) sets using a fixed random seed for reproducibility. A logistic regression model is then trained on the training data.

What the Code Does

- Define features X (all columns except Survived) and target y (Survived).

- Split using train_test_split(X, y, test_size=0.2, random_state=42).

- Instantiate LogisticRegression(max_iter=1000) and fit with .fit(X_train, y_train).

- Print confirmation message upon successful training.
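As a quick check of what an 80/20 split means for 891 rows, the sketch below runs train_test_split on placeholder arrays of the same length. The arrays X_demo and y_demo are stand-ins invented for this illustration and carry no real passenger data.

```python
import numpy as np
from sklearn.model_selection import train_test_split

# Placeholder features and labels with the training set's row count
X_demo = np.zeros((891, 1))
y_demo = np.arange(891) % 2  # arbitrary alternating labels

X_tr, X_te, y_tr, y_te = train_test_split(
    X_demo, y_demo, test_size=0.2, random_state=42
)

# The test size is rounded up, so 20% of 891 becomes 179 rows
print(len(X_tr), len(X_te))
```

Passing stratify=y to train_test_split is an option if both splits should keep the same survival ratio; the code in this post does not do so.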

Code Preview (what follows):

• Import train_test_split, LogisticRegression

• Define X and y

• Split data 80/20

• Fit logistic regression model

from sklearn.model_selection import train_test_split

from sklearn.linear_model import LogisticRegression

# Define features (X) and target (y)

X = df.drop(columns=['Survived'])

y = df['Survived']

# Split the data 80/20

X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.2, random_state=42)

# Initialize and fit the Logistic Regression model

# max_iter is raised to 1000 (the default is 100) so the solver (lbfgs by default) has enough iterations to converge

model = LogisticRegression(max_iter=1000)

model.fit(X_train, y_train)

print("Logistic Regression model trained successfully!")

Step 6: Classification Report

After making predictions on the test set, we generate a classification report that shows precision, recall, and F1‑score for both classes (survived and not survived), along with overall accuracy.

What the Code Does

- Predict test labels: y_pred = model.predict(X_test).

- Use classification_report() from sklearn.metrics.

- Print the report with custom target names for readability.
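For readers who want to see where the numbers in the report come from, the sketch below computes precision, recall, and F1 for the positive class by hand on ten made-up labels. The label vectors are illustrative and are not predictions from the model above.

```python
from sklearn.metrics import f1_score, precision_score, recall_score

# Made-up ground truth and predictions (1 = survived, 0 = not survived)
y_true_demo = [1, 1, 1, 1, 0, 0, 0, 0, 0, 0]
y_pred_demo = [1, 1, 1, 0, 1, 0, 0, 0, 0, 0]

pairs = list(zip(y_true_demo, y_pred_demo))
tp = sum(1 for t, p in pairs if t == 1 and p == 1)  # correctly predicted survivors
fp = sum(1 for t, p in pairs if t == 0 and p == 1)  # predicted survived, did not
fn = sum(1 for t, p in pairs if t == 1 and p == 0)  # predicted not survived, did

precision = tp / (tp + fp)  # of predicted survivors, how many survived
recall = tp / (tp + fn)     # of actual survivors, how many were found
f1 = 2 * precision * recall / (precision + recall)

print(precision, recall, f1)

# scikit-learn's helpers give the same values for the positive class
print(precision_score(y_true_demo, y_pred_demo),
      recall_score(y_true_demo, y_pred_demo),
      f1_score(y_true_demo, y_pred_demo))
```

classification_report applies the same formulas once per class and then adds the accuracy and averaged rows.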

Code Preview (what follows):

• Import classification_report

• y_pred = model.predict(X_test)

• Print classification report

from sklearn.metrics import classification_report

# Predict on the test set

y_pred = model.predict(X_test)

# Print the classification report

print("Classification Report:")

print(classification_report(y_test, y_pred, target_names=['Not Survived (0)', 'Survived (1)']))

Step 7: Confusion Matrix Heatmap

A confusion matrix provides a detailed breakdown of correct and incorrect predictions. We visualise it as a heatmap to easily identify false positives (predicted survived but did not) and false negatives (predicted deceased but survived).

What the Code Does

- Compute confusion matrix using confusion_matrix(y_test, y_pred).

- Plot with sns.heatmap(), annotating each cell with counts.

- Add descriptive labels for axes and title.
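It helps to know how scikit-learn lays out the matrix before reading the heatmap: rows are the true labels and columns are the predicted labels, both in sorted label order. The sketch below shows this on seven made-up labels, which are illustrative only.

```python
from sklearn.metrics import confusion_matrix

# Made-up ground truth and predictions (1 = survived, 0 = not survived)
y_true_demo = [0, 0, 0, 1, 1, 1, 1]
y_pred_demo = [0, 0, 1, 1, 1, 0, 0]

cm_demo = confusion_matrix(y_true_demo, y_pred_demo)
print(cm_demo)

# For binary labels, ravel() yields the cells row by row:
# true negatives, false positives, false negatives, true positives
tn, fp, fn, tp = cm_demo.ravel()
print(tn, fp, fn, tp)
```

With this layout the top-right cell of the heatmap holds the false positives and the bottom-left cell holds the false negatives.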

Code Preview (what follows):

• Import confusion_matrix, seaborn, matplotlib.pyplot

• Compute confusion matrix

• Plot heatmap with annotations

import matplotlib.pyplot as plt

import seaborn as sns

from sklearn.metrics import confusion_matrix

# Compute the confusion matrix

cm = confusion_matrix(y_test, y_pred)

# Plot as a heatmap

plt.figure(figsize=(6, 4))

sns.heatmap(cm, annot=True, fmt='d', cmap='Blues',
            xticklabels=['Predicted: Dead', 'Predicted: Survived'],
            yticklabels=['Actual: Dead', 'Actual: Survived'])

plt.title('Confusion Matrix')

plt.ylabel('True Label')

plt.xlabel('Predicted Label')

plt.show()

Step 8: ROC Curve & AUC Score

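The ROC (Receiver Operating Characteristic) curve plots the true positive rate against the false positive rate at various threshold settings. The Area Under the Curve (AUC) summarises the model’s ability to discriminate between classes: 1.0 means perfect ranking and 0.5 is no better than chance. A dummy baseline (predicting the most frequent class) is included for comparison. Because it assigns the same score to every passenger, its ROC curve coincides with the chance diagonal and its AUC is 0.5.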

What the Code Does

- Obtain predicted probabilities for the positive class with model.predict_proba(X_test)[:, 1].

- Create a dummy classifier using DummyClassifier(strategy='most_frequent') and get its probabilities.

- Compute FPR, TPR, and AUC for both the logistic regression model and the dummy baseline using roc_curve() and auc().

- Plot both ROC curves along with the random chance diagonal.
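A small worked case shows how the score ranking drives the AUC. The sketch below compares scores that mostly rank positives above negatives with a constant score of the kind a most-frequent dummy produces. The four labels and scores are made up for illustration.

```python
import numpy as np
from sklearn.metrics import auc, roc_curve

# Made-up labels: two negatives followed by two positives
y_true_demo = np.array([0, 0, 1, 1])

# Scores that rank most positives above most negatives
informative_scores = np.array([0.1, 0.4, 0.35, 0.8])
fpr_i, tpr_i, _ = roc_curve(y_true_demo, informative_scores)
auc_informative = auc(fpr_i, tpr_i)

# A constant score carries no ranking information at all
constant_scores = np.zeros(4)
fpr_c, tpr_c, _ = roc_curve(y_true_demo, constant_scores)
auc_constant = auc(fpr_c, tpr_c)

print(auc_informative, auc_constant)
```

The constant scores produce a curve with only the end points (0, 0) and (1, 1), which is why the dummy baseline in the plot lies on the diagonal.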

Code Preview (what follows):

• Import roc_curve, auc, DummyClassifier

• Get probabilities for logistic regression and dummy

• Compute ROC curves

• Plot with labels and AUC values

from sklearn.metrics import roc_curve, auc

from sklearn.dummy import DummyClassifier

# Get probability scores for the positive class (Survived = 1)

y_prob = model.predict_proba(X_test)[:, 1]

# Create a baseline "Dummy" classifier that predicts the most frequent class

dummy_clf = DummyClassifier(strategy="most_frequent")

dummy_clf.fit(X_train, y_train)

y_dummy_prob = dummy_clf.predict_proba(X_test)[:, 1]

# Calculate False Positive Rate (FPR) and True Positive Rate (TPR)

fpr, tpr, _ = roc_curve(y_test, y_prob)

roc_auc = auc(fpr, tpr)

fpr_dummy, tpr_dummy, _ = roc_curve(y_test, y_dummy_prob)

roc_auc_dummy = auc(fpr_dummy, tpr_dummy)

# Plot the ROC Curve

plt.figure(figsize=(8, 6))

plt.plot(fpr, tpr, color='darkorange', lw=2, label=f'Logistic Regression (AUC = {roc_auc:.2f})')

plt.plot(fpr_dummy, tpr_dummy, color='navy', lw=2, linestyle='--', label=f'Dummy Baseline (AUC = {roc_auc_dummy:.2f})')

# Plot the diagonal line representing random chance

plt.plot([0, 1], [0, 1], color='gray', lw=1, linestyle='--')

plt.xlim([0.0, 1.0])

plt.ylim([0.0, 1.05])

plt.xlabel('False Positive Rate (FPR)')

plt.ylabel('True Positive Rate (TPR)')

plt.title('Receiver Operating Characteristic (ROC) Curve')

plt.legend(loc="lower right")

plt.show()