Beyond Text: Letting Language Models Write and Run Code

Large language models (LLMs) have demonstrated remarkable abilities in generating human‑like text, summarizing documents, and even crafting poetry. Yet, when faced with complex reasoning tasks—especially those involving multi‑step arithmetic, symbolic manipulation, or algorithmic logic—their performance often falters. They may produce plausible‑sounding but incorrect answers. This is where Program-Aided Language Models (PAL) step in. Instead of forcing the LLM to compute the final answer internally, PAL prompts the model to generate a program—typically Python code—that can be executed by an external interpreter to produce the correct result. By offloading computation to a deterministic runtime, Program-Aided Language Models achieve significantly higher accuracy on benchmarks like GSM8K and SVAMP. In this article, we will explore the PAL methodology, its workflow, and why it represents a powerful evolution in prompt engineering.

The Limitation of Pure Text Reasoning

Before diving into PAL, it’s helpful to understand the problem it solves. Traditional approaches like Chain‑of‑Thought (CoT) prompting encourage models to verbalize their reasoning step‑by‑step in natural language. For instance, when asked “If Roger has 5 balls and buys 2 cans of 3 balls each, how many balls does he have?”, a CoT prompt guides the model to output “Roger started with 5. 2 cans × 3 balls = 6. 5 + 6 = 11.” This technique improves accuracy substantially. However, it still relies on the language model to perform arithmetic calculations within its own parameters—a task for which transformers are not natively optimized. Consequently, errors in multi‑step calculations remain common, especially with larger numbers or more complex expressions.

Another limitation is that CoT reasoning is confined to linear, sequential thought. It struggles with tasks that require iteration, conditionals, or variable state. This is precisely where Program-Aided Language Models offer a breakthrough. By generating executable code instead of plain reasoning steps, PAL leverages the language model’s strength in understanding and structuring a problem, while delegating the actual computation to a specialized, deterministic interpreter.
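As a rough illustration of the kind of task this paragraph has in mind, take a made-up problem (not from the PAL paper): add up every whole number from 1 to 100 that is divisible by 3 or 5. Written out in natural language, this needs dozens of error-prone steps. As a program it is one loop, one conditional, and one running total. The variable names below are illustrative only.

```python
# Hypothetical PAL-style program for: "Add up every whole number
# from 1 to 100 that is divisible by 3 or 5."
total = 0                          # variable state: the running sum
for n in range(1, 101):            # iteration over 1..100
    if n % 3 == 0 or n % 5 == 0:   # conditional
        total += n

result = total
print(result)  # 2418
```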

What Are Program-Aided Language Models (PAL)?

Program-Aided Language Models (PAL) is a prompting technique introduced in 2022 by Gao et al., a team based largely at Carnegie Mellon University. The core idea is simple yet powerful: when presented with a reasoning problem, the LLM is prompted to generate Python code that solves the problem, rather than outputting the final answer directly. This generated code is then executed in a Python interpreter, and the result of that execution is taken as the final answer. The model is never asked to carry out the arithmetic itself; it only needs to translate the word problem into a correct program.

Consider the following example. Given the problem:

“Olivia has $23. She bought five bagels for $3 each. How much money does she have left?”

A Program-Aided Language Model would generate output similar to:

    # Python code
    money_initial = 23
    bagels_count = 5
    bagel_cost = 3
    money_spent = bagels_count * bagel_cost
    money_left = money_initial - money_spent
    result = money_left

The code is then executed in a sandboxed environment, and the value of result (8) is returned as the final answer. Notice that the model did not compute 23 – 15; it merely expressed the relationship symbolically. This separation of reasoning and computation is the key to PAL’s accuracy.
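To make the execution step concrete, here is a minimal sketch of how a host program might run the generated text and read back result. It uses a plain exec call into a fresh dictionary, which is convenient for a demonstration but is not a sandbox; the names generated_code, namespace, and answer are assumptions made for this example.

```python
# The text the model produced for the Olivia problem.
generated_code = """
money_initial = 23
bagels_count = 5
bagel_cost = 3
money_spent = bagels_count * bagel_cost
money_left = money_initial - money_spent
result = money_left
"""

namespace = {}                    # fresh namespace for the generated program
exec(generated_code, namespace)   # plain exec: fine for a demo, NOT a sandbox
answer = namespace["result"]      # the agreed-upon output variable
print(answer)  # 8
```

The interpreter, not the model, evaluates 23 - 5 * 3, which is the separation the paragraph above describes.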

The PAL Workflow: Step by Step

Implementing Program-Aided Language Models involves a well‑defined pipeline. Let’s break down each stage of the process.

1. Prompt Construction

The prompt given to the LLM includes few‑shot examples that demonstrate the desired behavior. Each example consists of a natural language problem statement followed by a Python code block that solves it. The final query is presented similarly, with an instruction to write Python code. The prompt explicitly tells the model to assign the final answer to a variable named result.
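A prompt of this shape could be assembled roughly as follows. This is a sketch under assumptions: the "Q:" and "# solution in Python:" markers, the instruction wording, and the function name build_pal_prompt are illustrative choices, not the exact format used in the PAL paper.

```python
# Hypothetical few-shot examples: (question, solution code) pairs.
FEW_SHOT_EXAMPLES = [
    (
        "Olivia has $23. She bought five bagels for $3 each. "
        "How much money does she have left?",
        "money_initial = 23\n"
        "bagels_count = 5\n"
        "bagel_cost = 3\n"
        "money_spent = bagels_count * bagel_cost\n"
        "result = money_initial - money_spent",
    ),
]

INSTRUCTION = (
    "Write Python code that solves the problem. "
    "Assign the final answer to a variable named result."
)

def build_pal_prompt(question, examples=FEW_SHOT_EXAMPLES):
    """Assemble instruction + worked examples + the new question."""
    parts = [INSTRUCTION, ""]
    for example_question, example_code in examples:
        parts += [f"Q: {example_question}", "# solution in Python:", example_code, ""]
    # End on the open question so the model continues with code.
    parts += [f"Q: {question}", "# solution in Python:"]
    return "\n".join(parts)

prompt = build_pal_prompt("A train travels 60 km per hour for 3 hours. How far does it go?")
print(prompt)
```

Ending the prompt on the solution marker nudges the model to continue with code rather than prose.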

2. Code Generation

The LLM processes the prompt and generates a completion. Because the examples demonstrate Python syntax, the model is likely to produce syntactically correct code. However, the generation is probabilistic, so the output must be parsed to extract the code block. Asking the model to wrap its code in specific markers (for example, a Markdown fence of three backticks tagged python) or to follow another fixed format helps isolate the executable portion.
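One way to isolate the code is a small regular expression over the completion. The sketch below assumes the model was asked to use a Markdown fence; the helper name extract_code and the fallback of treating an unfenced reply as code are choices made for this example.

```python
import re

FENCE = "`" * 3  # three backticks, built this way to keep this listing readable

# Matches a fenced block with an optional "python" or "py" tag.
CODE_BLOCK = re.compile(FENCE + r"(?:python|py)?[ \t]*\n(.*?)" + FENCE, re.DOTALL)

def extract_code(completion):
    """Return the first fenced code block, or the raw text if there is no fence."""
    match = CODE_BLOCK.search(completion)
    code = match.group(1) if match else completion
    return code.strip()

completion = (
    "Here is the program:\n"
    + FENCE + "python\n"
    + "price = 4 * 2.5\n"
    + "result = price\n"
    + FENCE + "\n"
    + "This assigns the answer to result."
)
code = extract_code(completion)
print(code)
```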

3. Secure Code Execution

Executing model-generated code carries inherent security risks. A malicious or poorly formed prompt could cause the model to generate code that deletes files, accesses the network, or consumes excessive resources. Therefore, it is critical to run the code in a strictly sandboxed environment. Docker containers, restricted Python sub-interpreters, and specialized services like E2B are commonly used. The sandbox should limit execution time and memory, and it should block dangerous modules such as os and subprocess.
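As a first layer, the generated program can at least be moved out of the host process and given a time limit. The sketch below does only that: it is not a complete sandbox, since the child process can still touch the filesystem and network, so a container or similar isolation is still needed around it. The function name run_isolated and the trick of appending a print of result as JSON are assumptions of this example.

```python
import json
import subprocess
import sys

def run_isolated(code, timeout_seconds=5):
    """Run code in a separate Python process and return the value of `result`.

    Returns (value, None) on success or (None, error_message) on failure.
    """
    # Append a line that prints `result` as JSON so the parent can read it back.
    program = code + "\nimport json as _json\nprint(_json.dumps(result))\n"
    try:
        completed = subprocess.run(
            [sys.executable, "-I", "-c", program],  # -I: isolated mode
            capture_output=True,
            text=True,
            timeout=timeout_seconds,
        )
    except subprocess.TimeoutExpired:
        return None, "timed out"
    if completed.returncode != 0:
        return None, completed.stderr.strip()
    # The value we appended is the last line of stdout.
    return json.loads(completed.stdout.strip().splitlines()[-1]), None

value, error = run_isolated("result = 23 - 5 * 3")
print(value, error)  # 8 None
```

Memory limits and module restrictions are deliberately left out here; on a real system they would come from the container runtime or operating-system controls rather than from this wrapper.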

4. Result Extraction

After the code runs successfully, the value of the designated variable (result) is retrieved from the execution environment. This value is then formatted and returned to the user as the final answer. If the code execution fails due to a syntax error or runtime exception, the system can fall back to a traditional CoT approach or prompt the model again with the error message.
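The retry path might look something like the following sketch. Here generate_code is a hypothetical callable standing in for the LLM call; it receives the previous error message so the model can correct itself. A fake model is used so the example runs offline, and plain exec again stands in for a real sandbox.

```python
import traceback

def solve_with_retries(question, generate_code, max_attempts=3):
    """Try to get a runnable program; return (answer, attempts_used).

    generate_code(question, error) is a hypothetical wrapper around the LLM
    call; `error` is None on the first attempt and the previous error message
    afterwards. Returns (None, max_attempts) if every attempt fails, at which
    point a caller could fall back to CoT prompting.
    """
    error = None
    for attempt in range(1, max_attempts + 1):
        code = generate_code(question, error)
        namespace = {}
        try:
            exec(code, namespace)  # demo only: use a real sandbox in practice
            return namespace["result"], attempt
        except Exception:
            # Keep only the last line (e.g. "NameError: ...") for the re-prompt.
            error = traceback.format_exc().strip().splitlines()[-1]
    return None, max_attempts

# Stand-in for a model: the first program has a bug, the second is fixed.
def fake_model(question, error):
    if error is None:
        return "result = total_cost / 2"   # NameError: total_cost is undefined
    return "total_cost = 30\nresult = total_cost / 2"

outcome = solve_with_retries("Split a $30 bill two ways.", fake_model)
print(outcome)  # (15.0, 2)
```

A missing result variable raises KeyError inside the try block, so it is treated like any other failed attempt.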

Why PAL Outperforms Chain‑of‑Thought on Arithmetic

The advantage of Program-Aided Language Models on numerical reasoning benchmarks is substantial. On the GSM8K dataset (grade school math word problems), the PAL paper reports an accuracy of around 72% using the Codex model, compared to roughly 65% for CoT prompting with the same model. The gap is far wider on GSM-Hard, a variant of GSM8K that the PAL authors built by replacing the numbers with larger ones. The improvement stems from two primary factors.

First, the Python interpreter performs arithmetic deterministically: integer operations are exact at any size, and floating-point operations follow well-defined rules, which removes the calculation slips that LLMs make. Second, and perhaps more importantly, translating a word problem into code forces the model to make its reasoning explicit. It must name the variables, state the relationships between them, and fix the sequence of operations. This structured representation acts as a constraint that steers the model away from plausible-sounding but logically flawed reasoning paths.

Moreover, PAL naturally handles tasks that are cumbersome in pure text. For example, problems involving repetitive operations (e.g., calculating compound interest over 10 years) can be solved with a simple loop in code. In a CoT prompt, the model would need to generate the calculation for each year sequentially, increasing the chance of an error at any step. For a deeper look at how structured reasoning enhances LLM performance, see our article on the Tree‑of‑Thought framework.
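The compound interest case can be sketched in a few lines. The principal, rate, and rounding to cents are made-up values chosen for illustration.

```python
# Hypothetical problem: "$1,000 is invested at 5% annual interest,
# compounded yearly. What is the balance after 10 years?"
balance = 1000.0
annual_rate = 0.05
years = 10

for _ in range(years):
    balance += balance * annual_rate   # one compounding step per year

result = round(balance, 2)
print(result)  # 1628.89
```

The model only has to get the loop body right once; the interpreter repeats it ten times without drift.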

Applications Beyond Arithmetic

While arithmetic word problems are the canonical use case, the potential of Program-Aided Language Models extends much further. Any task that can be solved algorithmically is a candidate. Consider these applications:

- Date and Time Calculations: “What day of the week is July 4, 2026?” The model can generate Python code using the datetime module to compute the answer reliably.

- Unit Conversions and Financial Math: Converting between currencies, calculating mortgage payments, or determining the final price after a series of percentage discounts are all tasks well‑suited for code execution.

- Data Analysis and Filtering: Given a small dataset embedded in the prompt (e.g., a JSON list of sales figures), the model can write a Python script using pandas or built‑in functions to filter, aggregate, or find the maximum value.

- Symbolic Math and Algebra: With access to the sympy library, PAL can solve equations, simplify expressions, or compute derivatives.

- API Orchestration Logic: The model can generate the logic for chaining API calls, including handling pagination or conditional requests, which can then be executed by an orchestration engine.
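Two of these applications fit in a short standard-library sketch: the date question from the list, and a series of percentage discounts. The jacket problem and its numbers are invented for this example, and Decimal is used as one reasonable way to avoid binary floating-point rounding in money calculations.

```python
from datetime import date
from decimal import Decimal

# "What day of the week is July 4, 2026?"
DAY_NAMES = ["Monday", "Tuesday", "Wednesday", "Thursday",
             "Friday", "Saturday", "Sunday"]
weekday = DAY_NAMES[date(2026, 7, 4).weekday()]   # weekday(): Monday is 0
print(weekday)  # Saturday

# Hypothetical problem: "A $200 jacket is discounted 10%, then a further 20%.
# What is the final price?"
price = Decimal("200")
for discount in (Decimal("0.10"), Decimal("0.20")):
    price *= 1 - discount          # apply each discount to the running price
result = price.quantize(Decimal("0.01"))   # round to cents
print(result)  # 144.00
```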

Challenges and Limitations of PAL

Despite its power, Program-Aided Language Models are not a panacea. Several practical challenges must be addressed.

- Syntax and Semantic Errors: The generated code is not guaranteed to be syntactically correct. The model might miss a colon, misuse indentation, or call a non‑existent function. Robust error handling and retry logic are essential.

- Security Concerns: As mentioned earlier, executing arbitrary code is dangerous. Sandboxing is mandatory, and careful consideration must be given to what Python modules and system calls are allowed.

- State and Context Management: For multi‑turn interactions, the code execution environment must be stateful or the model must regenerate the entire program. Managing state across multiple queries adds complexity.

- Ambiguity in Problem Statements: Some word problems contain implicit assumptions that are difficult to translate into precise code. The model may misinterpret the intended order of operations or fail to account for edge cases.

- Cost and Latency: Generating code and executing it in a sandbox adds overhead compared to simple text generation. For high‑throughput applications, this additional latency must be carefully evaluated.

Addressing these challenges often involves combining PAL with other techniques. For instance, a verifier module can check the output against expected formats or plausible ranges. If the result is nonsensical, the system can fall back to a different strategy or re‑prompt the model with more explicit instructions.
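A verifier of this kind can be very small. The sketch below checks only type, finiteness, and an optional range; the function name is_plausible and its parameters are assumptions, and a production check would depend on the task.

```python
import math

def is_plausible(value, minimum=None, maximum=None, require_integer=False):
    """Return True if `value` looks like a sane numeric answer."""
    # bool is a subclass of int, so exclude it explicitly.
    if isinstance(value, bool) or not isinstance(value, (int, float)):
        return False
    if isinstance(value, float) and not math.isfinite(value):
        return False
    if require_integer and value != int(value):
        return False
    if minimum is not None and value < minimum:
        return False
    if maximum is not None and value > maximum:
        return False
    return True

# "How much money does she have left?" cannot be negative or exceed $23.
print(is_plausible(8, minimum=0, maximum=23))    # True
print(is_plausible(-7, minimum=0, maximum=23))   # False
print(is_plausible(float("nan")))                # False
```

When the check fails, the caller can trigger the fallback or re-prompt described above.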

PAL in the Ecosystem of Advanced Prompting Techniques

Program-Aided Language Models do not exist in isolation. They are part of a broader movement toward augmenting LLMs with external tools and structured reasoning. Closely related approaches include:

- Tool-Augmented Language Models (TALM): Systems such as Gorilla or OpenAI’s function calling have the model emit a structured call (often JSON) that invokes a predefined API. PAL can be seen as a specialized case where the “tool” is a Python interpreter.

- ReAct (Reason + Act): This framework interleaves reasoning steps with actions. An action could be executing a piece of code, querying a search engine, or looking up a database. PAL provides the code‑execution “act” primitive.

- Code Generation and Self‑Correction: Some advanced systems combine PAL with self‑debugging. If the generated code produces an error, the error trace is fed back to the LLM, which then attempts to generate a corrected version.
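The view of PAL as one tool among many can be sketched with a tiny dispatcher. The JSON shape used here ({"tool": ..., "arguments": ...}), the registry, and the lookup_price stub are all invented for this illustration and do not reproduce any particular vendor's function-calling schema.

```python
import json

def run_python(code):
    """The PAL-style tool: execute code and return its `result` variable."""
    namespace = {}
    exec(code, namespace)  # demo only: not sandboxed
    return namespace.get("result")

def lookup_price(item):
    """A stand-in for a predefined API; the price table is made up."""
    return {"bagel": 3, "coffee": 4}[item]

# Hypothetical registry mapping tool names to callables.
TOOLS = {"python": run_python, "lookup_price": lookup_price}

def dispatch(tool_call_json):
    """Execute a call of the form {"tool": name, "arguments": {...}}."""
    call = json.loads(tool_call_json)
    return TOOLS[call["tool"]](**call["arguments"])

# The same dispatcher handles a fixed API call and free-form code.
api_answer = dispatch('{"tool": "lookup_price", "arguments": {"item": "bagel"}}')
pal_answer = dispatch('{"tool": "python", "arguments": {"code": "result = 23 - 5 * 3"}}')
print(api_answer, pal_answer)  # 3 8
```

The difference is one of expressiveness: a fixed API accepts only its declared arguments, while the Python tool accepts an arbitrary program, which is why it needs the sandboxing discussed earlier.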

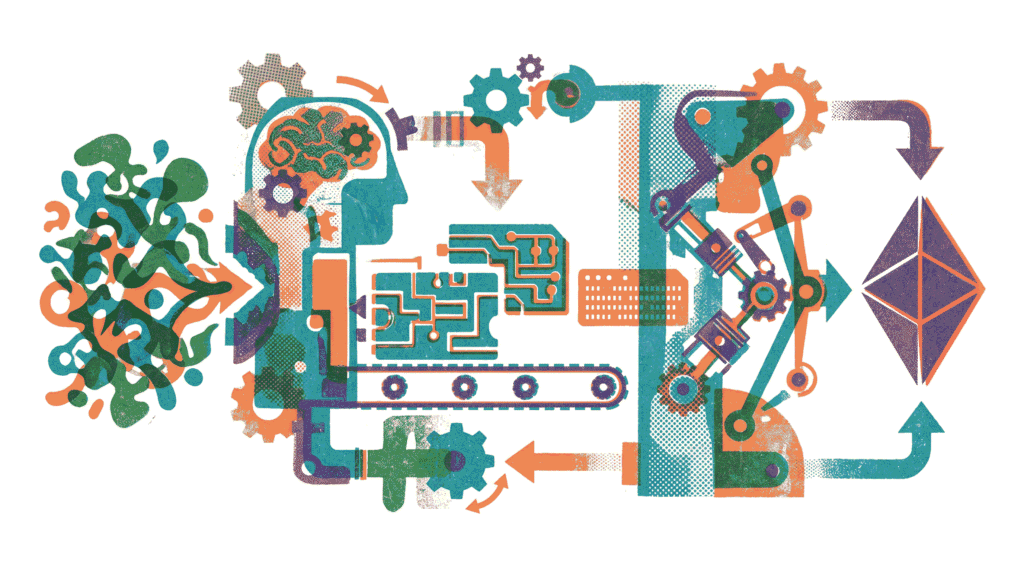

Furthermore, the principles of PAL align with the idea of neuro‑symbolic AI. The LLM acts as the neural component that understands natural language and maps it to a symbolic representation (code). The interpreter is the symbolic component that executes precise, rule‑based operations. This synergy leverages the strengths of both paradigms while mitigating their individual weaknesses.

Practical Implementation: A Simple PAL Example

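To illustrate how Program-Aided Language Models work in practice, consider the following Python sketch of a basic PAL system. It assumes the openai package (version 1.0 or later) and an API key in the OPENAI_API_KEY environment variable:

    import re

    from openai import OpenAI  # requires openai>=1.0

    client = OpenAI()  # reads OPENAI_API_KEY from the environment

    # Small whitelist of builtins the generated code may use.
    # This is NOT a real sandbox; see the note below.
    SAFE_BUILTINS = {"abs": abs, "len": len, "max": max, "min": min,
                     "range": range, "round": round, "sum": sum}

    def extract_code(text):
        """Return the first fenced code block in text, or the whole text if none."""
        match = re.search(r"`{3}(?:python)?\s*\n(.*?)`{3}", text, re.DOTALL)
        return match.group(1) if match else text

    def solve_with_pal(problem_text):
        # Zero-shot prompt for brevity; a full PAL prompt would prepend few-shot examples
        prompt = (
            f"Write Python code to solve this problem: {problem_text}\n"
            "Assign the final answer to a variable named 'result'."
        )
        response = client.chat.completions.create(
            model="gpt-4",
            messages=[{"role": "user", "content": prompt}],
            temperature=0,
        )
        code = extract_code(response.choices[0].message.content)

        # Execute with restricted builtins in a single namespace
        namespace = {"__builtins__": SAFE_BUILTINS}
        try:
            exec(code, namespace)
            return namespace.get("result")
        except Exception as e:
            print(f"Execution failed: {e}")
            return None

This simplified example omits proper sandboxing and retry logic, but it captures the essence. The extract_code function parses the model’s output to isolate the Python code block. The exec call runs with a small whitelist of builtins, so common helpers such as range and sum still work while open and __import__ are unavailable. Restricting __builtins__ in this way is easy to bypass and is not a security boundary, so a real deployment needs the stronger isolation described earlier.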

The Future of Program-Aided Language Models

As language models continue to improve in both code generation and general reasoning, the line between natural language and programming will blur further. We can anticipate several trends for Program-Aided Language Models:

- Tighter Integration with Development Environments: IDEs and notebooks will natively support PAL‑like workflows, allowing developers to describe a task in plain English and have the system propose executable code.

- PAL Beyond Python: Extending the concept to other target languages, such as SQL for database queries, spreadsheet formulas, or even robot control scripts.

- Self‑Improving Systems: By logging successful code executions and their corresponding problems, systems can build a dataset to fine‑tune models specifically for PAL tasks, reducing error rates over time.

- Autonomous Agents: PAL will be a core component of autonomous agents that need to perform precise calculations, manipulate data, or interact with APIs as part of a larger goal. Our guide on Autonomous Goal Decomposition explores how agents break down complex tasks, a process that often benefits from code‑assisted execution.

Conclusion: Code as a Reasoning Prosthetic

In summary, Program-Aided Language Models are a practical step toward more reliable AI reasoning. By offloading numerical and algorithmic computation to a deterministic interpreter, PAL sidesteps a fundamental weakness of current LLM architectures. It combines the flexible understanding of natural language with the precision of code execution. While challenges related to security, error handling, and latency remain, the core principle is sound, and it delivered strong results on math reasoning benchmarks such as GSM8K when it was introduced. As the ecosystem of tool-augmented LLMs matures, PAL is likely to remain a standard technique in the prompt engineer’s toolkit, supporting AI applications that are both flexible and accurate.

Further Reading: Deepen your understanding of prompt engineering with our articles on Chain‑of‑Thought Prompting, Tree‑of‑Thought Framework, and Autonomous Goal Decomposition. For the original research paper, see PAL: Program-Aided Language Models (arXiv).