ReAct prompting is a simple but powerful way to build better agents. It combines reasoning and action in one loop, so the model can think, act, observe, and then think again. In other words, the model does not just answer from memory. Instead, it can reason through a problem and use tools when needed. The original ReAct paper describes this as generating reasoning traces and task-specific actions in an interleaved manner, which improves interpretability and can reduce hallucination by grounding the model in external information.

What this article covers

- What ReAct prompting means.

- How the think-act-observe loop works.

- How to design strong ReAct prompts.

Why it matters

- It helps agents use tools intelligently.

- It makes reasoning easier to inspect.

- It supports better task completion.


What Is ReAct Prompting?

ReAct stands for "reasoning and acting." It is a prompting style in which the model alternates between internal reasoning and external action. The idea is simple: first the model thinks about the task, then it takes an action such as searching, querying, or calling a tool, and then it reads the result before deciding what to do next.

This matters because many language models are good at talking, but not always good at doing. A plain answer may sound confident even when it is wrong. ReAct prompting changes that by letting the agent gather fresh information from the environment. As a result, the model can correct itself step by step instead of guessing too early.

- Reasoning: the model plans and explains its next move.

- Action: the model interacts with a tool or environment.

- Observation: the model reads the result of the action.

- Iteration: the model repeats the cycle until the task is done.

If you already know Chain-of-Thought Prompting, ReAct will feel familiar at first. However, it adds a crucial extra piece: action. That extra piece is what makes ReAct especially useful for agents that need more than text generation.

Why ReAct Prompting Became Important

Traditional prompting often asks the model to answer immediately. That works for straightforward questions, but it breaks down when the task requires current information, multi-step planning, or external verification. ReAct prompting addresses this by making the model behave more like a careful worker than a fast guesser.

The original ReAct paper reports that this approach improved human interpretability and trustworthiness over methods without reasoning or acting components. On HotpotQA and FEVER, interacting with a simple Wikipedia API helped ReAct reduce the hallucination and error propagation seen in chain-of-thought reasoning. On ALFWorld and WebShop, ReAct outperformed imitation and reinforcement learning baselines by an absolute success rate of 34% and 10%, respectively, while being prompted with only one or two in-context examples.

| Approach | Main strength | Main weakness |
|---|---|---|
| Direct answer | Fast and simple | Can guess without checking |
| Chain-of-thought | Better step-by-step reasoning | Still stays inside the model |
| ReAct prompting | Reasons and acts with feedback | Can be prompt-sensitive |

This balance goes a long way toward explaining why ReAct prompting became popular. It gives the model a way to pause, check, and continue, so the agent is less likely to rush into a wrong answer when a tool can provide better evidence.

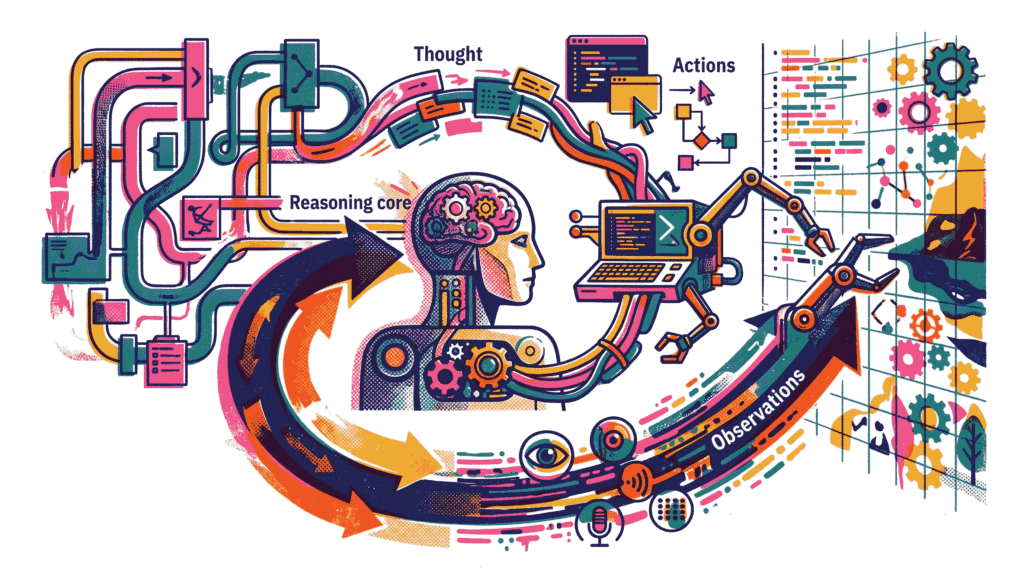

The Core Loop: Thought, Action, Observation

The heart of ReAct prompting is a repeating loop. The model writes a thought, chooses an action, reads the observation, and then updates its plan. This loop continues until the task is solved or the model decides that no more information is needed.

The loop is powerful because each step depends on real feedback. If the action returns a useful result, the model can move forward. If the result is incomplete, the model can try another action. Because of this, ReAct prompting works especially well in settings where the answer is not already sitting inside the model’s memory.

- Thought: the model plans what it should do.

- Action: the model calls a tool or query.

- Observation: the tool result comes back.

- Update: the model revises its plan using the result.

This structure is also easy for humans to follow. That matters in practice: when each thought, action, and observation appears in order, it is much easier to see where an agent went wrong.
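The loop can be sketched in a few lines of Python. This is a minimal illustration, not the paper's implementation: `react_loop` and `scripted_llm` are hypothetical names, the `Action: Name[input]` and `Final Answer:` step formats are assumptions modeled on the trace example later in this article, and `scripted_llm` replays canned responses where a real agent would call a language model. The `max_steps` cap is also an added assumption that keeps a confused agent from looping forever.

```python
import re
from typing import Callable, Dict, Iterable

# Assumed step formats: "Action: Search[query]" and "Final Answer: ...".
ACTION_RE = re.compile(r"^Action:\s*(\w+)\[(.*)\]$")
FINAL_RE = re.compile(r"^Final Answer:\s*(.+)$")


def react_loop(
    question: str,
    llm: Callable[[str], str],
    tools: Dict[str, Callable[[str], str]],
    max_steps: int = 5,
) -> str:
    """Think, act, observe, and repeat until a final answer or the step cap."""
    transcript = f"Question: {question}\n"
    for _ in range(max_steps):
        # Thought: the model sees the full transcript and writes its next step.
        step = llm(transcript).strip()
        transcript += step + "\n"
        for line in step.splitlines():
            line = line.strip()
            final = FINAL_RE.match(line)
            if final:
                return final.group(1).strip()
            action = ACTION_RE.match(line)
            if action:
                # Action: dispatch to the named tool; unknown names become an error.
                name, arg = action.group(1), action.group(2)
                tool = tools.get(name)
                observation = tool(arg) if tool else f"Unknown action: {name}"
                # Observation: append the result so the next thought can use it.
                transcript += f"Observation: {observation}\n"
                break
    return "No answer within step limit"


def scripted_llm(responses: Iterable[str]) -> Callable[[str], str]:
    """Hypothetical stand-in for a model call that replays canned steps."""
    replies = iter(responses)
    return lambda transcript: next(replies)


answer = react_loop(
    "What city hosted the event?",
    scripted_llm([
        "Thought: I should check the event page.\nAction: Search[event page]",
        "Thought: The page mentions Paris.\nFinal Answer: Paris",
    ]),
    tools={"Search": lambda query: "The page mentions Paris."},
)
print(answer)  # Paris
```

In a real deployment the model call would typically also use a stop sequence such as `"\nObservation:"`, so the model cannot write its own observations instead of waiting for the tool.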

What Makes ReAct Prompting Different from Chain-of-Thought?

Chain-of-Thought prompting helps the model reason step by step. ReAct prompting does that too, but it goes one step further by turning the reasoning into action. That difference matters a lot. A pure chain-of-thought prompt can explain a solution, yet it cannot ask the world for new information. ReAct can.

In practice, that means ReAct is better for tasks that involve search, tools, APIs, databases, or live environments. Meanwhile, chain-of-thought is often enough for purely internal reasoning tasks. Put simply, chain-of-thought thinks; ReAct thinks and does.

- Chain-of-thought: best for reasoning only.

- ReAct: best for reasoning plus interaction.

- Main benefit: less guessing, more grounding.

- Main tradeoff: more moving parts to manage.

This is why ReAct prompting often appears in agent systems rather than plain chat systems. It is built for situations where the model should not just produce an answer, but should also verify and refine it.

Why Actions Improve Reasoning

Actions improve reasoning because they let the model test its assumptions. If the model is unsure about a fact, it can search. If it needs a list of items, it can query. If it needs a calculation, it can use a tool. That makes the answer less dependent on hidden memory and more dependent on evidence.
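The calculation case is easy to make concrete. The sketch below shows one possible `Calculate` tool (a hypothetical name, not a standard API). It accepts only basic arithmetic, parsed with Python's `ast` module rather than `eval()`, and returns errors as text so that a failure becomes an observation the model can react to instead of a crash.

```python
import ast
import operator

# Arithmetic operators the hypothetical "Calculate" tool is willing to run.
_OPS = {
    ast.Add: operator.add,
    ast.Sub: operator.sub,
    ast.Mult: operator.mul,
    ast.Div: operator.truediv,
    ast.USub: operator.neg,
}


def calculate(expression: str) -> str:
    """Evaluate plain arithmetic without eval() and return the result as text."""

    def _eval(node: ast.AST) -> float:
        # Only int/float literals; bool is excluded even though it subclasses int.
        if isinstance(node, ast.Constant) and type(node.value) in (int, float):
            return node.value
        if isinstance(node, ast.BinOp) and type(node.op) in _OPS:
            return _OPS[type(node.op)](_eval(node.left), _eval(node.right))
        if isinstance(node, ast.UnaryOp) and type(node.op) in _OPS:
            return _OPS[type(node.op)](_eval(node.operand))
        raise ValueError("unsupported expression")

    try:
        return str(_eval(ast.parse(expression, mode="eval").body))
    except (SyntaxError, ValueError, ZeroDivisionError) as exc:
        # Failures come back as observations so the model can rephrase and retry.
        return f"Error: {exc}"


TOOLS = {"Calculate": calculate}
print(TOOLS["Calculate"]("2 * (3 + 4)"))  # 14
print(TOOLS["Calculate"]("1 / 0"))        # an "Error: ..." observation
```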

The original ReAct paper says the reasoning traces help the model induce, track, and update action plans and handle exceptions, while actions allow the model to interface with external sources such as knowledge bases or environments.

This is especially valuable for multi-hop questions, where one step reveals a clue and the next step uses that clue to find another. ReAct prompting works well whenever each step depends on the result of the previous one.

ReAct Prompt Structure

A ReAct prompt usually contains a few examples of task-solving trajectories. The examples show how to think, what action to take, and how to interpret the observation. The ReAct project site describes these prompts as few-shot trajectories with human-written reasoning traces, actions, and environment observations. It also notes that the format is intuitive and flexible to design.

Question: What city hosted the event?
Thought: I should check the event page.
Action: Search[event page]
Observation: The page mentions Paris.
Thought: The city is likely Paris, but I should verify it.
Action: Search[official source]
Observation: The official source confirms Paris.
Final Answer: Paris

The exact formatting can vary, but the pattern is the same. A thought leads to an action. The action leads to an observation. The observation leads to a better thought. That is why ReAct prompting is easy to understand once you see one clean example.
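Because each step starts with a fixed label, a trace like this is also easy to parse. A minimal sketch, assuming each step sits on its own line, uses exactly the labels in the example above, and writes actions as `Name[input]`; `parse_trace` and `parse_action` are hypothetical helpers, and real agents often need more forgiving parsing because models do not always follow the format.

```python
import re
from typing import List, Tuple

# Step labels from the example trace; other lines are ignored.
STEP_RE = re.compile(r"^(Question|Thought|Action|Observation|Final Answer):\s*(.*)$")
ACTION_RE = re.compile(r"^(\w+)\[(.*)\]$")


def parse_trace(trace: str) -> List[Tuple[str, str]]:
    """Split a ReAct trace into (label, content) pairs, skipping other lines."""
    steps = []
    for line in trace.splitlines():
        match = STEP_RE.match(line.strip())
        if match:
            steps.append((match.group(1), match.group(2).strip()))
    return steps


def parse_action(content: str) -> Tuple[str, str]:
    """Turn 'Search[event page]' into ('Search', 'event page')."""
    match = ACTION_RE.match(content.strip())
    if match is None:
        raise ValueError(f"malformed action: {content!r}")
    return match.group(1), match.group(2)


trace = """Question: What city hosted the event?
Thought: I should check the event page.
Action: Search[event page]
Observation: The page mentions Paris.
Final Answer: Paris"""

for label, content in parse_trace(trace):
    if label == "Action":
        print(parse_action(content))  # ('Search', 'event page')
```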

- Few-shot examples: show the model what good behavior looks like.

- Reasoning traces: make the process visible.

- Tool actions: connect the model to the outside world.

- Observations: feed the result back into the loop.

Because the prompt is so structured, it becomes easier to control the agent’s behavior. However, that structure also means the prompt designer must be thoughtful. A weak example can lead the model in the wrong direction.
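Assembling such a prompt is mostly string work: a short instruction block, the few-shot trajectories, and the new question left open at `Thought:` so the model continues the pattern. In the sketch below, `build_react_prompt`, the instruction wording, and the exemplar are hypothetical; they are not the prompts used in the ReAct paper.

```python
from typing import List

# A hypothetical few-shot exemplar in the Thought/Action/Observation format.
EXAMPLE = """Question: What city hosted the event?
Thought: I should check the event page.
Action: Search[event page]
Observation: The page mentions Paris.
Thought: The city is likely Paris, but I should verify it.
Action: Search[official source]
Observation: The official source confirms Paris.
Final Answer: Paris"""


def build_react_prompt(question: str, examples: List[str], actions: List[str]) -> str:
    """Assemble instructions, few-shot trajectories, and the new question."""
    instructions = (
        "Solve the question by interleaving Thought, Action, and Observation steps.\n"
        f"Allowed actions: {', '.join(actions)}. Write actions as Name[input].\n"
        "End with 'Final Answer: <answer>'."
    )
    shots = "\n\n".join(examples)
    # Ending on "Thought:" invites the model to continue the pattern.
    return f"{instructions}\n\n{shots}\n\nQuestion: {question}\nThought:"


print(build_react_prompt("Who wrote the book behind the movie?", [EXAMPLE], ["Search"]))
```

Listing the allowed actions explicitly in the instructions is one way to keep the action space narrow, which later sections recommend.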

Where ReAct Prompting Works Best

ReAct prompting is especially useful when the agent must interact with the world. That includes question answering with external sources, task-oriented dialogue, planning in environments, and browser-like workflows. The original paper demonstrates this on HotpotQA, FEVER, ALFWorld, and WebShop.

| Use case | Why ReAct helps | Typical tool |
|---|---|---|
| Question answering | Checks facts before answering | Search or knowledge base |
| Fact verification | Looks for evidence step by step | Wikipedia or other sources |
| Task-oriented dialogue | Keeps track of goals and slots | APIs or structured data tools |
| Interactive planning | Adapts plans after each step | Environment or simulator |

For planning-heavy workflows, ReAct can also connect naturally with Autonomous Goal Decomposition. Both ideas help break a big task into smaller steps, and then execute those steps in a controlled way.

A Simple Example of ReAct Prompting

Imagine a user asks, “Who wrote the book that inspired the movie The Wizard of Oz?” A direct answer jumps straight to a name without checking the intermediate facts. ReAct prompting encourages the model to work through the chain instead: identify the book, find its author, and then answer, using a search tool to verify each step if needed.

- Thought: I need to identify the original book first.

- Action: Search for the book tied to the movie.

- Observation: The movie is based on The Wonderful Wizard of Oz.

- Thought: Now I need the author of that book.

- Action: Search for the author of The Wonderful Wizard of Oz.

- Observation: The book was written by L. Frank Baum.

- Final answer: L. Frank Baum.

This style looks simple, but it is powerful. The model does not try to jump from the question to the final answer in one leap. Instead, it takes smaller steps and checks each one. That reduces the chance of a wrong leap.
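The two hops can be simulated end to end. In this sketch the model's decisions are hard-coded and `search` is a dictionary lookup holding only the two facts stated in the example (both hypothetical stand-ins for a real model and search tool). The point is that the second query is built from the first observation, which is exactly the dependency ReAct is designed to handle.

```python
from typing import List, Optional, Tuple

# Toy stand-in for a search tool, holding only the two facts from the example.
KB = {
    "book behind the movie The Wizard of Oz":
        "The movie is based on The Wonderful Wizard of Oz.",
    "author of The Wonderful Wizard of Oz":
        "The book was written by L. Frank Baum.",
}


def search(query: str) -> str:
    return KB.get(query, "No results.")


def two_hop(movie: str) -> Tuple[Optional[str], List[Tuple[str, str]]]:
    """Answer 'who wrote the book behind <movie>?' with two dependent searches."""
    trace: List[Tuple[str, str]] = []
    query = f"book behind the movie {movie}"
    trace += [("Thought", "I need to identify the original book first."),
              ("Action", f"Search[{query}]")]
    obs = search(query)
    trace.append(("Observation", obs))
    if obs == "No results.":
        return None, trace
    # Hop 2 is built from hop 1's observation, not from the question alone.
    book = obs.removeprefix("The movie is based on ").rstrip(".")
    query = f"author of {book}"
    trace += [("Thought", "Now I need the author of that book."),
              ("Action", f"Search[{query}]")]
    obs = search(query)
    trace.append(("Observation", obs))
    if obs == "No results.":
        return None, trace
    author = obs.removeprefix("The book was written by ").rstrip(".")
    trace.append(("Final Answer", author))
    return author, trace


answer, steps = two_hop("The Wizard of Oz")
for label, content in steps:
    print(f"{label}: {content}")
```

The string slicing is deliberately crude (and `str.removeprefix` needs Python 3.9 or later); in a real agent the language model, not hand-written parsing, would extract the book title from the observation.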

ReAct Prompting and Agent Design

ReAct prompting is not just a prompt format. It is also an agent design pattern. A ReAct-style agent usually has a controller, a reasoning step, a tool-selection step, and an observation step. Together, these pieces create a loop that can continue until the task is done.

In broader agent systems, this pattern often pairs well with Multi-Agent Systems. One agent can reason, another can search, and another can verify, and ReAct can supply the basic action loop that each of them runs.

- Controller: decides the next step.

- Reasoner: forms the internal plan.

- Tool layer: performs the action.

- Observer: reads the result and updates the plan.

This structure is one reason ReAct is useful in modern agent workflows. It gives the model a disciplined way to move from uncertainty to action, and then back to reasoning again.
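One way to express these roles in code is sketched below. The class names mirror the list above but are hypothetical, as are `toy_reasoner` (a rule-based stand-in for the language model), the `Finish` action that ends a run, and the observation length limit.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional, Tuple

# (thought, action name, action input); "Finish" ends the run with its input.
Step = Tuple[str, str, str]


@dataclass
class ToolLayer:
    tools: Dict[str, Callable[[str], str]]

    def run(self, name: str, arg: str) -> str:
        tool = self.tools.get(name)
        return tool(arg) if tool else f"Error: unknown tool {name}"


@dataclass
class Observer:
    max_chars: int = 200  # assumption: trim long tool output before reuse

    def record(self, history: List[str], result: str) -> None:
        history.append(f"Observation: {result[: self.max_chars]}")


@dataclass
class Controller:
    reasoner: Callable[[List[str]], Step]  # stands in for the model call
    tool_layer: ToolLayer
    observer: Observer
    max_steps: int = 5
    history: List[str] = field(default_factory=list)

    def run(self, question: str) -> Optional[str]:
        """Decide the next step until the reasoner finishes or the budget runs out."""
        self.history.append(f"Question: {question}")
        for _ in range(self.max_steps):
            thought, name, arg = self.reasoner(self.history)
            self.history.append(f"Thought: {thought}")
            if name == "Finish":
                return arg
            self.history.append(f"Action: {name}[{arg}]")
            self.observer.record(self.history, self.tool_layer.run(name, arg))
        return None


def toy_reasoner(history: List[str]) -> Step:
    """Hypothetical rule-based reasoner: search once, then finish."""
    last = history[-1]
    if last.startswith("Observation: "):
        return ("I have the evidence.", "Finish", last[len("Observation: "):])
    return ("I should search.", "Search", history[0][len("Question: "):])


controller = Controller(toy_reasoner, ToolLayer({"Search": lambda q: "Paris"}), Observer())
print(controller.run("What city hosted the event?"))  # Paris
```

Keeping the roles separate makes it possible to swap the reasoner (a different model or prompt) without touching tool execution or observation handling.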

Strengths of ReAct Prompting

ReAct prompting has several strengths. First, it is more grounded than pure text generation because the model can inspect the environment. Second, it is easier to debug because the thought-action trail is visible. Third, it can be more trustworthy when the model needs fresh evidence.

- Grounding: the model can verify facts externally.

- Interpretability: humans can inspect the trajectory.

- Flexibility: it works with many kinds of tools.

- Correction: the model can update its plan after each observation.

The original paper highlights these benefits. It reports human-like task-solving trajectories that are more interpretable than baselines without reasoning traces, along with stronger performance on several benchmarks, especially in environments where the model has to interact with external information.

Limitations and Brittleness

ReAct prompting is useful, but it is not perfect. Later research shows that prompt design can strongly affect performance. In one study, On the Brittle Foundations of ReAct Prompting, the authors found that performance was minimally influenced by interleaving reasoning traces with action execution, and was driven largely by the similarity between the example tasks and the query, along with other prompt design choices. They describe ReAct as brittle under small perturbations to the prompt.

That means ReAct prompting should be designed carefully. A good example can help a lot. A weak example can hurt performance. Therefore, the method is useful, but it depends on disciplined prompt engineering.

- Prompt sensitivity: performance can change with small edits.

- Example dependence: the quality of in-context examples matters.

- Not always enough: some tasks may need better planning or memory.

- Human burden: designers may need task-specific examples.

This is where careful engineering matters. If the agent must work in a stable production setting, you may need more than a single prompt pattern. A combination of ReAct prompting, evaluation, and guardrails is often stronger than ReAct alone.
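As one example of such guardrails, the sketch below wraps the tool layer with an allowlist, an input-length limit, and a per-task budget on tool calls. `GuardedTools`, the `DeleteFile` action, and the default limits are hypothetical choices for illustration; suitable values depend on the application.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Set


@dataclass
class GuardedTools:
    """Hypothetical guardrail layer between the agent and its tools."""

    tools: Dict[str, Callable[[str], str]]
    allowed: Set[str]
    max_calls: int = 10
    max_arg_len: int = 200
    calls: int = 0

    def run(self, name: str, arg: str) -> str:
        # Refusals are returned as observations, not raised, so the agent
        # can see why an action failed and choose a different one.
        if name not in self.allowed or name not in self.tools:
            return f"Refused: {name} is not an approved action."
        if len(arg) > self.max_arg_len:
            return "Refused: action input is too long."
        if self.calls >= self.max_calls:
            return "Refused: tool-call budget exhausted."
        self.calls += 1
        return self.tools[name](arg)


guard = GuardedTools(
    tools={"Search": lambda q: f"results for {q}", "DeleteFile": lambda p: "deleted"},
    allowed={"Search"},
    max_calls=1,
)
print(guard.run("DeleteFile", "/tmp/data"))  # Refused: DeleteFile is not an approved action.
print(guard.run("Search", "Paris"))          # results for Paris
print(guard.run("Search", "Paris again"))    # Refused: tool-call budget exhausted.
```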

ReAct Prompting and Human-in-the-Loop Control

Because ReAct traces are inspectable, they fit well with human review. A human can look at the reasoning, check the action, and step in if the plan looks weak. That makes the approach helpful for systems that need oversight, safety, or approval.

For that reason, ReAct prompting pairs naturally with Human-in-the-loop Governance. The model can propose, but a human can still validate. That balance is especially useful in sensitive domains where trust matters more than speed alone.
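In a ReAct agent, a natural place for that review is between the proposed action and its execution. A minimal sketch, assuming a hypothetical `SendEmail` action counts as sensitive and a console prompt stands in for whatever review interface a real system would use; `with_approval` and `console_approval` are illustrative names.

```python
from typing import Callable, Set


def with_approval(
    name: str,
    tool: Callable[[str], str],
    sensitive: Set[str],
    approve: Callable[[str, str], bool],
) -> Callable[[str], str]:
    """Wrap a tool so that sensitive actions run only after a reviewer approves."""

    def wrapped(arg: str) -> str:
        if name in sensitive and not approve(name, arg):
            # The rejection becomes an observation the agent can reason about.
            return f"Blocked: a reviewer rejected {name}[{arg}]."
        return tool(arg)

    return wrapped


def console_approval(name: str, arg: str) -> bool:
    """Ask a human at the terminal; anything other than 'y' counts as a no."""
    return input(f"Allow {name}[{arg}]? [y/N] ").strip().lower() == "y"


SENSITIVE = {"SendEmail"}
send_email = with_approval("SendEmail", lambda body: f"sent: {body}", SENSITIVE, console_approval)
search = with_approval("Search", lambda q: f"results for {q}", SENSITIVE, console_approval)
```

Low-risk actions such as `search` pass straight through, so the human is only asked about the steps that actually need oversight.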

ReAct Prompting and Agentic RAG

ReAct prompting also works well with retrieval-augmented systems. If an agent must search documents, inspect sources, and refine its answer, the reasoning-action loop becomes very valuable. It allows the agent to retrieve, evaluate, and continue instead of retrieving once and hoping for the best.

That is why ReAct fits naturally with Agentic RAG. Retrieval brings in evidence, and ReAct helps the model decide what to do with that evidence. Together, they can form a self-correcting information loop.
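To show how retrieval plugs into the loop, the sketch below exposes a toy retriever as a single action. It ranks a small, hypothetical in-memory corpus by keyword overlap (stopwords included, so expect some noise). A real Agentic RAG system would substitute a proper search index or vector store, but the contract is the same: a query goes in, and a text observation with source ids comes back for the next thought to evaluate.

```python
import re
from typing import Dict, Set


def tokens(text: str) -> Set[str]:
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, docs: Dict[str, str], k: int = 2) -> str:
    """Toy keyword-overlap retriever, exposed to the agent as a Retrieve action."""
    q = tokens(query)
    # Highest overlap first; ties broken by document id for stable output.
    ranked = sorted(docs, key=lambda doc_id: (-len(q & tokens(docs[doc_id])), doc_id))
    hits = [doc_id for doc_id in ranked if q & tokens(docs[doc_id])][:k]
    if not hits:
        # An empty result is still an observation: it tells the agent to rephrase.
        return "No matching documents. Try a different query."
    # Document ids let later thoughts inspect or cite specific sources.
    return "\n".join(f"[{doc_id}] {docs[doc_id]}" for doc_id in hits)


DOCS = {  # hypothetical corpus
    "d1": "The event was hosted in Paris.",
    "d2": "Tickets went on sale in March.",
    "d3": "The venue seats a large crowd.",
}
print(retrieve("Where was the event hosted?", DOCS))
```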

A Practical Prompting Workflow

If you want to design a strong ReAct prompt, start with a clear task and a few good examples. Then define the actions the model can take. After that, specify how observations should be handled. Finally, test the prompt both on tasks similar to your examples and on tasks that differ slightly from them.

- Define the goal: say exactly what the agent should solve.

- List allowed actions: search, lookup, query, calculate, or inspect.

- Write good examples: show the thought-action-observation style.

- Check the output: make sure the agent stays on task.

- Tune the prompt: improve examples if the agent drifts.

- Review failures: learn from where the loop breaks.

This process sounds simple, yet it saves a lot of time later. Because ReAct prompting is sensitive to examples, testing the prompt early is much better than discovering weak behavior after deployment.
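That testing step can start as a small harness that runs every prompt variant over the same cases, including some close to the few-shot examples and some deliberately reworded. In the sketch, `evaluate` and `fake_agent` are hypothetical; `fake_agent` replaces a real agent run, and case-insensitive exact match is a deliberately simple scoring rule that many tasks will need to replace.

```python
from typing import Callable, Dict, List, Tuple


def evaluate(
    agent: Callable[[str, str], str],
    prompts: Dict[str, str],
    cases: List[Tuple[str, str]],
) -> Dict[str, float]:
    """Exact-match accuracy (case-insensitive) of each prompt variant on shared cases."""
    scores: Dict[str, float] = {}
    for name, prompt in prompts.items():
        correct = sum(
            agent(prompt, question).strip().lower() == expected.strip().lower()
            for question, expected in cases
        )
        scores[name] = correct / len(cases)
    return scores


def fake_agent(prompt: str, question: str) -> str:
    """Hypothetical stand-in for running a full ReAct agent with `prompt`."""
    return "Paris" if "verify" in prompt else "London"


cases = [
    ("What city hosted the event?", "Paris"),      # close to the few-shot example
    ("Where did the event take place?", "paris"),  # slightly reworded
]
prompts = {
    "v1": "Answer directly.",
    "v2": "Search, then verify before answering.",
}
print(evaluate(fake_agent, prompts, cases))  # {'v1': 0.0, 'v2': 1.0}
```

Re-running the same harness after every prompt edit is a cheap way to catch the kind of sensitivity to small changes described in the limitations section.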

A Quick Comparison With Other Agent Ideas

ReAct prompting is one way to build agents, but it is not the only one. Some workflows use direct tool calling. Others use planning layers. Others use multiple specialized agents. ReAct sits in the middle as a flexible pattern for step-by-step grounded reasoning.

If you are exploring broader agent architectures, Multi-Agent Systems and Program-Aided Language Models are useful comparisons. ReAct focuses on the loop between thought and action. PAL focuses more on solving through code. Multi-agent systems focus on delegation and collaboration.

| Pattern | Best for | Main idea |
|---|---|---|
| ReAct prompting | Reasoning + tool use | Think, act, observe, repeat |
| PAL | Code-based problem solving | Use code as the reasoning vehicle |
| Multi-agent systems | Collaborative workflows | Split work across agents |

Best Practices for ReAct Prompting

Good ReAct prompts are clear, constrained, and easy to inspect. The more uncertain the environment, the more valuable the loop becomes. However, the prompt still needs discipline.

- Use simple actions: keep the tool set narrow at first.

- Write realistic examples: match the actual task domain.

- Keep thoughts concise: short plans are easier to follow.

- Verify observations: do not trust a single tool blindly.

- Stop when solved: avoid unnecessary loops.

- Review failures: fix weak examples and confusing actions.

The later critique of ReAct prompting is useful here. It suggests that example quality and prompt similarity can matter more than the interleaving itself. So, strong examples are not optional; they are part of the method.
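The "stop when solved" practice in the list above can also be backed by a check in the controller rather than left entirely to the prompt. A minimal sketch, assuming actions are logged as (name, input) pairs; `should_stop` and its thresholds are hypothetical and only catch the simplest failure, an agent reissuing the same call.

```python
from typing import List, Tuple

Action = Tuple[str, str]  # (tool name, input)


def should_stop(actions: List[Action], max_steps: int = 8, max_repeats: int = 2) -> bool:
    """Stop on budget exhaustion or when the latest action keeps recurring."""
    if len(actions) >= max_steps:
        return True
    if not actions:
        return False

    def key(action: Action) -> Action:
        # Treat "Search[Paris]" and "Search[ paris ]" as the same action.
        return action[0], action[1].strip().lower()

    latest = key(actions[-1])
    return sum(key(a) == latest for a in actions) > max_repeats


history = [("Search", "event page"), ("Search", "Event page"), ("Search", "event page ")]
print(should_stop(history))  # True: the same search was issued three times
```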

Common Mistakes to Avoid

Even good teams make avoidable mistakes with ReAct prompting. The most common issue is writing examples that are too vague. Another issue is giving too many actions, which makes the agent wander. A third issue is failing to check whether the prompt still works on new tasks.

- Too much freedom: the model may choose bad actions.

- Too many steps: the loop can become slow or noisy.

- Poor examples: weak examples teach weak behavior.

- No validation: the prompt may work in one case and fail in another.

- Ignoring safety: actions should stay within approved boundaries.

The safest habit is to treat ReAct prompting like a small system, not a magic phrase. Design it carefully. Test it carefully. Then, revise it when the environment changes.

Conclusion

ReAct prompting is a practical way to combine reasoning and action inside an agent. The model thinks through a task, takes an action, observes the result, and then updates its plan. The original ReAct paper shows that this can improve interpretability and task performance, especially when the task needs external information. At the same time, later research shows that the method can be brittle if the prompt is not designed well.

That balance is the key lesson. ReAct prompting is not just about telling a model to think aloud. It is about building a grounded loop where reasoning guides action and action improves reasoning. When the prompt is clean and the tools are useful, the result can be very effective.

To go deeper, explore Chain-of-Thought Prompting, Autonomous Goal Decomposition, and Agentic RAG. Together, they show how reasoning, planning, and tool use can work as one system.

Further reading: Review the official ReAct project site, the original ReAct paper, and the later critique On the Brittle Foundations of ReAct Prompting.