The Magic of Averages: Why Statistics Works

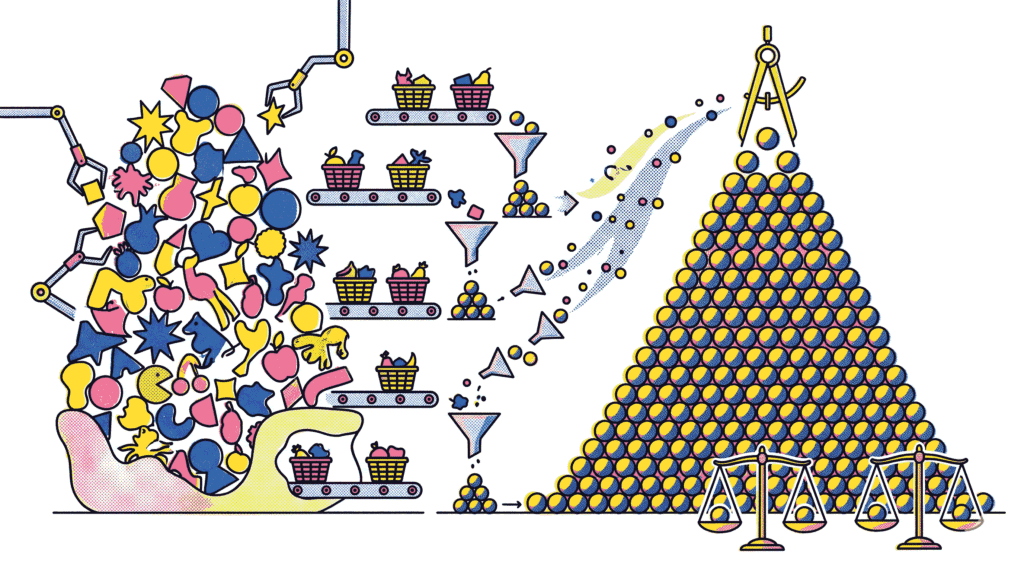

Have you ever wondered how pollsters can predict election outcomes by surveying only a few thousand people? Or how quality control engineers can assess a massive production batch by testing just a handful of items? The answer lies in one of the most profound and elegant results in all of mathematics: the Central Limit Theorem (CLT). This theorem provides the theoretical foundation for much of statistical inference. In essence, it says that when observations are independent and the population has finite variance, the distribution of sample means approaches a normal distribution as the sample size grows, regardless of the shape of the original population. Consequently, the Central Limit Theorem lets us make reliable, quantified predictions about populations using only sample data. In this guide, we will unpack how the CLT works, explore its critical assumptions, and show why it is indispensable for everything from A/B testing to clinical trials.

What Is the Central Limit Theorem?

The Central Limit Theorem states that if you take sufficiently large random samples from a population with finite variance, whether that population is normally distributed, skewed, or even bimodal, the distribution of the sample means will approximate a normal (Gaussian) distribution. Furthermore, the mean of this sampling distribution equals the population mean, and its standard deviation (called the standard error) is the population standard deviation divided by the square root of the sample size.

To illustrate, imagine a highly skewed population: the annual income of residents in a major city. Most people earn moderate incomes, but a small fraction earns millions, creating a long right tail. If you were to take a single random sample of 1,000 residents and calculate the average income, that single value tells you little about the distribution of the statistic itself. However, if you were to repeat this sampling process thousands of times—each time drawing a new sample of 1,000 and recording the mean—the histogram of those thousands of sample means would form a beautiful, symmetric bell curve. This remarkable phenomenon is the essence of the Central Limit Theorem.
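The repeated-sampling thought experiment above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the "income" population is simulated as a log-normal distribution with made-up parameters (`MU`, `SIGMA`), not real income data, and the number of replicates is arbitrary.

```python
import math
import random
import statistics

random.seed(0)  # fixed seed so the sketch is reproducible

# Hypothetical right-skewed "income" population: log-normal, assumed parameters.
MU, SIGMA = 10.5, 0.9
POP_MEAN = math.exp(MU + SIGMA**2 / 2)
POP_SD = POP_MEAN * math.sqrt(math.exp(SIGMA**2) - 1)

def sample_mean(n):
    """Mean income of one random sample of n simulated residents."""
    return statistics.fmean(random.lognormvariate(MU, SIGMA) for _ in range(n))

N, REPLICATES = 1000, 2000
means = [sample_mean(N) for _ in range(REPLICATES)]  # one mean per repeated sample

# Theoretical standard error: sigma / sqrt(n)
standard_error = POP_SD / math.sqrt(N)

# Share of sample means within 1.96 standard errors of the population mean.
within = sum(abs(m - POP_MEAN) <= 1.96 * standard_error for m in means) / REPLICATES

print(f"population mean        : {POP_MEAN:,.0f}")
print(f"mean of sample means   : {statistics.fmean(means):,.0f}")
print(f"theoretical std. error : {standard_error:,.0f}")
print(f"SD of sample means     : {statistics.stdev(means):,.0f}")
print(f"share within 1.96 SE   : {within:.3f}")
```

If the normal approximation is doing its job, the mean of the sample means should sit close to the population mean, their spread should be close to σ / √n, and roughly 95% of them should fall within 1.96 standard errors of the population mean.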

This principle is what allows statisticians to use the normal distribution as a universal approximation for sampling distributions. It does not matter if the underlying data looks nothing like a bell curve; the Central Limit Theorem assures us that the means of those samples will behave predictably. For a deeper understanding of how statistical inference relies on such properties, you might find our article on Understanding P-Values and Type I/II Errors helpful.

The Three Pillars of the Central Limit Theorem

To fully grasp the Central Limit Theorem, it is essential to understand its three key components. These pillars define the behavior of the sampling distribution.

- Independence: The samples must be drawn independently from the population. This means the selection of one observation should not influence the selection of another. In practice, simple random sampling usually satisfies this condition.

- Sample Size (n): The theorem holds asymptotically, meaning the approximation to normality improves as the sample size increases. A common rule of thumb is that a sample size of n ≥ 30 is sufficient for the CLT to apply, provided the population distribution is not extremely skewed.

- Finite Variance: The population from which samples are drawn must have a finite mean and a finite variance. This excludes certain pathological distributions, such as the Cauchy distribution, whose mean and variance are both undefined and for which the CLT does not hold.

When these conditions are met, the Central Limit Theorem provides a powerful shortcut. Instead of deriving a unique sampling distribution for every possible population shape, we can rely on the familiar properties of the normal curve. This is why you see z‑tables and t‑tables used so pervasively across diverse scientific disciplines.

Why Sample Size Matters: The Role of ‘n’

The sample size, denoted as ‘n’, plays a starring role in the Central Limit Theorem. Specifically, the spread of the sampling distribution—quantified by the standard error—shrinks as the sample size grows. Mathematically, the standard error of the mean is σ / √n, where σ is the population standard deviation. This inverse square root relationship has profound implications.

For example, to cut the margin of error in half, you do not need to double the sample size; you need to quadruple it. This explains why polling organizations face diminishing returns. A survey of 400 people might yield a margin of error of ±5%, but achieving ±2.5% would require surveying roughly 1,600 people. Moreover, the rate at which the sampling distribution converges to normality depends on the shape of the original population. If the original population is symmetric and unimodal, convergence is rapid—often with n as small as 15. On the other hand, a heavily skewed population might require n of 50 or more before the distribution of sample means appears sufficiently bell‑shaped.
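The polling arithmetic above can be checked with a short calculation. The sketch assumes a 95% confidence level (critical value 1.96) and a yes/no survey question, where the worst-case standard deviation occurs at a proportion of 0.5; the function name `margin_of_error` is introduced here for illustration.

```python
import math

Z_95 = 1.96  # critical value for 95% confidence (assumed confidence level)

def margin_of_error(n, p=0.5):
    """Approximate 95% margin of error for a proportion estimated from n respondents.

    p = 0.5 is the worst case, because p * (1 - p) is largest there.
    """
    return Z_95 * math.sqrt(p * (1 - p) / n)

for n in (400, 1600, 6400):
    print(f"n = {n:>5}: margin of error is about {100 * margin_of_error(n):.2f}%")
```

Each fourfold increase in the sample size halves the margin of error, which is the inverse square root relationship at work.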

Practical Applications of the Central Limit Theorem

The Central Limit Theorem is far more than a theoretical curiosity; it is the engine that powers countless real‑world applications. Below are some of the most important ways it is used.

1. Constructing Confidence Intervals

Because the CLT tells us that sample means follow an approximately normal distribution, we can construct confidence intervals around our estimates. For instance, a 95% confidence interval for a population mean is calculated as: sample mean ± 1.96 × (standard error). This interval provides a range of plausible values for the true population parameter. Without the Central Limit Theorem, calculating such intervals would require knowing the exact shape of the population distribution, which is rarely feasible.
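As a minimal sketch of the interval formula above, the function below computes sample mean ± 1.96 × standard error, using the sample standard deviation as a stand-in for the unknown population value. The wait-time data are simulated and purely hypothetical.

```python
import math
import random
import statistics

def mean_confidence_interval(data, z=1.96):
    """Normal-approximation confidence interval: sample mean +/- z * standard error."""
    n = len(data)
    mean = statistics.fmean(data)
    standard_error = statistics.stdev(data) / math.sqrt(n)  # sample SD estimates sigma
    return mean - z * standard_error, mean + z * standard_error

# Hypothetical data: 200 simulated call-center wait times (minutes), true mean 4.
random.seed(7)
wait_times = [random.expovariate(1 / 4) for _ in range(200)]

low, high = mean_confidence_interval(wait_times)
print(f"95% CI for the mean wait time: ({low:.2f}, {high:.2f}) minutes")
```

The interval is only as trustworthy as the normal approximation behind it, so it is best suited to reasonably large samples.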

2. Hypothesis Testing and P-Values

Many classical hypothesis tests, including the z‑test and, for larger samples, the t‑test, lean on the CLT. These tests compare an observed sample statistic to a theoretical sampling distribution that is assumed to be normal or, for the t‑test, close to it. The p‑value, the probability of observing a result at least as extreme as the one obtained if the null hypothesis is true, is calculated from this reference distribution. As we explored in our guide on Understanding P-Values and Type I/II Errors, the validity of these calculations hinges on the sampling distribution being approximately normal, which is what the CLT provides when samples are large enough.
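To make the p-value calculation concrete, here is a small sketch of a two-sided z‑test. It assumes the population standard deviation is known, which is rarely true in practice, and the numbers in the example are invented for illustration.

```python
import math
from statistics import NormalDist

def z_test_two_sided(sample_mean, mu0, sigma, n):
    """Two-sided z-test of H0: population mean == mu0, with known sigma."""
    standard_error = sigma / math.sqrt(n)
    z = (sample_mean - mu0) / standard_error
    # Probability of a standardized result at least this extreme, in either tail.
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value

# Hypothetical example: 36 observations averaging 104.9, testing mu0 = 100, sigma = 15.
z, p = z_test_two_sided(sample_mean=104.9, mu0=100, sigma=15, n=36)
print(f"z = {z:.2f}, p-value = {p:.3f}")
```

Here the sample mean lies 1.96 standard errors from the hypothesized value, so the p-value lands right at the conventional 0.05 threshold.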

3. Quality Control and Process Monitoring

In manufacturing, control charts are used to monitor whether a process is stable over time. These charts plot sample means and rely on the CLT to set upper and lower control limits. If a sample mean falls outside these limits, it signals that the process may have shifted, prompting investigation. This application of the Central Limit Theorem helps ensure consistent product quality without inspecting every single item.
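A simple version of this idea can be sketched as follows. The sketch assumes the conventional three-standard-error limits and a process whose long-run mean and standard deviation are already known; the measurements and function names are hypothetical.

```python
import math

def xbar_control_limits(process_mean, process_sd, subgroup_size, k=3.0):
    """Lower and upper control limits for subgroup means: mean +/- k * sigma / sqrt(n)."""
    standard_error = process_sd / math.sqrt(subgroup_size)
    return process_mean - k * standard_error, process_mean + k * standard_error

def out_of_control(subgroup_means, lcl, ucl):
    """Indices of subgroup means that fall outside the control limits."""
    return [i for i, m in enumerate(subgroup_means) if not lcl <= m <= ucl]

# Hypothetical process: target 50.0 mm, SD 2.0 mm, subgroups of 4 items.
lcl, ucl = xbar_control_limits(process_mean=50.0, process_sd=2.0, subgroup_size=4)
flagged = out_of_control([50.2, 49.1, 53.4, 50.0], lcl, ucl)
print(f"control limits: ({lcl}, {ucl}); subgroups to investigate: {flagged}")
```

With these numbers the limits are 47.0 and 53.0, so only the third subgroup (index 2) is flagged.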

4. Machine Learning Model Evaluation

Even in modern machine learning, the CLT makes an appearance. When using cross‑validation, performance metrics like accuracy are averaged over multiple folds. Each fold‑level accuracy is itself an average over many test examples, so it tends to be approximately normally distributed, which lets practitioners compute an approximate confidence interval around a model’s expected performance. Because the folds share training data, their scores are not fully independent, so such intervals are best read as rough guides. Even so, they provide a more nuanced picture than a single point estimate.
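As a rough sketch, an interval around cross-validated accuracy might be computed like this. The fold accuracies are invented, and because there are only five folds the sketch uses a Student's t critical value (2.776 for 4 degrees of freedom) rather than 1.96. It also treats the folds as independent, which is an approximation.

```python
import math
import statistics

# Hypothetical accuracies from 5-fold cross-validation (illustrative numbers only).
fold_accuracies = [0.81, 0.84, 0.79, 0.83, 0.82]

# Two-sided 95% critical value of Student's t with 4 degrees of freedom.
T_CRIT_95_DF4 = 2.776

def cv_confidence_interval(scores, t_crit):
    """Approximate interval: mean score +/- t_crit * (sample SD / sqrt(k))."""
    mean = statistics.fmean(scores)
    standard_error = statistics.stdev(scores) / math.sqrt(len(scores))
    return mean - t_crit * standard_error, mean + t_crit * standard_error

low, high = cv_confidence_interval(fold_accuracies, T_CRIT_95_DF4)
print(f"mean accuracy {statistics.fmean(fold_accuracies):.3f}, "
      f"approximate 95% interval ({low:.3f}, {high:.3f})")
```

For these numbers the interval runs from roughly 0.79 to 0.84, a reminder that a mean accuracy of 0.818 carries real uncertainty.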

Common Misconceptions About the Central Limit Theorem

Despite its elegance, the Central Limit Theorem is frequently misunderstood. Clarifying these misconceptions is essential for proper application.

- Misconception: The CLT says that the data itself becomes normally distributed as sample size increases.

Reality: The CLT applies to the distribution of the sample mean, not the raw data. If the population is skewed, the sample data will still reflect that skew, regardless of sample size.

- Misconception: The CLT requires a sample size of exactly 30.

Reality: The number 30 is merely a heuristic guideline. The required sample size depends on the degree of skewness in the population. Highly skewed populations require larger samples for the normal approximation to be accurate.

- Misconception: The CLT guarantees that the sample mean will equal the population mean.

Reality: The sample mean is an unbiased estimator, so its expected value is the population mean, and the CLT describes how individual sample means scatter around that value. Any individual sample mean will likely differ from the population mean due to sampling variability.

Understanding these nuances prevents the misapplication of statistical methods and ensures that conclusions drawn from data are valid. For example, assuming that a small sample of skewed data is normal simply because of the CLT can lead to incorrect confidence intervals and flawed decisions. For another perspective on how aggregated views can mislead, see our discussion of Simpson’s Paradox in AI Training Data.
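The first misconception is easy to check numerically. The sketch below assumes an exponential population (theoretical skewness 2) and compares the skewness of a large raw sample with the skewness of many sample means; the sample sizes are arbitrary choices.

```python
import random
import statistics

random.seed(42)  # fixed seed so the sketch is reproducible

def skewness(values):
    """Sample skewness: the average cubed z-score (near 0 for symmetric data)."""
    mean = statistics.fmean(values)
    sd = statistics.pstdev(values)
    return statistics.fmean(((v - mean) / sd) ** 3 for v in values)

# A large raw sample stays as skewed as the population it came from.
raw_sample = [random.expovariate(1.0) for _ in range(20_000)]

# Means of many samples of size 50 are far closer to symmetric.
sample_means = [
    statistics.fmean(random.expovariate(1.0) for _ in range(50))
    for _ in range(4_000)
]

raw_skew = skewness(raw_sample)
mean_skew = skewness(sample_means)
print(f"skewness of raw data     : {raw_skew:.2f}")
print(f"skewness of sample means : {mean_skew:.2f}")
```

Collecting more raw observations does not pull the first number toward zero; only averaging does that.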

The Central Limit Theorem in Action: A Simulation

One of the best ways to internalize the Central Limit Theorem is through simulation. Consider rolling a fair, six‑sided die. The population distribution is discrete and uniform: each outcome from 1 to 6 has a probability of 1/6. This is decidedly not a normal distribution. Now, imagine rolling the die twice and calculating the average. If you repeat this process thousands of times, the distribution of those averages will begin to look triangular. Roll the die five times and average the results, and the distribution starts resembling a bell curve. By the time you average 30 rolls, the sampling distribution is strikingly normal.

This phenomenon occurs even though the underlying process, rolling a die, has no resemblance to a normal distribution. The same principle applies to real‑world data: customer purchase amounts, call center wait times, or even the heights of individuals. Whatever the original shape, as long as the variance is finite, the Central Limit Theorem ensures that means of sufficiently large samples will be approximately normally distributed.
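For dice, the simulation can even be replaced by an exact calculation. The sketch below builds the exact distribution of the average of n rolls by repeated convolution and prints a crude text histogram; the function name and bar scaling are illustrative choices.

```python
from collections import defaultdict
from fractions import Fraction

def dice_mean_distribution(n_rolls, sides=6):
    """Exact distribution of the average of n_rolls fair dice, as {average: probability}."""
    dist = {0: Fraction(1)}          # distribution of the running total
    face_prob = Fraction(1, sides)
    for _ in range(n_rolls):
        nxt = defaultdict(Fraction)  # missing totals start at probability 0
        for total, prob in dist.items():
            for face in range(1, sides + 1):
                nxt[total + face] += prob * face_prob
        dist = nxt
    return {Fraction(total, n_rolls): prob for total, prob in sorted(dist.items())}

for n in (1, 2, 5):
    print(f"\nAverage of {n} roll(s):")
    for average, prob in dice_mean_distribution(n).items():
        print(f"{float(average):5.2f} {'#' * round(float(prob) * 120)}")
```

The output is flat for one roll, triangular for two, and already visibly bell-shaped for five.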

When the Central Limit Theorem Fails

While the Central Limit Theorem is remarkably robust, it is not without limitations. The theorem assumes that the population variance is finite. There exist distributions, most notably the Cauchy distribution, whose mean and variance are both undefined. In such cases, the sample mean does not converge to a normal distribution. Instead, the mean of n independent Cauchy observations has exactly the same Cauchy distribution as a single observation, regardless of sample size. Extreme outliers dominate the behavior of the sample mean, so averaging never reduces the uncertainty and inference based on the normal approximation is invalid.
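A short simulation makes this failure visible. The sketch draws standard Cauchy values by the inverse-CDF method and measures the spread of sample means with the interquartile range (IQR), since the standard deviation is meaningless here; the replicate count and sample sizes are arbitrary.

```python
import math
import random
import statistics

random.seed(1)  # fixed seed so the sketch is reproducible

def cauchy():
    """One draw from the standard Cauchy distribution (inverse-CDF method)."""
    return math.tan(math.pi * (random.random() - 0.5))

def iqr_of_sample_means(n, replicates=2000):
    """Interquartile range of the means of `replicates` samples of size n."""
    means = [statistics.fmean(cauchy() for _ in range(n)) for _ in range(replicates)]
    q1, _, q3 = statistics.quantiles(means, n=4)
    return q3 - q1

iqrs = {n: iqr_of_sample_means(n) for n in (1, 10, 200)}
for n, iqr in iqrs.items():
    print(f"n = {n:>3}: IQR of sample means is about {iqr:.2f}")
```

For a finite-variance population the spread would shrink in proportion to 1 / √n. Here it stays near 2, the IQR of a single standard Cauchy draw, no matter how large n becomes.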

Additionally, the CLT is an asymptotic result; it describes behavior as the sample size approaches infinity. In finite samples, the approximation may be poor, especially in the tails of the distribution. For this reason, when samples are small and the population standard deviation must be estimated from the data, methods based on the t‑distribution (which has heavier tails) are often preferred over the normal approximation; they are exact when the population itself is normal.

Why the Central Limit Theorem Matters for Sampling

At its heart, the Central Limit Theorem provides the justification for using sample statistics to estimate population parameters. Without the CLT, every sampling distribution would need to be derived from scratch for each unique population. Polling, medical research, industrial quality control, and economic forecasting would be vastly more complex and uncertain. The Central Limit Theorem gives us a universal language—the normal distribution—to quantify uncertainty and make probabilistic statements about the unknown.

Moreover, the CLT underpins much of the framework of frequentist statistics. It allows us to construct confidence intervals, perform hypothesis tests, and determine required sample sizes. In a world increasingly driven by data, a solid grasp of the Central Limit Theorem is not optional; it is foundational literacy for anyone who consumes or produces statistical information.

Conclusion: The Statistical Bedrock

In summary, the Central Limit Theorem is a cornerstone of modern statistics. It reveals that the humble sample mean, when drawn repeatedly, tends toward a predictable and beautifully symmetric normal distribution. This insight unlocks the ability to infer the characteristics of an entire population from a relatively small sample. By understanding its pillars—independence, sufficient sample size, and finite variance—and avoiding common misconceptions, you can harness the power of the CLT in your own analyses. Whether you are evaluating an A/B test, interpreting a clinical trial, or simply trying to make sense of poll numbers, the Central Limit Theorem is silently working behind the scenes, providing the statistical bedrock upon which reliable inference is built.

Further Reading: Strengthen your statistical foundation with our guides on Understanding P-Values and Type I/II Errors and Bayesian vs Frequentist Statistics. For interactive simulations, visit the excellent resource at Seeing Theory (Brown University).