Breaking the Single‑Server Barrier

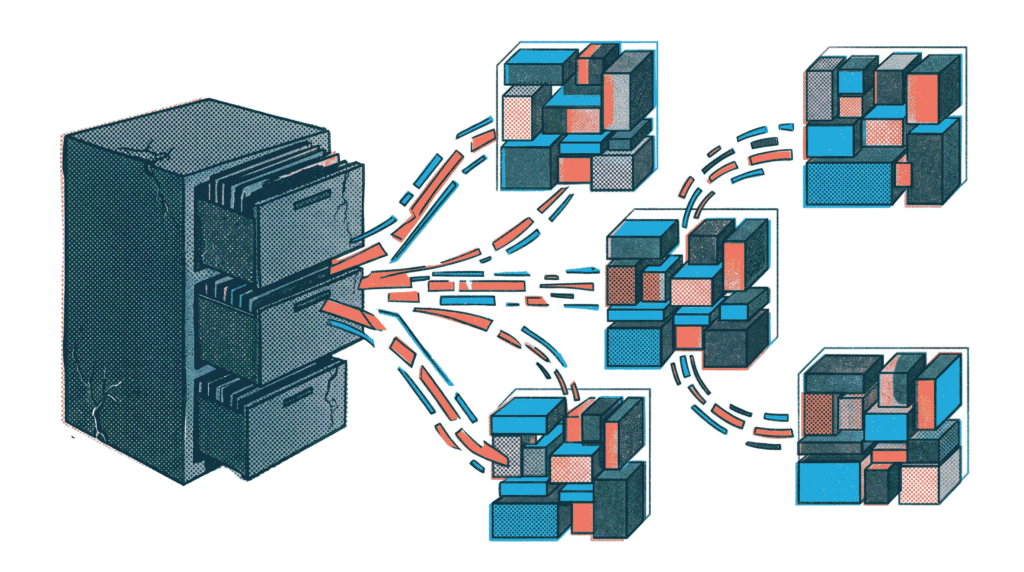

Most successful applications eventually face the same daunting challenge: the database server becomes a bottleneck. Queries slow down, write operations queue up, and storage limits loom on the horizon. The solution lies not in buying an ever-larger machine, but in dividing the data into smaller pieces and, once one server is no longer enough, distributing those pieces across multiple servers. This is where database sharding and partitioning come into play. Partitioning involves splitting a large table into smaller, more manageable pieces within the same database server. Sharding takes this concept further by distributing those pieces across completely separate nodes. Together, these strategies form the backbone of modern, high-traffic systems like those powering social media platforms, e-commerce giants, and global financial services. In this guide, we will explain database sharding and partitioning, compare their approaches, and explore how to implement them effectively.

What Is Database Partitioning?

Before diving into the distributed world of sharding, it’s crucial to understand partitioning. Database partitioning is the process of dividing a single, logical database table into multiple smaller physical segments, all residing within the same database server. The application typically continues to interact with the table using its original name, while the database engine transparently routes queries to the appropriate underlying partitions. This approach is a core feature of many mature relational database systems, including PostgreSQL, MySQL, and SQL Server.

There are several common strategies for partitioning a table, each suited to different access patterns:

- Range Partitioning: Rows are distributed based on a continuous range of values, such as dates. For example, all orders from January 2025 go into one partition, February into another, and so on. This is ideal for time‑series data where you frequently query recent periods and can easily archive or drop old partitions.

- List Partitioning: Rows are assigned to partitions based on a discrete list of values. A common example is partitioning a customers table by country_code. All customers from ‘US’ go to one partition, ‘CA’ to another, and ‘UK’ to a third.

- Hash Partitioning: A hash function is applied to a specified column (like customer_id), and the resulting hash value determines the partition. This strategy aims to distribute data uniformly when there is no natural range or list key.
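The three strategies above can be sketched as plain functions that map a row to a partition name. This is only an illustration of the mapping logic, not how any particular database engine implements it; the partition names, the default partition, and the choice of four hash partitions are assumptions made for the example.

```python
import hashlib
from datetime import date

def range_partition(order_date: date) -> str:
    """Range: one partition per calendar month, e.g. orders_2025_01."""
    return f"orders_{order_date.year}_{order_date.month:02d}"

# List: an explicit mapping from discrete values to partitions (hypothetical names).
LIST_PARTITIONS = {"US": "customers_us", "CA": "customers_ca", "UK": "customers_uk"}

def list_partition(country_code: str) -> str:
    """List: look the value up; unlisted values fall into a default partition."""
    return LIST_PARTITIONS.get(country_code, "customers_default")

def hash_partition(customer_id: int, num_partitions: int = 4) -> str:
    """Hash: a stable hash of the key, reduced modulo the partition count."""
    digest = hashlib.sha256(str(customer_id).encode()).digest()
    bucket = int.from_bytes(digest[:8], "big") % num_partitions
    return f"customers_p{bucket}"

print(range_partition(date(2025, 1, 15)))
print(list_partition("CA"))
print(hash_partition(42))
```

A stable hash such as SHA-256 is used here instead of Python's built-in `hash()`, whose output for strings varies between processes and would send the same key to different partitions on different runs.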

The primary benefits of partitioning are improved query performance (via partition pruning) and simplified maintenance. A query that filters on the partition key only scans the relevant partitions, drastically reducing I/O. Furthermore, dropping an entire old partition is largely a metadata operation that completes almost immediately, whereas deleting millions of individual rows is slow and resource-intensive.
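Partition pruning comes down to an overlap test between the query's filter and each partition's bounds. The sketch below assumes hypothetical monthly partitions with half-open date ranges; a real engine performs this step inside its query planner.

```python
from datetime import date

# Hypothetical monthly partitions: name -> (inclusive start, exclusive end).
PARTITION_BOUNDS = {
    "orders_2025_01": (date(2025, 1, 1), date(2025, 2, 1)),
    "orders_2025_02": (date(2025, 2, 1), date(2025, 3, 1)),
    "orders_2025_03": (date(2025, 3, 1), date(2025, 4, 1)),
}

def prune(query_start: date, query_end: date) -> list:
    """Return the partitions that overlap the half-open range [query_start, query_end)."""
    return [
        name
        for name, (lo, hi) in PARTITION_BOUNDS.items()
        if lo < query_end and query_start < hi
    ]

# A filter inside February touches a single partition.
print(prune(date(2025, 2, 10), date(2025, 2, 20)))
# A filter spanning a month boundary touches two.
print(prune(date(2025, 1, 20), date(2025, 2, 5)))
```

A query with no filter on the partition key gives the planner nothing to prune on, so every partition is scanned.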

What Is Database Sharding?

While partitioning keeps data on a single server, database sharding is a horizontal scaling strategy that distributes data across multiple independent database servers, often called shards. Each shard holds a subset of the total data, and no single shard contains the entire dataset. Crucially, shards typically do not share any underlying infrastructure: they are separate processes running on distinct physical or virtual machines. Sharding and partitioning are also complementary, because you can partition tables within each shard to further optimize local performance.

Sharding is the go‑to solution when a single database server can no longer handle the write volume or total data size required by the application. By spreading the load across multiple machines, you effectively multiply your capacity. However, this power comes with significant added complexity. The application, or a specialized routing layer, must know exactly which shard contains the data for a given request. This is determined by a shard key—a column whose value dictates data placement. For a social media app, user_id is a natural shard key because most queries are scoped to a single user’s activity.

Choosing the right shard key is perhaps the single most important decision in a sharded architecture. An ideal shard key possesses the following characteristics:

- High Cardinality: It should have many distinct values to allow data to be spread evenly across all shards. A key with only three possible values (e.g., subscription_tier) limits you to three shards and creates massive hot spots.

- Query Isolation: The majority of application queries should be answerable by looking at a single shard. If every query requires scattering to all shards, you lose most of the performance benefits.

- Write Distribution: The key should naturally distribute writes to avoid overwhelming a single shard with a disproportionate number of inserts or updates.
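One way to sanity-check a candidate shard key against these criteria is to hash a sample of rows and count how many land on each shard. The sketch below uses synthetic rows and assumes eight shards with hash-based placement; `shard_for` and `shard_load` are illustrative helper names.

```python
import hashlib
from collections import Counter

def shard_for(value, num_shards: int) -> int:
    """Stable hash of the key value, reduced to a shard index."""
    digest = hashlib.sha256(str(value).encode()).digest()
    return int.from_bytes(digest[:8], "big") % num_shards

def shard_load(rows, key: str, num_shards: int) -> list:
    """Count how many rows each shard would receive for the given key."""
    counts = Counter(shard_for(row[key], num_shards) for row in rows)
    return [counts.get(shard, 0) for shard in range(num_shards)]

# Synthetic sample: 9,000 users spread over three subscription tiers.
TIERS = ("free", "pro", "enterprise")
rows = [{"user_id": i, "subscription_tier": TIERS[i % 3]} for i in range(9000)]

by_tier = shard_load(rows, "subscription_tier", num_shards=8)
by_user = shard_load(rows, "user_id", num_shards=8)

print("by subscription_tier:", by_tier)  # at most three shards receive any rows
print("by user_id:          ", by_user)  # rows spread across all eight shards
```

Row counts only capture data distribution. Query isolation and write distribution depend on the actual workload, so a fuller check would replay a sample of real queries and writes through the same function.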

Database Sharding Strategies: A Comparative Look

Just as with partitioning, there are multiple ways to map a shard key to a specific database server. The choice of strategy has profound implications for scalability and operational overhead.

1. Key‑Based or Hash‑Based Sharding

In this approach, a hash function is applied to the shard key, and the output (commonly the hash modulo the number of shards) determines which shard receives the data. This method is excellent for ensuring uniform data distribution. However, it complicates adding new shards: when the shard count changes, the modulo result changes for most keys, forcing a large-scale redistribution of existing data, a process known as resharding. To mitigate this, many systems use consistent hashing, which limits the data that must move to roughly the share taken over by the new shard.
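The difference between naive modulo placement and consistent hashing shows up when a shard is added. The following sketch compares the fraction of keys that change shards when going from four shards to five. The `HashRing` class, the shard names, and the choice of 100 virtual nodes per shard are assumptions for illustration, not a production design.

```python
import bisect
import hashlib

def h(value: str) -> int:
    """Stable 64-bit hash derived from SHA-256."""
    return int.from_bytes(hashlib.sha256(value.encode()).digest()[:8], "big")

def modulo_shard(key: str, shards: list) -> str:
    """Naive placement: hash modulo the current shard count."""
    return shards[h(key) % len(shards)]

class HashRing:
    """Minimal consistent-hash ring with virtual nodes."""

    def __init__(self, shards, vnodes: int = 100):
        # Each shard owns many points on the ring to even out its share.
        self._points = sorted((h(f"{s}#{i}"), s) for s in shards for i in range(vnodes))
        self._hashes = [point for point, _ in self._points]

    def shard_for(self, key: str) -> str:
        # The first point clockwise from the key's hash owns the key (wrapping around).
        idx = bisect.bisect_right(self._hashes, h(key)) % len(self._points)
        return self._points[idx][1]

def fraction_moved(before, after, keys) -> float:
    """Share of keys whose shard differs between two placement functions."""
    return sum(before(k) != after(k) for k in keys) / len(keys)

keys = [f"user:{i}" for i in range(10_000)]
old = ["shard-a", "shard-b", "shard-c", "shard-d"]
new = old + ["shard-e"]

moved_modulo = fraction_moved(
    lambda k: modulo_shard(k, old), lambda k: modulo_shard(k, new), keys
)
ring_old, ring_new = HashRing(old), HashRing(new)
moved_ring = fraction_moved(ring_old.shard_for, ring_new.shard_for, keys)

print(f"modulo placement: {moved_modulo:.0%} of keys moved")
print(f"consistent hash:  {moved_ring:.0%} of keys moved")
```

With modulo placement, most keys are expected to move, since a key stays put only when its hash gives the same remainder for both shard counts. On the ring, a key either keeps its shard or moves to the newly added one, so the moved share is close to the new shard's portion of the ring.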

2. Range‑Based Sharding

Data is assigned to shards based on ranges of the shard key. For instance, users with IDs 1–1,000,000 go to Shard A, 1,000,001–2,000,000 go to Shard B, and so forth. This model is simpler to reason about and makes range scans efficient. On the downside, it is highly susceptible to creating hot spots. If newer users tend to be more active, the shard holding the highest range of IDs will receive a disproportionate share of the write traffic, negating the benefits of distribution.
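A range lookup like the one described can be sketched with a sorted list of upper bounds and a binary search. The boundaries follow the example above; the third shard and its name are assumptions added for illustration.

```python
import bisect

# Inclusive upper bound of each shard's ID range, in ascending order.
UPPER_BOUNDS = [1_000_000, 2_000_000, 3_000_000]
SHARDS = ["shard-a", "shard-b", "shard-c"]

def range_shard(user_id: int) -> str:
    """Find the first shard whose upper bound is >= user_id."""
    if user_id < 1:
        raise ValueError("user_id must be positive")
    idx = bisect.bisect_left(UPPER_BOUNDS, user_id)
    if idx == len(SHARDS):
        raise ValueError(f"no shard covers user_id {user_id}")
    return SHARDS[idx]

print(range_shard(1_000_000))  # last ID in the first range
print(range_shard(1_000_001))  # first ID in the second range

# Sequentially assigned IDs illustrate the hot spot: the newest users share one shard.
newest_ids = range(2_999_000, 3_000_001)
print({range_shard(uid) for uid in newest_ids})
```

Adding capacity in this model means appending a new bound and shard, which moves no existing data but also does nothing to relieve an already hot range.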

3. Directory‑Based Sharding

This strategy employs a central lookup service or directory that maintains a mapping between shard keys and the specific database server. When the application needs data, it first consults the directory to locate the correct shard. Directory‑based sharding offers the ultimate flexibility—you can add shards, move data manually, or implement custom placement logic without complex resharding algorithms. Nevertheless, the directory itself becomes a critical piece of infrastructure. It must be highly available and performant; otherwise, it becomes a single point of failure and a new bottleneck.
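At its core, the directory is a key-to-shard mapping that can be updated independently of any hash or range rule. The sketch below uses an in-memory dictionary as a stand-in; in practice the mapping would live in a replicated, highly available store, and the `ShardDirectory` class and tenant names here are hypothetical.

```python
class ShardDirectory:
    """In-memory stand-in for a lookup service mapping shard keys to shards."""

    def __init__(self, default_shard: str):
        self._assignments = {}
        self._default = default_shard

    def assign(self, tenant_id: str, shard: str) -> None:
        """Pin a key to a specific shard (custom placement logic goes here)."""
        self._assignments[tenant_id] = shard

    def lookup(self, tenant_id: str) -> str:
        """Resolve the shard for a key; unassigned keys use the default shard."""
        return self._assignments.get(tenant_id, self._default)

    def move(self, tenant_id: str, new_shard: str) -> str:
        """Repoint a key after its data has been copied; returns the old shard."""
        old_shard = self.lookup(tenant_id)
        self._assignments[tenant_id] = new_shard
        return old_shard

directory = ShardDirectory(default_shard="shard-a")
directory.assign("acme", "shard-b")
print(directory.lookup("acme"))     # shard-b
print(directory.lookup("initech"))  # shard-a (default)

# Moving one large tenant to a dedicated shard changes only its directory entry.
directory.move("acme", "shard-dedicated-1")
print(directory.lookup("acme"))     # shard-dedicated-1
```

Because every request passes through `lookup`, applications commonly cache directory entries, which in turn requires an invalidation strategy when a key is moved.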

Partitioning vs. Sharding: Key Differences at a Glance

To clarify the distinction between database sharding and partitioning, the following table summarizes their core differences:

| Feature | Partitioning | Sharding |
|---|---|---|
| Data Location | Same database server | Multiple independent servers |
| Primary Goal | Improve query performance and manageability on a single node | Scale write throughput and total storage capacity horizontally |
| Complexity | Low to moderate; managed by the DB engine | High; requires application‑level routing and awareness |
| Cross‑Shard Operations | Not applicable (data is co‑located) | Difficult; joins and aggregations across shards are expensive |

The Hidden Costs and Challenges of Sharding

While database sharding can deliver near-linear scalability for workloads whose queries stay within a single shard, it introduces formidable operational and development challenges. Before embarking on a sharding initiative, you must carefully weigh these costs.

- Application Complexity: The application must be shard-aware. It needs logic to determine the correct shard for each query, handle connection pooling to multiple databases, and manage transactions that span shards, which a single-node ACID transaction cannot cover and which typically require a distributed protocol such as two-phase commit.

- Loss of Relational Power: Features like foreign key constraints and JOIN operations that span multiple shards become extremely difficult or impossible to enforce at the database level. This burden shifts to the application code.

- Resharding: As your data continues to grow, you will inevitably need to add more shards. Moving large volumes of production data between shards with zero downtime is a major engineering undertaking.

- Analytics and Reporting: Business intelligence queries that aggregate data across all shards become complex. You typically need a separate data pipeline to export data from all shards into a central data warehouse (like Snowflake or BigQuery) for analysis.

- Operational Overhead: Managing a fleet of dozens or hundreds of database servers requires robust automation for backups, monitoring, schema migrations, and failover.
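The cost of cross-shard aggregation is easier to see in code. A query such as "average order value" has to be scattered to every shard and the partial results merged by the application. The sketch below stands in for real shards with in-memory lists; the shard names and amounts are made up for the example.

```python
from concurrent.futures import ThreadPoolExecutor

# Hypothetical shards, each holding its own subset of order amounts.
SHARDS = {
    "shard-a": [120.0, 80.0],
    "shard-b": [50.0],
    "shard-c": [200.0, 100.0, 50.0],
}

def partial_aggregate(shard_name: str) -> tuple:
    """Runs on one shard: return (sum, count) rather than a local average."""
    amounts = SHARDS[shard_name]
    return sum(amounts), len(amounts)

def average_order_value() -> float:
    """Scatter the query to every shard, then gather and merge the partials."""
    with ThreadPoolExecutor() as pool:
        partials = list(pool.map(partial_aggregate, SHARDS))
    total = sum(s for s, _ in partials)
    count = sum(c for _, c in partials)
    return total / count

print(average_order_value())
```

Each shard returns a sum and a count because averaging the per-shard averages would weight a one-order shard the same as a three-order shard. The overall latency is also bounded by the slowest shard, which is one reason such queries are often moved to a separate warehouse.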

Given these challenges, database sharding should be considered a last resort after exhausting other scaling options. Before sharding, ensure you have optimized queries, implemented effective caching (e.g., Redis), and considered vertical scaling (upgrading to a more powerful server) or using read replicas to offload read‑heavy traffic.

Real‑World Use Cases for Database Sharding and Partitioning

Understanding where database sharding and partitioning shine in practice helps ground these concepts.

- Social Media Platforms: Platforms like Instagram or X (formerly Twitter) store user profiles, posts, and direct messages. A common approach for this kind of workload is to shard by user_id, so that all data related to a single user resides on a single shard, making profile pages and timelines efficient to render.

- SaaS Applications (Multi‑tenancy): Many B2B SaaS products shard by tenant_id (or organization ID). This provides strong data isolation between customers and can simplify compliance and backup strategies.

- E‑commerce Order Management: An online retailer might partition its orders table by month (range partitioning) to keep recent orders fast and archive old data efficiently. They might further shard the entire database by customer_region to handle global scale.

- Time‑Series Data (IoT and Monitoring): Systems ingesting massive streams of sensor data or logs heavily rely on time‑based range partitioning. Combined with sharding, they can scale to handle petabytes of data across a cluster of nodes.
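The e-commerce case above combines both techniques: the region selects the shard, and the order date selects the partition within it. A minimal sketch, assuming hypothetical region codes, server names, and a monthly naming scheme:

```python
from datetime import date

# Hypothetical mapping from customer_region to the shard (database server) that owns it.
REGION_SHARDS = {
    "na": "orders-db-na",
    "eu": "orders-db-eu",
    "apac": "orders-db-apac",
}

def locate_order(customer_region: str, order_date: date) -> tuple:
    """Two-level routing: shard by region, then monthly range partition by date."""
    shard = REGION_SHARDS[customer_region]
    partition = f"orders_{order_date:%Y_%m}"
    return shard, partition

print(locate_order("eu", date(2025, 2, 14)))
```

Only the first step needs to live in the application or routing layer; once the query reaches the right shard, the database engine can choose the partition itself.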

Modern Alternatives and Managed Solutions

The operational burden of self‑managed database sharding has led to the rise of distributed SQL databases that handle sharding transparently. Solutions like CockroachDB, YugabyteDB, and cloud offerings like Amazon Aurora Limitless Database or Google Cloud Spanner provide automatic sharding and replication. These systems present a familiar SQL interface while distributing data across nodes behind the scenes. They handle resharding, failover, and cross‑shard transactions automatically. For many organizations, adopting a purpose‑built distributed database is more cost‑effective than building and maintaining a custom sharding layer on top of a traditional RDBMS.

Moreover, the principles of sharding share conceptual ground with other distributed architectures. For instance, our guide on Serverless Architectures explores a different facet of scaling without managing infrastructure, albeit at the application layer rather than the data layer. Both approaches aim to eliminate single points of failure and enable elastic growth.

Best Practices for Implementing Database Sharding and Partitioning

If you determine that database sharding and partitioning are the right path for your application, adhere to the following best practices to minimize future pain:

- Start with Partitioning First: Exhaust the benefits of partitioning on a single large server before introducing the complexity of sharding.

- Choose a Future-Proof Shard Key: Changing the shard key later means re-distributing essentially all of your data, so treat it as very costly to reverse. Invest significant time in analyzing query patterns and data distribution before finalizing it.

- Design for Resharding from Day One: Even if you don’t need it immediately, having a plan and the necessary tooling for moving data between shards will save you immense effort when growth inevitably demands it.

- Keep Shards Stateless Beyond the Data: Avoid storing application‑specific session state or configuration on individual shards. This makes failover and replacement of a shard much easier.

- Use a Robust Routing Layer: Whether it’s a sharding middleware such as Vitess for MySQL or a custom service, centralize the shard-routing logic to keep application code clean.

- Monitor Shard Balance: Continuously monitor the data size and query load on each shard. Proactively rebalance before hot spots degrade user experience.
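A basic balance check for the last practice compares each shard's load against the fleet average and flags outliers. The metric, the 1.5x threshold, and the sample numbers below are assumptions; a real setup would pull these figures from its monitoring system.

```python
from statistics import mean

def hot_shards(load_by_shard: dict, threshold: float = 1.5) -> list:
    """Return shards whose load exceeds `threshold` times the average load."""
    average = mean(load_by_shard.values())
    return sorted(
        name for name, load in load_by_shard.items() if load > threshold * average
    )

# Hypothetical queries-per-second figures for four shards.
qps = {"shard-a": 100, "shard-b": 110, "shard-c": 400, "shard-d": 90}
print(hot_shards(qps))
```

The same function can be run over data size, write rate, or latency, since a shard may be balanced on one metric and hot on another.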

Conclusion: Scaling Smarter, Not Just Harder

In summary, database sharding and partitioning are essential techniques for scaling data infrastructure beyond the limits of a single machine. Partitioning provides a powerful, low‑risk way to optimize performance and manageability within a single server. Sharding, while significantly more complex, unlocks near‑linear horizontal scalability for the world’s largest applications. By understanding the trade‑offs—especially the loss of relational integrity and the operational overhead—you can make an informed decision about when and how to apply these strategies. Whether you choose to implement sharding yourself or leverage modern distributed SQL databases, the core concepts remain the same. They are the building blocks for data platforms that can grow gracefully from thousands to billions of users.

Further Reading: Expand your database expertise with our guides on ACID Properties & Transaction Management and Recursive CTEs for Hierarchical Data. For practical implementation, explore the documentation for Vitess (MySQL sharding) and CockroachDB (distributed SQL).