The Evolution from Servers to Services

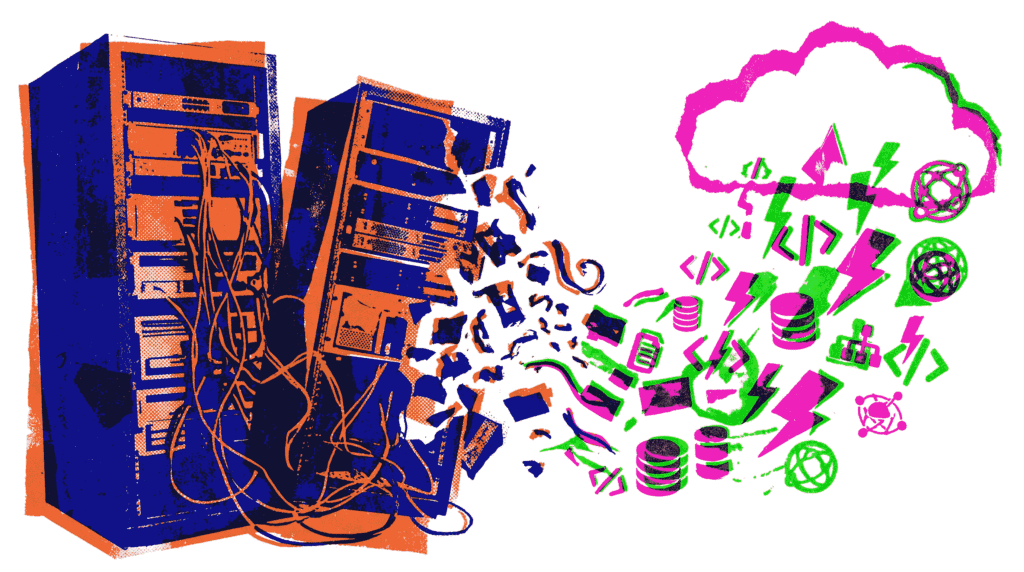

For decades, deploying an application meant provisioning servers, whether physical machines or virtual instances. This process involved capacity planning, operating system updates, and constant vigilance over uptime. Today, however, serverless architecture is reshaping how developers build and scale software. This model hides the underlying infrastructure from the developer. Consequently, developers can focus almost exclusively on writing code, while the cloud provider dynamically manages the execution environment. In this article, we will explore the core concepts of serverless architectures, examine their benefits and trade‑offs, and discuss when to adopt them.

What Are Serverless Architectures?

Despite the name, serverless architectures do not mean the absence of servers. Instead, the term signifies that the responsibility for server management shifts to the cloud provider. Developers no longer need to provision, scale, or maintain servers. A more accurate description might be “function‑as‑a‑service” (FaaS) combined with managed backend services. In this model, you upload discrete pieces of code (functions), and the platform automatically executes them in response to events or HTTP requests. Moreover, you pay only for the compute time your code consumes, usually plus a small per‑request charge. Billing granularity varies by provider; AWS Lambda, for example, meters duration in 1‑millisecond increments.
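
To make the "upload a function" idea concrete, here is a minimal sketch of a handler. It assumes the two-argument `handler(event, context)` signature used by AWS Lambda's Python runtime; the `name` field in the event is a hypothetical payload chosen for illustration.

```python
def handler(event, context):
    """Entry point the platform calls once per event.

    `event` carries the trigger payload; `context` carries runtime
    metadata and is unused in this sketch.
    """
    name = event.get("name", "world")  # "name" is a hypothetical field
    return {"message": f"Hello, {name}!"}


if __name__ == "__main__":
    # Local smoke test; in production the platform supplies both arguments.
    print(handler({"name": "serverless"}, None))
```

Everything outside this function (process startup, scaling, teardown) is the platform's responsibility.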

Major cloud providers offer robust platforms for this purpose. AWS Lambda pioneered the space, while Azure Functions and Google Cloud Functions provide similar capabilities. Additionally, Cloudflare Workers runs functions at the network edge, and Netlify Functions bundles function deployment into its web hosting workflow. This ecosystem allows teams to assemble applications from managed services (databases like DynamoDB or Firestore, authentication with Cognito or Auth0, and API gateways) without managing any of the supporting servers.

Core Benefits of Adopting Serverless Architectures

The shift toward serverless architectures is driven by several compelling operational and financial advantages.

- Automatic and Granular Scaling: Traditional auto‑scaling reacts to metrics over minutes. In contrast, serverless platforms scale per request, assigning each concurrent request to its own function instance. If traffic spikes from zero to ten thousand requests per second, the platform can start thousands of isolated instances in parallel, subject to the provider's concurrency quotas and burst limits.

- Reduced Operational Overhead: Teams are liberated from patching operating systems, updating runtime environments, or managing container fleets. This allows smaller teams to operate sophisticated, high‑traffic applications.

- Cost Efficiency (Pay‑per‑Use): You are not billed for idle server time. If a function runs for 100 milliseconds and then stops, you pay only for that fraction of a second. This is particularly advantageous for workloads with variable or sporadic traffic patterns, such as batch processing jobs or webhooks.

- Faster Time‑to‑Market: Developers can write a single function and deploy it globally in minutes. Iteration cycles shorten dramatically because infrastructure changes are handled through configuration, not manual setup.
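
The scaling and pay‑per‑use points above can be turned into rough arithmetic. The sketch below estimates how many instances run at once (arrival rate multiplied by average duration, i.e. Little's law) and what a month of invocations might cost. The prices passed in are hypothetical placeholders, not any provider's published rates, and the function names are made up for this example.

```python
def estimated_concurrency(requests_per_second: float, avg_duration_ms: float) -> float:
    """Little's law: in-flight invocations = arrival rate x average duration."""
    return requests_per_second * avg_duration_ms / 1000


def monthly_cost(
    invocations: int,
    avg_duration_ms: float,
    memory_gb: float,
    price_per_gb_second: float,         # hypothetical rate; check your provider
    price_per_million_requests: float,  # hypothetical rate; check your provider
) -> float:
    """Compute charge (GB-seconds) plus request charge for one month."""
    gb_seconds = invocations * (avg_duration_ms / 1000) * memory_gb
    request_charge = (invocations / 1_000_000) * price_per_million_requests
    return gb_seconds * price_per_gb_second + request_charge


if __name__ == "__main__":
    # 10,000 requests/s at 100 ms each keeps about 1,000 instances busy.
    print(estimated_concurrency(10_000, 100))
    # One million 100 ms invocations at 0.5 GB, using placeholder prices.
    print(monthly_cost(1_000_000, 100, 0.5, 0.00002, 0.20))
```

Comparing the second figure with the monthly price of an always‑on instance gives a first approximation of where pay‑per‑use stops being the cheaper option.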

Common Patterns in Serverless Architectures

While you can technically run any short‑lived code in a function, certain patterns have emerged as best practices for building reliable serverless architectures.

1. Event‑Driven Processing

This is the heartbeat of serverless. An event—such as a file upload to cloud storage (S3), a new record in a database stream (DynamoDB Streams), or a message in a queue (SQS)—triggers a function. The function processes the event and then terminates. As a result, complex workflows can be decomposed into a series of small, decoupled steps. For instance, a photo upload could trigger a thumbnail generator, which in turn triggers a metadata indexer.
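
As an illustration of the photo‑upload example, the sketch below reads bucket and key pairs out of an S3 notification event. The `Records[].s3.bucket.name` and `Records[].s3.object.key` layout follows the S3 event format, in which object keys arrive URL‑encoded; the thumbnail step is a placeholder and `extract_uploaded_objects` is a name invented here.

```python
from urllib.parse import unquote_plus


def extract_uploaded_objects(event: dict) -> list[tuple[str, str]]:
    """Return (bucket, key) pairs for every record in an S3 notification."""
    objects = []
    for record in event.get("Records", []):
        bucket = record["s3"]["bucket"]["name"]
        # Keys are URL-encoded in the notification, e.g. spaces become "+".
        key = unquote_plus(record["s3"]["object"]["key"])
        objects.append((bucket, key))
    return objects


def handler(event, context):
    uploaded = extract_uploaded_objects(event)
    for bucket, key in uploaded:
        # Placeholder for real work such as generating a thumbnail.
        print(f"would process s3://{bucket}/{key}")
    return {"processed": len(uploaded)}
```

A downstream step, such as the metadata indexer, would be a separate function triggered by whatever this one writes or publishes.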

2. RESTful APIs with API Gateway

A common entry point for serverless applications is an API Gateway. This service handles HTTP routing, authentication, rate limiting, and request validation before invoking the appropriate backend function. This setup allows for the creation of fully managed, scalable web APIs without managing a single web server process.
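
A function behind an API gateway mostly translates an HTTP‑shaped event into an HTTP‑shaped response. The sketch below assumes the request and response shapes used by API Gateway's Lambda proxy integration (`queryStringParameters` in, `statusCode`/`headers`/`body` out); the `name` parameter and the `_response` helper are hypothetical.

```python
import json


def _response(status_code: int, payload: dict) -> dict:
    """Build a proxy-style response; the body must be a string."""
    return {
        "statusCode": status_code,
        "headers": {"Content-Type": "application/json"},
        "body": json.dumps(payload),
    }


def handler(event, context):
    # The gateway sends null (None) when the request has no query string.
    params = event.get("queryStringParameters") or {}
    name = params.get("name")
    if not name:
        return _response(400, {"error": "missing 'name' query parameter"})
    return _response(200, {"greeting": f"Hello, {name}"})
```

Routing, authentication, and rate limiting stay in the gateway configuration, so the function body contains only request handling.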

3. Scheduled Tasks (Cron Jobs)

Serverless functions can replace traditional cron jobs running on a dedicated VM. They are invoked on a schedule defined by rules, for example in Amazon EventBridge (formerly CloudWatch Events). Whether it’s generating nightly reports or cleaning up stale database entries, scheduled functions offer a reliable, low‑maintenance alternative.
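
A scheduled cleanup function might look like the sketch below. The schedule itself lives in the platform's rule configuration, not in the code. The 30‑day retention window and the in‑memory `SESSIONS` list (standing in for a database table) are assumptions made so the example runs locally.

```python
from datetime import datetime, timedelta, timezone

RETENTION = timedelta(days=30)  # hypothetical retention window

# Hypothetical stand-in for a database table.
SESSIONS = [
    {"id": "a", "updated_at": datetime(2024, 1, 1, tzinfo=timezone.utc)},
    {"id": "b", "updated_at": datetime(2024, 3, 1, tzinfo=timezone.utc)},
]


def select_stale(entries, now, retention=RETENTION):
    """Return ids of entries last updated before the retention cutoff."""
    cutoff = now - retention
    return [entry["id"] for entry in entries if entry["updated_at"] < cutoff]


def handler(event, context):
    """Invoked by the scheduler; the event payload is ignored in this sketch."""
    stale_ids = select_stale(SESSIONS, datetime.now(timezone.utc))
    # A real function would issue deletes against the database here.
    return {"stale_count": len(stale_ids), "stale_ids": stale_ids}
```

Keeping the selection logic in a pure function that takes `now` as an argument makes the job easy to test without waiting for the schedule to fire.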

4. Stream and Queue Processing

Functions excel at consuming data from streams (Kinesis, Kafka) and queues. The platform manages polling, batching, and the concurrency of consumers. Ordering is preserved only where the source guarantees it, for example within a single Kinesis shard, a Kafka partition, or an SQS FIFO message group.
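
Because messages arrive in batches, a single bad message should not force the whole batch to be retried. The sketch below assumes an SQS‑triggered AWS Lambda function with partial batch responses enabled (the `ReportBatchItemFailures` setting on the event source mapping), where the function returns the ids of failed messages under `batchItemFailures`. The `order_id` check is a hypothetical business rule.

```python
import json


def process_message(body: dict) -> None:
    """Hypothetical business logic: reject messages without an order id."""
    if "order_id" not in body:
        raise ValueError("missing order_id")


def handler(event, context):
    failures = []
    for record in event.get("Records", []):
        try:
            process_message(json.loads(record["body"]))
        except Exception:
            # Report only this message so the rest of the batch is not retried.
            failures.append({"itemIdentifier": record["messageId"]})
    return {"batchItemFailures": failures}
```

Without partial batch responses, raising an exception would typically make every message in the batch visible again, which is one reason idempotent processing matters.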

Challenges and Drawbacks of Going Serverless

While serverless architectures offer immense power, they are not a universal solution. Understanding the inherent limitations is crucial for avoiding production pitfalls.

- Cold Starts and Latency: If a function hasn’t been invoked recently, the cloud provider may need to initialize a new execution environment. This “cold start” can add significant latency (from hundreds of milliseconds to several seconds), which is problematic for latency‑sensitive user‑facing APIs. Provisioned concurrency can mitigate this, albeit at an extra cost.

- Vendor Lock‑In: Deep integration with cloud‑native services (e.g., AWS Step Functions or DynamoDB) ties an application tightly to a specific vendor. Migrating a complex serverless application to another cloud provider is non‑trivial and often requires significant refactoring.

- Execution Limits: Functions have strict constraints on runtime duration (e.g., 15 minutes for AWS Lambda), memory allocation, and deployment package size. Long‑running computational tasks or heavy video processing jobs are generally unsuitable for FaaS unless decomposed into smaller chunks.

- Debugging and Observability: Debugging a distributed system composed of dozens of ephemeral functions is more complex than debugging a monolithic application. Distributed tracing tools (like AWS X‑Ray or Datadog) become essential for understanding request flows and pinpointing failures.

- State Management: Functions are stateless by nature. Any persistent state must be stored externally in a database, cache (Redis/Memcached), or object store. Consequently, application design must explicitly account for this statelessness.

When Should You Use Serverless Architectures?

Given the trade‑offs, serverless architectures shine brightest in specific scenarios. They are an excellent fit for:

- High‑variance or spiky traffic workloads (e.g., e‑commerce checkout during a flash sale).

- Rapid prototyping and MVPs where infrastructure management would slow down development.

- Background processing and data transformation pipelines (ETL).

- IoT and mobile backends that require massive concurrency.

- Chatbots and voice assistants responding to webhooks.

Conversely, they may be less suitable for predictable, high‑throughput, steady‑state workloads (where reserved instances are cheaper) or applications requiring ultra‑low, sub‑millisecond latency with persistent connections (e.g., real‑time gaming servers).

The Intersection of Serverless and Modern AI

Interestingly, the principles of serverless architectures align well with the demands of modern AI inference. Deploying machine learning models often requires handling unpredictable request volumes. Serverless functions can host lightweight models (or call out to dedicated model endpoints) and scale automatically. Moreover, this paradigm pairs effectively with federated learning, where model updates from edge devices must be aggregated securely in the cloud without maintaining a persistent server fleet.

Best Practices for Robust Serverless Applications

To harness the full potential of serverless architectures while avoiding common traps, consider the following guidelines:

- Keep Functions Focused and Small: Adhere to the Single Responsibility Principle. A function should do one thing and do it well. Smaller deployment packages with fewer dependencies tend to shorten cold starts, and narrowly scoped functions are easier to test.

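- Externalize Configuration and Secrets: Never hardcode secrets or endpoint URLs. Use environment variables for non‑sensitive settings such as endpoint URLs, and keep credentials in the platform’s secrets manager (e.g., AWS Secrets Manager), fetching them at runtime.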

- Implement Idempotency: In a distributed system, functions might be invoked multiple times for the same event. Design your logic so that duplicate processing does not corrupt data or trigger unwanted side effects (e.g., sending duplicate emails).

- Embrace Asynchronous Patterns: Whenever possible, decouple producers from consumers using queues or event buses. This improves resilience and smooths out traffic bursts.

- Monitor and Set Alerts: Utilize tools like AWS CloudWatch or third‑party platforms to monitor error rates, duration, and throttling metrics. Setting up alerts on anomalies helps catch issues before users notice them.
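
The idempotency guideline is the one most often skipped, so a small sketch may help. It claims an event id before performing the side effect and ignores any event whose id was already claimed. `ProcessedEvents` is an in‑memory stand‑in for a durable store with an atomic "put if absent" operation (for example, a conditional write in a database); the `event_id` and `recipient` fields are hypothetical.

```python
class ProcessedEvents:
    """Stand-in for a durable store offering an atomic put-if-absent."""

    def __init__(self) -> None:
        self._seen: set[str] = set()

    def claim(self, event_id: str) -> bool:
        """Return True only the first time an id is seen."""
        if event_id in self._seen:
            return False
        self._seen.add(event_id)
        return True


processed = ProcessedEvents()
sent_emails: list[str] = []  # records side effects for the demo


def handler(event, context):
    if not processed.claim(event["event_id"]):
        # Duplicate delivery: skip the side effect entirely.
        return {"status": "duplicate_ignored"}
    sent_emails.append(event["recipient"])  # placeholder for sending an email
    return {"status": "sent"}
```

Claiming before doing the work means a crash between the two steps drops the event; claiming afterwards risks a duplicate instead. Which failure is acceptable depends on the side effect, so treat the ordering here as one option, not a rule.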

The Future of Serverless Computing

The trajectory of serverless architectures points toward greater abstraction and reduced friction. We are already seeing the rise of “serverless containers” (e.g., AWS App Runner, Google Cloud Run), which blend the simplicity of FaaS with the flexibility of standard container runtimes. Furthermore, improvements in edge computing are pushing serverless logic closer to end‑users, drastically cutting latency for global audiences.

As the ecosystem matures, expect more sophisticated tooling for local development, debugging, and cross‑cloud portability. Initiatives like the OpenFunction project and the Serverless Framework are already smoothing over the differences between cloud providers. Ultimately, the trend is clear: developers will spend less time managing infrastructure and more time delivering value.

Conclusion

In summary, serverless architectures represent a significant evolution in cloud computing, offering per‑request scaling and a pay‑per‑use billing model. By abstracting away servers, containers, and operating systems, they let developers concentrate on solving business problems. However, they also introduce new considerations around latency, vendor lock‑in, and observability. For event‑driven workloads, APIs, and short‑lived batch processing jobs, the benefits often outweigh the drawbacks. By understanding the core patterns and adhering to best practices, you can use serverless to build resilient, cost‑effective, and scalable applications that withstand traffic spikes and business growth.

Further Reading: Explore more about AI and cloud techniques with our articles on Federated Learning and Multimodal Learning. For deeper technical details, visit the official documentation for AWS Lambda and the Serverless Framework.