The Challenge: Giant Models, Tiny Devices

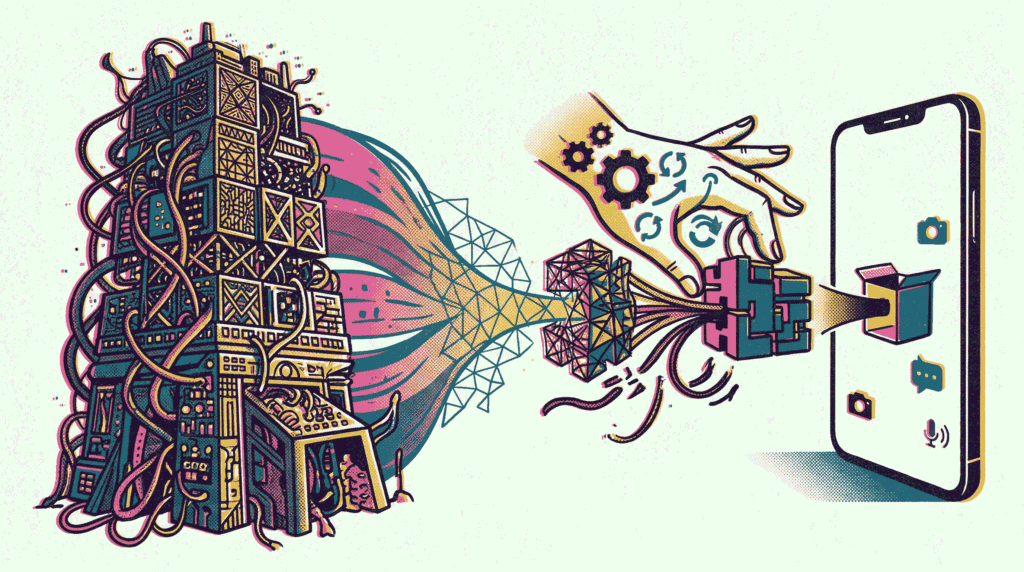

Modern AI models are astonishingly powerful but notoriously large. A state‑of‑the‑art language model can occupy hundreds of gigabytes and demand high‑end GPUs with vast memory. Yet much of AI’s future lies at the edge: on smartphones, wearables, and embedded sensors. How do we bridge this gap? Two complementary techniques do most of the work: model quantization and distillation. Quantization reduces the numerical precision of a model’s parameters, shrinking its memory footprint and accelerating inference. Distillation trains a compact “student” model to mimic a larger “teacher” model. Together, these methods let sophisticated AI run efficiently on resource‑constrained mobile devices. In this article, we explore how model quantization and distillation work, compare their trade‑offs, and provide practical guidance for mobile deployment.

Understanding Model Quantization

At its core, model quantization is the process of mapping continuous high‑precision values (typically 32‑bit floating‑point numbers, or FP32) to a smaller set of discrete low‑precision values (such as 8‑bit integers, or INT8). Think of it as rounding a detailed decimal number like 3.1415926535 to a simpler 3.14. While some precision is lost, the storage and computational savings are immense. Consequently, quantized models occupy a fraction of the original disk space and execute much faster on CPUs and specialized hardware like NPUs (Neural Processing Units).
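To make the mapping concrete, here is a minimal sketch of affine (scale plus zero‑point) INT8 quantization in plain Python. The function names and the sample weights are illustrative assumptions, not part of any framework; real toolchains apply the same idea per tensor or per channel.

```python
def quantize_int8(values):
    """Map floats to INT8 codes with an affine (scale + zero-point) scheme."""
    # The representable range is widened to include 0 so that 0.0 maps exactly.
    lo, hi = min(min(values), 0.0), max(max(values), 0.0)
    scale = (hi - lo) / 255.0 or 1.0  # fall back to 1.0 for all-zero input
    zero_point = round(-128 - lo / scale)
    codes = [max(-128, min(127, round(v / scale) + zero_point)) for v in values]
    return codes, scale, zero_point


def dequantize_int8(codes, scale, zero_point):
    """Recover approximate floats from INT8 codes."""
    return [(c - zero_point) * scale for c in codes]


weights = [-1.2, -0.3, 0.0, 0.45, 2.1]  # hypothetical FP32 weights
codes, scale, zero_point = quantize_int8(weights)
restored = dequantize_int8(codes, scale, zero_point)
max_error = max(abs(w - r) for w, r in zip(weights, restored))
```

Each restored value differs from the original by at most about one quantization step (`scale`), which is the precision traded away for storing one byte per weight instead of four.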

There are two primary approaches to quantization. Post‑Training Quantization (PTQ) takes a fully trained FP32 model and converts its weights (and optionally activations) to lower precision. This requires no retraining and is remarkably simple to apply. However, it can sometimes cause a noticeable drop in accuracy, especially for smaller or highly sensitive models. Alternatively, Quantization‑Aware Training (QAT) simulates the effects of quantization during the training process. The model learns to compensate for the reduced precision, resulting in a quantized model that maintains accuracy much closer to the original. Although QAT requires more effort, it is the gold standard for aggressive quantization targets like INT4 or lower.
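The core trick behind QAT can be sketched with a single weight. In this toy example (all names, the INT4 grid, and the learning rate are assumptions chosen for illustration), the forward pass uses a "fake‑quantized" weight while gradient updates are applied to a full‑precision latent copy, a shortcut usually called the straight‑through estimator.

```python
def fake_quantize(x, scale, qmin=-8, qmax=7):
    """Quantize then dequantize: the result is a float restricted to the INT4 grid."""
    q = max(qmin, min(qmax, round(x / scale)))
    return q * scale


def train_qat(xs, ys, scale=0.25, lr=0.05, steps=200):
    """Fit y = w * x while the forward pass only ever sees the quantized weight."""
    w = 0.0  # latent full-precision weight, updated by gradient descent
    for _ in range(steps):
        w_q = fake_quantize(w, scale)
        # Gradient of the mean squared error with respect to w_q. The
        # straight-through estimator treats d(w_q)/d(w) as 1, so the same
        # gradient is applied to the latent weight.
        grad = sum(2 * (w_q * x - y) * x for x, y in zip(xs, ys)) / len(xs)
        w -= lr * grad
    return w, fake_quantize(w, scale)


xs = [1.0, 2.0, 3.0]
ys = [1.4 * x for x in xs]  # the true weight 1.4 is not on the 0.25 grid
latent_w, deployed_w = train_qat(xs, ys)
```

The deployed weight ends up on one of the two grid points that bracket 1.4 (1.25 or 1.5), because training has already accounted for the rounding. Production QAT applies the same idea to every layer with learned or calibrated scales.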

For mobile deployment, model quantization is close to mandatory. Frameworks like TensorFlow Lite and PyTorch Mobile provide built‑in tools to quantize models to INT8, reducing weight storage by roughly 4x (32 bits down to 8 per parameter) and often cutting latency by 2‑3x on supported hardware, usually with only a small accuracy loss. This is why you can run object detection or speech recognition entirely offline on your smartphone.
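The 4x figure follows directly from the bit widths. A back‑of‑the‑envelope helper (the parameter count below is a hypothetical example, and metadata such as scales and zero‑points is ignored) shows the arithmetic:

```python
def model_size_mb(num_params, bits_per_param):
    """Approximate weight storage in megabytes (10**6 bytes), ignoring metadata."""
    return num_params * bits_per_param / 8 / 1e6


params = 25_000_000  # hypothetical parameter count
fp32_mb = model_size_mb(params, 32)
int8_mb = model_size_mb(params, 8)
reduction = fp32_mb / int8_mb
```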

Understanding Model Distillation

While quantization compresses the numerical representation, model distillation compresses the architecture itself. Popularized by Geoffrey Hinton, Oriol Vinyals, and Jeff Dean in 2015, knowledge distillation involves training a smaller “student” network to replicate the behavior of a larger, pre‑trained “teacher” network. Instead of training the student solely on hard labels (e.g., “cat” or “dog”), it also learns from the teacher’s soft labels, that is, the probability distribution the teacher assigns over all classes. These soft labels contain rich information about class similarities (e.g., a cat is more similar to a dog than to a car), which helps the student generalize better with fewer parameters.
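A small sketch shows what soft labels look like in practice. The logits below are made‑up teacher scores for the classes cat, dog, and car; dividing them by a temperature before the softmax is the standard way to soften the distribution.

```python
import math


def softmax(logits, temperature=1.0):
    """Convert logits to probabilities; a higher temperature flattens them."""
    scaled = [z / temperature for z in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


teacher_logits = [6.0, 3.5, -2.0]  # hypothetical scores for cat, dog, car
hard = softmax(teacher_logits, temperature=1.0)
soft = softmax(teacher_logits, temperature=4.0)
```

At temperature 1 nearly all of the probability sits on "cat". At temperature 4 the "dog" probability becomes clearly visible while "car" stays small, which is exactly the similarity signal the student learns from.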

A classic example is DistilBERT, a distilled version of the massive BERT language model. DistilBERT retains 97% of BERT’s language understanding capabilities while being 40% smaller and 60% faster. Similarly, in computer vision, a lightweight MobileNet can be trained via distillation from a heavy ResNet or EfficientNet teacher. The key advantage is that distillation does not merely delete parameters; it transfers the “dark knowledge” of the teacher’s decision boundaries. For a deeper look at how models reason through complex problems, see our guide on Chain‑of‑Thought prompting.

Quantization vs Distillation: A Side‑by‑Side Comparison

Although both model quantization and distillation aim to shrink models, they operate at different levels. Understanding their distinct characteristics helps you choose the right tool for the job.

- Mechanism: Quantization reduces numerical precision (e.g., FP32 → INT8). Distillation trains a new, smaller architecture.

- Impact on Architecture: Quantization preserves the original network layers but changes data types. Distillation creates an entirely different model topology.

- Training Requirements: Post‑training quantization requires no retraining; QAT requires fine‑tuning. Distillation requires a full training cycle with a teacher model.

- Accuracy Preservation: INT8 quantization often costs around 1‑3% accuracy, though the exact figure depends on the model and the calibration data. A well‑tuned distilled student usually lands slightly below its teacher and can occasionally match or even slightly exceed it.

- Hardware Acceleration: Quantized INT8 models see massive speedups on CPUs with SIMD instructions (NEON, AVX) and mobile NPUs. Distilled FP32 models are faster due to fewer operations but do not benefit from INT8‑specific hardware.

In practice, these techniques are synergistic. A common mobile deployment pipeline looks like this: First, distill a large teacher (e.g., BERT‑Large) into a compact student (e.g., a 6‑layer transformer). Second, apply quantization to the student, converting its weights to INT8. The result is a model that is both architecturally lean and numerically efficient, capable of running in real‑time on a budget smartphone.

Practical Implementation of Model Quantization and Distillation

Let’s move from theory to practice. Implementing model quantization and distillation is easier than ever thanks to mature open‑source libraries.

Quantization with TensorFlow Lite

To quantize a TensorFlow model, you can use the TFLiteConverter. For post‑training quantization, you simply provide a representative dataset to calibrate the activation ranges. The code looks like this:

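```python
import tensorflow as tf

# saved_model_dir: path to the exported SavedModel.
# representative_dataset: generator yielding lists of sample input arrays for calibration.
converter = tf.lite.TFLiteConverter.from_saved_model(saved_model_dir)
converter.optimizations = [tf.lite.Optimize.DEFAULT]
converter.representative_dataset = representative_dataset
tflite_quant_model = converter.convert()  # serialized model as bytes
```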

For QAT, TensorFlow provides the tensorflow_model_optimization toolkit. You wrap your Keras model with tfmot.quantization.keras.quantize_model, which inserts fake‑quantization operations, and then fine‑tune as usual.

Distillation with Hugging Face Transformers

The Hugging Face Transformers library does not include a dedicated distillation trainer in its core API, but a common pattern is to subclass its Trainer and override the loss computation. You define a student model and a teacher model, then minimize a loss function that combines the standard cross‑entropy loss with a Kullback‑Leibler (KL) divergence loss against the teacher’s softened outputs. A temperature parameter controls the “softness” of the probability distribution. Higher temperatures make the distribution smoother, revealing more of the teacher’s dark knowledge.
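The combined loss can be sketched in plain Python for a single example. This is an illustrative formulation, not code from the Transformers library: the `alpha` weighting and the multiplication of the KL term by the squared temperature follow a common convention, and the function names are assumptions.

```python
import math


def log_softmax(logits, temperature=1.0):
    """Numerically stable log-probabilities of temperature-scaled logits."""
    scaled = [z / temperature for z in logits]
    peak = max(scaled)
    log_total = peak + math.log(sum(math.exp(s - peak) for s in scaled))
    return [s - log_total for s in scaled]


def distillation_loss(student_logits, teacher_logits, label,
                      temperature=2.0, alpha=0.5):
    """alpha * cross-entropy(hard label) + (1 - alpha) * T^2 * KL(teacher || student)."""
    hard_loss = -log_softmax(student_logits)[label]
    log_p_teacher = log_softmax(teacher_logits, temperature)
    log_p_student = log_softmax(student_logits, temperature)
    kl = sum(math.exp(lt) * (lt - ls)
             for lt, ls in zip(log_p_teacher, log_p_student))
    # The T^2 factor keeps the soft-target term on a scale comparable to the hard loss.
    return alpha * hard_loss + (1 - alpha) * temperature ** 2 * kl


teacher = [6.0, 3.5, -2.0]  # hypothetical teacher logits
loss_untrained = distillation_loss([0.0, 0.0, 0.0], teacher, label=0)
loss_trained = distillation_loss([5.5, 3.0, -1.5], teacher, label=0)
```

A student whose logits resemble the teacher's gets a much lower loss than an untrained one. In a Trainer subclass, the same computation would run on batched tensors with the teacher's forward pass performed under no‑gradient mode.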

Combining Both Techniques

After distilling a student model, you can further quantize it using the same TFLite or PyTorch Mobile tools. This two‑step process is widely adopted in production mobile applications, from Google’s on‑device keyboard suggestions to real‑time video filters in social media apps.

Challenges and Limitations

Despite their benefits, model quantization and distillation are not silver bullets. Several challenges can arise during deployment.

- Accuracy Degradation in Quantization: Very small models or those with unusual activation distributions (e.g., transformers with large outlier values) can suffer significant accuracy loss when quantized to INT8 or INT4. Techniques like SmoothQuant or outlier channel splitting help mitigate this.

- Distillation Complexity: Training a student model requires a forward pass through the teacher for each batch, which can be computationally expensive. Moreover, finding the right student architecture and temperature hyperparameters often involves trial and error.

- Operator Coverage: Not all neural network operations are supported in quantized mode on all mobile accelerators. Custom ops or complex control flow may fall back to slower FP32 execution, negating some of the speed benefits.

- Calibration Data Dependency: PTQ requires a representative dataset to determine activation ranges. If this dataset is not truly representative of production data, the quantized model may perform poorly in the wild.
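The calibration problem in the last point is easy to reproduce. In this sketch (symmetric INT8 quantization, with made‑up activation values), the scale is derived from the calibration samples alone, so any production value outside that range is clipped.

```python
def calibrate_scale(samples):
    """Symmetric INT8 scale from the largest magnitude seen during calibration."""
    return max(abs(s) for s in samples) / 127.0


def quantization_error(values, scale):
    """Mean absolute error after an INT8 quantize/dequantize round trip."""
    total = 0.0
    for v in values:
        code = max(-127, min(127, round(v / scale)))  # out-of-range values are clipped
        total += abs(v - code * scale)
    return total / len(values)


production = [0.2, -1.5, 3.8, -4.0, 2.6]     # hypothetical activations in the field
narrow_calibration = [0.1, -0.4, 0.5, -0.3]  # misses the large activations
broad_calibration = [0.3, -3.9, 4.0, 1.2]    # covers the production range

error_narrow = quantization_error(production, calibrate_scale(narrow_calibration))
error_broad = quantization_error(production, calibrate_scale(broad_calibration))
```

With the narrow calibration set, every activation beyond ±0.5 saturates and the average error is on the order of the activations themselves. With the broad set, the error stays below one quantization step. The flip side is the outlier problem mentioned above: a single extreme calibration value stretches the scale and wastes resolution on the common small values.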

Furthermore, in the context of federated learning, quantization becomes even more critical. Transmitting full FP32 model updates from thousands of edge devices would consume prohibitive bandwidth. Quantized gradients or weights dramatically reduce communication costs, making federated training viable.

Real‑World Mobile Deployment Success Stories

The impact of model quantization and distillation is best illustrated through real‑world examples.

- Google Translate On‑Device Mode: Google uses quantization extensively to run neural machine translation models offline on Pixel phones. The models are compressed to under 50MB while maintaining near‑cloud quality.

- Facebook Messenger’s M Suggestions: Facebook compressed a massive recommendation model into a lightweight on‑device model using distillation and quantization. This allows real‑time contextual suggestions without sending private messages to servers.

- Snapchat’s Augmented Reality Lenses: Snapchat quantizes and distills computer vision models for face tracking and segmentation. These models run at 30+ FPS on mid‑range smartphones, enabling smooth AR experiences.

- Apple’s Core ML and Neural Engine: Apple’s hardware is optimized for low‑precision computation such as FP16 and INT8. Core ML Tools converts models to FP16 by default for the ML program format and offers optional weight quantization for further compression, helping models make better use of the Neural Engine.

Future Trends: Extreme Quantization and Automated Distillation

The frontier of model quantization and distillation is pushing toward even more aggressive compression. Researchers are exploring INT4 and even binary/ternary networks, where weights are constrained to just a few discrete values (e.g., -1, 0, +1). While challenging to train, these models offer astonishing speedups on custom hardware. Similarly, automated distillation frameworks like AutoDistill use neural architecture search (NAS) to find the optimal student architecture for a given latency budget.
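As a rough illustration of ternary weights, the sketch below maps each weight to -1, 0, or +1 and keeps one shared floating‑point scale. The threshold rule (0.7 times the mean absolute weight) is an illustrative heuristic chosen for this example, and real ternary networks are trained with the constraint in place rather than converted after the fact.

```python
def ternarize(weights, threshold_ratio=0.7):
    """Map weights to {-1, 0, +1} plus one shared floating-point scale."""
    mean_abs = sum(abs(w) for w in weights) / len(weights)
    threshold = threshold_ratio * mean_abs  # weights at or below this become 0
    codes = [0 if abs(w) <= threshold else (1 if w > 0 else -1) for w in weights]
    kept = [abs(w) for w, c in zip(weights, codes) if c != 0]
    scale = sum(kept) / len(kept) if kept else 0.0  # mean magnitude of kept weights
    return codes, scale


weights = [0.9, -0.05, 0.4, -0.7, 0.02, -0.6]  # hypothetical FP32 weights
codes, scale = ternarize(weights)
approx = [c * scale for c in codes]
```

Each weight now needs fewer than two bits, and multiplications by a weight reduce to an add, a subtract, or a skip, which is where the speedups on custom hardware come from.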

Another emerging trend is sparse quantization, which combines pruning (removing near‑zero weights) with quantization. This results in models that are both small and fast. For a broader perspective on how AI systems are orchestrated, explore our article on multi-agent systems, where on‑device agents could leverage these compressed models.
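Combining the two steps can be sketched as magnitude pruning followed by symmetric INT8 quantization. The function name, the 50% sparsity target, and the weights are assumptions for illustration only.

```python
def prune_and_quantize(weights, sparsity=0.5):
    """Zero the smallest-magnitude weights, then quantize the rest to INT8."""
    num_pruned = int(len(weights) * sparsity)
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    pruned_idx = set(order[:num_pruned])
    kept = [0.0 if i in pruned_idx else w for i, w in enumerate(weights)]
    max_abs = max(abs(w) for w in kept)
    scale = max_abs / 127.0 if max_abs else 1.0
    codes = [round(w / scale) for w in kept]
    return codes, scale


weights = [0.9, -0.05, 0.4, -0.7, 0.02, -0.6]  # hypothetical FP32 weights
codes, scale = prune_and_quantize(weights, sparsity=0.5)
```

The zeros compress well on disk, and runtimes with sparse kernels can skip them entirely, while the surviving weights still enjoy the one‑byte INT8 representation.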

Best Practices for Mobile Model Optimization

To successfully deploy models on mobile devices using model quantization and distillation, adhere to these best practices:

- Start with Distillation, Then Quantize: Reduce the architectural complexity first. Quantizing a compact, well‑trained student to INT8 is usually a safer path to a given size budget than pushing a large model to very low bit widths.

- Validate with a Realistic Test Set: Always evaluate quantized models on a dataset that mirrors the target deployment environment (e.g., images taken from the phone’s camera, not clean stock photos).

- Profile on Target Hardware: Use tools like Android Studio Profiler or Xcode Instruments to measure actual inference time and memory usage on the device. Do not rely solely on theoretical FLOPs reductions.

- Use Hardware‑Accelerated Delegates: On Android, leverage the GPU Delegate or NNAPI for TFLite (note that NNAPI is deprecated as of Android 15). On iOS, use Core ML. These delegates automatically take advantage of specialized silicon.

- Monitor for Accuracy Regressions: Set up a CI/CD pipeline that runs an accuracy benchmark whenever the model is updated. Quantization bugs can be subtle and should be caught early.
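The regression check in the last point can be as simple as the following sketch. The function names, the 2% tolerance, and the prediction lists are hypothetical; in a real pipeline the predictions would come from running both models on the realistic test set described above.

```python
def accuracy(predictions, labels):
    """Fraction of predictions that match the labels."""
    return sum(p == y for p, y in zip(predictions, labels)) / len(labels)


def check_regression(baseline_preds, quantized_preds, labels, max_drop=0.02):
    """Return (passed, drop); fail when quantized accuracy falls too far."""
    drop = accuracy(baseline_preds, labels) - accuracy(quantized_preds, labels)
    return drop <= max_drop, drop


labels          = [0, 1, 1, 0, 2, 2, 1, 0, 2, 1]
baseline_preds  = [0, 1, 1, 0, 2, 2, 1, 0, 2, 0]  # 9 of 10 correct
quantized_preds = [0, 1, 1, 0, 2, 2, 1, 1, 2, 0]  # 8 of 10 correct

passed, drop = check_regression(baseline_preds, quantized_preds, labels)
```

Here the quantized model loses ten percentage points, so the gate fails and the CI job can block the release.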

Conclusion: Small Models, Big Impact

In summary, model quantization and distillation are indispensable techniques for bringing state‑of‑the‑art AI to mobile and edge devices. Quantization delivers immediate size and speed improvements by reducing numerical precision, while distillation transfers the knowledge of massive models into compact, efficient architectures. When used together, they form a powerful pipeline that cuts latency, preserves privacy, and enables entirely new classes of on‑device experiences, from real‑time language translation to immersive augmented reality. As AI continues to permeate every aspect of our digital lives, mastering these compression techniques will be essential for any engineer who wants to deploy intelligent applications at scale, right in the palm of the user’s hand.

Further Reading: Deepen your understanding of AI optimization with our articles on Federated Learning, Serverless Architectures, and Chain‑of‑Thought Prompting. For official quantization guides, visit TensorFlow Lite Quantization and PyTorch Quantization.