Two Paths to Understanding Uncertainty

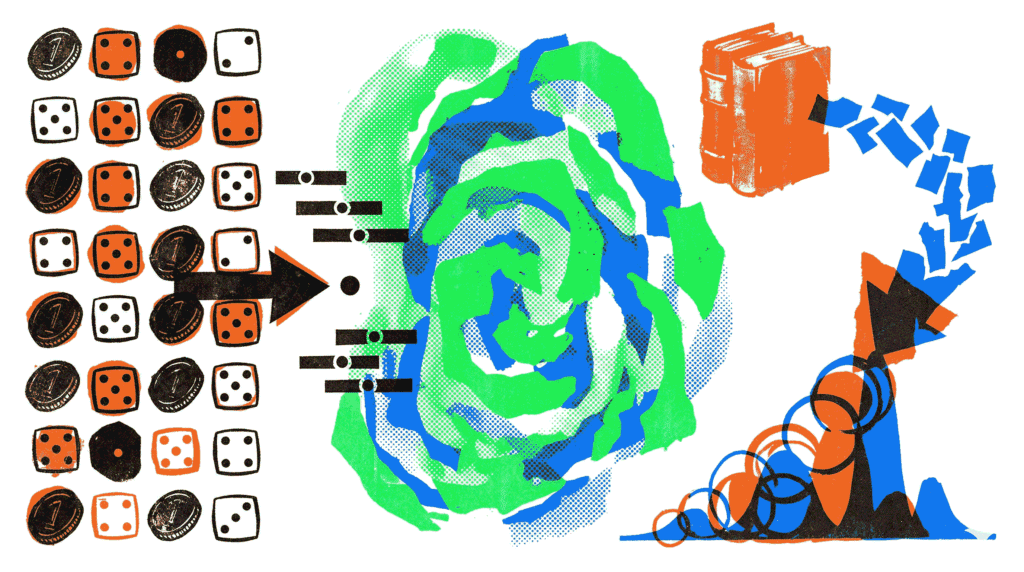

At the heart of data science and machine learning lies a fundamental question: how should we interpret probability and make inferences from data? For well over a century, two dominant philosophical schools have provided competing answers. This is the essence of Bayesian vs Frequentist statistics. On one side, Frequentists define probability strictly as the long‑run frequency of events in repeated trials. On the other side, Bayesians treat probability as a degree of belief, which can be updated as new evidence emerges. The choice between these frameworks influences how we design experiments, train AI models, and quantify uncertainty. In this article, we will unpack the core principles of each school, compare their methodologies, and explore where each shines in practice.

The Frequentist Interpretation of Probability

The Frequentist approach is the bedrock of traditional statistical education and many scientific fields. Frequentism holds that probability is an objective property of the physical world. Specifically, the probability of an event is defined as the limit of its relative frequency as the number of independent, identical trials grows without bound. For example, saying a fair coin has a 50% probability of heads means that if we could flip it infinitely many times, the proportion of heads would converge to 0.5.
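The long‑run frequency idea can be illustrated with a short simulation. This is a minimal sketch using only the Python standard library; the function name, the seed, and the number of flips are illustrative choices, not part of any standard method.

```python
import random


def running_proportion_of_heads(n_flips, p_heads=0.5, seed=0):
    """Simulate coin flips and return the proportion of heads after each flip."""
    rng = random.Random(seed)  # fixed seed so the run is reproducible
    heads = 0
    proportions = []
    for flip_number in range(1, n_flips + 1):
        heads += rng.random() < p_heads  # True counts as 1, False as 0
        proportions.append(heads / flip_number)
    return proportions


proportions = running_proportion_of_heads(100_000)
for n in (10, 100, 1_000, 10_000, 100_000):
    print(f"after {n:>7} flips: proportion of heads = {proportions[n - 1]:.4f}")
```

Early proportions can wander far from 0.5, while later ones settle close to it. A finite simulation can only suggest the limit, since the Frequentist definition refers to an infinite sequence of trials.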

This framework heavily relies on three core concepts:

- P‑values and Hypothesis Testing: Frequentists test a “null hypothesis” (e.g., “this new drug has no effect”). The p‑value represents the probability of observing data as extreme as, or more extreme than, the actual sample, assuming the null hypothesis is true. A small p‑value (typically < 0.05) is considered evidence against the null.

- Confidence Intervals: A 95% confidence interval does not mean there is a 95% chance the true parameter lies within the interval. Instead, it means that if we repeated the experiment many times, 95% of the intervals constructed this way would contain the true parameter.

- Maximum Likelihood Estimation (MLE): This method finds the parameter values that make the observed data most probable. It is objective and widely used but does not incorporate prior knowledge.
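The three concepts above can be sketched on a single toy problem: testing whether a coin is fair. The data (61 heads in 100 flips) are hypothetical, the helper names are illustrative, and the Wald interval is just one common approximate way to build a confidence interval.

```python
import math
import random


def binomial_pmf(k, n, p):
    """Probability of exactly k successes in n independent trials."""
    return math.comb(n, k) * p**k * (1 - p) ** (n - k)


def exact_two_sided_p_value(k_obs, n, p_null=0.5):
    """Total null probability of outcomes no more likely than the observed one."""
    p_obs = binomial_pmf(k_obs, n, p_null)
    tolerance = 1 + 1e-9  # guards against floating-point ties
    return sum(
        pmf
        for pmf in (binomial_pmf(k, n, p_null) for k in range(n + 1))
        if pmf <= p_obs * tolerance
    )


def wald_interval(k, n, z=1.96):
    """Approximate 95% confidence interval for a proportion."""
    p_hat = k / n
    half_width = z * math.sqrt(p_hat * (1 - p_hat) / n)
    return max(0.0, p_hat - half_width), min(1.0, p_hat + half_width)


def coverage(true_p, n, n_experiments=2_000, seed=0):
    """Fraction of repeated experiments whose interval contains true_p."""
    rng = random.Random(seed)
    hits = 0
    for _ in range(n_experiments):
        k = sum(rng.random() < true_p for _ in range(n))
        low, high = wald_interval(k, n)
        hits += low <= true_p <= high
    return hits / n_experiments


n_flips, n_heads = 100, 61  # hypothetical data
p_hat = n_heads / n_flips  # MLE of the heads probability for binomial data
p_value = exact_two_sided_p_value(n_heads, n_flips)
ci_low, ci_high = wald_interval(n_heads, n_flips)
simulated_coverage = coverage(true_p=0.5, n=100)

print(f"MLE: {p_hat:.2f}")
print(f"two-sided p-value under a fair coin: {p_value:.4f}")
print(f"95% Wald interval: ({ci_low:.3f}, {ci_high:.3f})")
print(f"share of simulated intervals containing the true value: {simulated_coverage:.3f}")
```

The `coverage` function makes the confidence interval interpretation concrete: the 95% refers to how often the procedure succeeds across repeated experiments. Because the Wald interval is an approximation, the simulated share typically lands near 95% rather than exactly on it.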

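Frequentist methods are valued for their objectivity: two analysts with the same data, model, and test will arrive at the same p‑value. However, this approach does not directly answer some of the questions practitioners most often ask, such as “What is the probability that this hypothesis is true?” or “What is the probability that the true effect size lies within this range?” Because parameters and hypotheses are treated as fixed rather than random, Frequentist probability statements describe the data and the procedure, not the hypothesis itself.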

The Bayesian Approach: Probability as Belief

In contrast, the Bayesian framework treats probability as a measure of uncertainty or degree of belief. This belief can be subjective and is explicitly allowed to change over time. The engine of Bayesian inference is Bayes’ Theorem, a mathematical formula that describes how to update the probability of a hypothesis (H) given new evidence (E).

Bayes’ Theorem is expressed as: P(H|E) = [ P(E|H) * P(H) ] / P(E). Let’s break this down:

- P(H) – The Prior: This is our initial belief about the hypothesis before seeing the data. It might come from historical data, domain expertise, or even a neutral “uninformative” distribution.

- P(E|H) – The Likelihood: This is the probability of observing the evidence assuming the hypothesis is true. This component is familiar to Frequentists.

- P(H|E) – The Posterior: This is our updated belief after seeing the evidence. It is the primary output of Bayesian analysis.

- P(E) – The Marginal Likelihood: A normalizing constant ensuring the posterior is a valid probability distribution.
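A worked example may make the four terms concrete. The sketch below applies Bayes’ Theorem to a hypothetical diagnostic test; the prevalence, sensitivity, and false positive rate are made-up numbers, and the function name is illustrative.

```python
def posterior_probability(prior, likelihood, false_positive_rate):
    """P(H|E) from Bayes' Theorem.

    P(E) is expanded with the law of total probability:
    P(E) = P(E|H) * P(H) + P(E|not H) * P(not H).
    """
    evidence = likelihood * prior + false_positive_rate * (1 - prior)
    return likelihood * prior / evidence


prior = 0.01                # P(H): 1% of patients have the condition (assumed)
sensitivity = 0.95          # P(E|H): test is positive when the condition is present (assumed)
false_positive_rate = 0.05  # P(E|not H): test is positive when it is absent (assumed)

after_one_positive = posterior_probability(prior, sensitivity, false_positive_rate)

# The posterior from the first test becomes the prior for a second, independent test.
after_two_positives = posterior_probability(
    after_one_positive, sensitivity, false_positive_rate
)

print(f"P(condition | one positive test)  = {after_one_positive:.3f}")
print(f"P(condition | two positive tests) = {after_two_positives:.3f}")
```

With these numbers, a single positive result leaves the probability of the condition at roughly 16%, because the low prior outweighs the test's accuracy. Feeding the posterior back in as the next prior shows the updating mechanism described above.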

A key strength of the Bayesian method is its intuitive output. A 95% Bayesian credible interval supports the direct statement: “Given the observed data, the model, and the prior, there is a 95% probability that the true parameter lies within this interval.” This matches how most people naturally interpret uncertainty. Moreover, Bayesians can incorporate prior knowledge, which is invaluable when data is scarce.
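As an illustration, the sketch below computes a credible interval for a coin's heads probability. It assumes a uniform Beta(1, 1) prior and hypothetical data (61 heads, 39 tails). With a Beta prior and binomial data the posterior is again a Beta distribution, so the interval is approximated here by sorting draws from that posterior using only the standard library.

```python
import random


def beta_posterior(prior_a, prior_b, heads, tails):
    """Conjugate update: Beta prior plus binomial data gives a Beta posterior."""
    return prior_a + heads, prior_b + tails


def credible_interval(a, b, level=0.95, n_draws=50_000, seed=0):
    """Equal-tailed credible interval estimated from sorted posterior draws."""
    rng = random.Random(seed)
    draws = sorted(rng.betavariate(a, b) for _ in range(n_draws))
    low_index = int((1 - level) / 2 * n_draws)
    high_index = int((1 + level) / 2 * n_draws) - 1
    return draws[low_index], draws[high_index]


# Uniform prior and hypothetical data; both are assumptions for illustration.
post_a, post_b = beta_posterior(1, 1, heads=61, tails=39)
posterior_mean = post_a / (post_a + post_b)
low, high = credible_interval(post_a, post_b)

print(f"posterior: Beta({post_a}, {post_b}), mean = {posterior_mean:.3f}")
print(f"95% credible interval: ({low:.3f}, {high:.3f})")
```

The resulting interval is a probability statement about the parameter itself, conditional on the chosen prior and model. A different prior would shift the interval, most noticeably when the sample is small.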

Key Differences Summarized

To clarify the Bayesian vs Frequentist statistics debate, let’s contrast their core philosophical and practical differences.

- Definition of Probability: Frequentist: Long‑run frequency. Bayesian: Degree of belief.

- Treatment of Parameters: Frequentist: Parameters are fixed, unknown constants. Bayesian: Parameters are random variables with distributions.

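- Use of Prior Information: Frequentist: Not formally part of the inference, which rests on the data from the current experiment (prior knowledge enters only indirectly, through study design and model choice). Bayesian: Explicitly incorporated via the prior distribution.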

- Interpretation of Intervals: Frequentist (Confidence Interval): Long‑run coverage property. Bayesian (Credible Interval): Direct probabilistic statement about the parameter.

- Computational Complexity: Frequentist: Often simpler, closed‑form solutions (e.g., linear regression). Bayesian: Often requires complex computational methods like Markov Chain Monte Carlo (MCMC).
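To give a feel for the computational side, here is a minimal random-walk Metropolis sampler, one of the simplest MCMC algorithms. It targets the posterior of a coin's heads probability under a uniform prior with hypothetical data (61 heads, 39 tails). This toy posterior has a known closed form, Beta(62, 40), so the sampler is used purely for illustration; the step size, chain length, and burn-in are arbitrary choices.

```python
import math
import random


def log_posterior(theta, heads, tails):
    """Unnormalized log posterior of the heads probability under a uniform prior."""
    if not 0.0 < theta < 1.0:
        return -math.inf  # zero posterior density outside (0, 1)
    return heads * math.log(theta) + tails * math.log(1.0 - theta)


def metropolis(heads, tails, n_samples=20_000, step=0.1, seed=0):
    """Random-walk Metropolis with a symmetric Gaussian proposal."""
    rng = random.Random(seed)
    theta = 0.5
    current = log_posterior(theta, heads, tails)
    samples = []
    for _ in range(n_samples):
        proposal = theta + rng.gauss(0.0, step)
        candidate = log_posterior(proposal, heads, tails)
        # Accept with probability min(1, posterior ratio).
        if rng.random() < math.exp(min(0.0, candidate - current)):
            theta, current = proposal, candidate
        samples.append(theta)
    return samples


samples = metropolis(heads=61, tails=39)
kept = samples[2_000:]  # discard early draws as burn-in
mcmc_mean = sum(kept) / len(kept)
exact_mean = 62 / 102  # mean of the closed-form Beta(62, 40) posterior

print(f"MCMC estimate of posterior mean: {mcmc_mean:.3f}")
print(f"closed-form posterior mean:      {exact_mean:.3f}")
```

The sampler only needs the posterior up to a constant, which is why the marginal likelihood never appears in the code. In realistic models no closed form is available, and tuning and diagnosing the chain is where much of the practical effort goes.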

Practical Applications and When to Choose Which

The choice between Bayesian and Frequentist statistics is rarely purely philosophical. Instead, it often depends on the specific problem, the availability of data, and the goals of the analysis.

When Frequentist Methods Excel

- Large, Controlled Experiments: In clinical trials or A/B tests with massive sample sizes, the objectivity and simplicity of Frequentist hypothesis testing make it the standard approach. For clinical trials, it is also what regulators like the FDA most often expect.

- Classical Machine Learning Models: Many foundational algorithms (e.g., Ordinary Least Squares regression, Support Vector Machines) produce point estimates without priors, fit naturally within the Frequentist tradition, and are computationally efficient.

- When Objectivity is Paramount: In fields like academic publishing or legal evidence, the reliance on a subjective prior can be controversial. Frequentism provides a seemingly neutral starting point.

When Bayesian Methods Shine

- Small or Noisy Data Regimes: When data is limited, priors act as a regularizer, preventing overfitting and allowing the model to make sensible predictions based on historical knowledge.

- Hierarchical Modeling: Problems with nested data structures, such as students within classrooms within schools, are elegantly handled by Bayesian hierarchical models. They allow information to be shared (“borrowed”) across groups, so estimates for groups with little data are pulled toward the overall pattern.

- Uncertainty Quantification (UQ): In high‑stakes AI applications like autonomous driving or medical diagnosis, knowing the probability of a prediction being correct is crucial. Bayesian methods naturally output full posterior distributions, offering rich uncertainty estimates.

- Probabilistic Programming: Modern tools like PyMC and Stan have made building complex Bayesian models accessible to a wide audience.
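The regularizing effect of a prior in small-data regimes, mentioned in the first bullet above, can be sketched with a success-rate estimate. The Beta(2, 2) prior and the sample sizes are assumptions chosen for illustration, and the function names are hypothetical.

```python
def mle(successes, trials):
    """Maximum likelihood estimate of a success rate."""
    return successes / trials


def posterior_mean(successes, trials, prior_a=2.0, prior_b=2.0):
    """Posterior mean under a Beta(prior_a, prior_b) prior (assumed prior)."""
    return (successes + prior_a) / (trials + prior_a + prior_b)


# Every trial succeeds, at three different sample sizes.
for successes, trials in [(3, 3), (30, 30), (300, 300)]:
    print(
        f"{successes:>3}/{trials:<3} "
        f"MLE = {mle(successes, trials):.3f}  "
        f"posterior mean = {posterior_mean(successes, trials):.3f}"
    )
```

With three successes in three trials, the MLE claims a success rate of exactly 1, while the posterior mean is pulled toward the prior's center of 0.5. As the sample grows, the data dominate and the two estimates converge.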

The Debate in Modern AI and Machine Learning

The Bayesian vs Frequentist statistics debate is not just academic; it permeates modern AI. For instance, training large language models (LLMs) uses Maximum Likelihood Estimation (a Frequentist tool). Yet, to make these models robust and avoid issues like overconfident predictions, we need proper uncertainty calibration. This is where techniques like Monte Carlo Dropout, which can be interpreted as approximate Bayesian inference, and Deep Ensembles, which are often viewed in a similar light, come into play.

Moreover, in reinforcement learning, the exploration‑exploitation trade‑off lends itself naturally to a Bayesian treatment. In approaches such as Thompson sampling, an agent maintains a belief distribution over the value of different actions and updates that belief as it gathers experience. Similarly, in multi-agent systems, agents operating with incomplete information about the world or other agents’ strategies benefit greatly from Bayesian reasoning.
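One concrete way to maintain and update a belief distribution over action values is Thompson sampling, shown here on a Bernoulli bandit. This is a minimal sketch: the three true reward rates are hypothetical, the uniform Beta(1, 1) prior per arm is an assumption, and the function name is illustrative.

```python
import random


def thompson_sampling(true_rates, n_rounds=5_000, seed=0):
    """Bernoulli bandit with a Beta(1, 1) prior on each arm's reward rate."""
    rng = random.Random(seed)
    n_arms = len(true_rates)
    successes = [0] * n_arms
    failures = [0] * n_arms
    pulls = [0] * n_arms
    for _ in range(n_rounds):
        # Draw one plausible reward rate per arm from its current posterior.
        sampled = [
            rng.betavariate(successes[arm] + 1, failures[arm] + 1)
            for arm in range(n_arms)
        ]
        # Act greedily with respect to the sampled rates.
        arm = max(range(n_arms), key=sampled.__getitem__)
        reward = rng.random() < true_rates[arm]
        # Update the chosen arm's posterior with the observed outcome.
        successes[arm] += reward
        failures[arm] += not reward
        pulls[arm] += 1
    return pulls


pulls = thompson_sampling([0.3, 0.5, 0.7])  # hypothetical reward rates
print("pulls per arm:", pulls)
```

Arms with wide posteriors occasionally produce high samples and get explored, while arms with strong evidence of high reward are exploited more often. Exploration therefore falls out of the agent's uncertainty instead of being tuned by a separate schedule.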

Even the way we evaluate models shows this tension. Metrics like accuracy and RMSE are point estimates. However, for a more nuanced understanding, we often look at confidence intervals (Frequentist) or posterior predictive checks (Bayesian).

Criticisms and Limitations of Both Approaches

No framework is without its weaknesses. A balanced view of Bayesian vs Frequentist statistics requires acknowledging these drawbacks.

- Frequentist Criticisms: P‑values are notoriously misunderstood and often misused (“p‑hacking”). Moreover, the reliance on hypothetical long‑run repetitions feels unnatural for one‑off events (e.g., “What is the probability that this specific election result was fraudulent?”).

- Bayesian Criticisms: The choice of the prior distribution is a major point of contention. A poorly chosen prior can unduly influence the results, leading to accusations of subjectivity and bias. Furthermore, computing the posterior distribution can be computationally intensive, especially for high‑dimensional models.

Bridging the Divide: Empirical Bayes and Pragmatism

In practice, the line between Bayesian and Frequentist statistics is often blurred. Many modern techniques borrow from both worlds. Empirical Bayes, for instance, uses the data itself to estimate the prior distribution, reducing the subjectivity of prior selection. Likewise, regularization techniques like Lasso and Ridge regression (which are cornerstones of machine learning) have both Frequentist justifications (penalized likelihood) and Bayesian interpretations: the Lasso estimate coincides with the posterior mode under a Laplace prior on the coefficients, and the Ridge estimate with the posterior mode under a Gaussian prior.
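The Ridge case can be checked numerically on a one-parameter regression without an intercept. In this sketch the data, the noise variance, and the prior variance are all hypothetical. For Gaussian noise with variance sigma^2 and a zero-mean Gaussian prior with variance tau^2 on the slope, the matching Ridge penalty is sigma^2 / tau^2.

```python
def ridge_slope(xs, ys, penalty):
    """Closed-form minimizer of sum((y - w*x)^2) + penalty * w^2."""
    sxy = sum(x * y for x, y in zip(xs, ys))
    sxx = sum(x * x for x in xs)
    return sxy / (sxx + penalty)


def neg_log_posterior(w, xs, ys, noise_var, prior_var):
    """Negative log posterior of the slope, up to an additive constant."""
    neg_log_likelihood = sum((y - w * x) ** 2 for x, y in zip(xs, ys)) / (2 * noise_var)
    neg_log_prior = w * w / (2 * prior_var)
    return neg_log_likelihood + neg_log_prior


def map_slope(xs, ys, noise_var, prior_var, lo=-10.0, hi=10.0, iterations=200):
    """Posterior mode found by ternary search (the objective is convex in w)."""
    for _ in range(iterations):
        third = (hi - lo) / 3
        m1, m2 = lo + third, hi - third
        f1 = neg_log_posterior(m1, xs, ys, noise_var, prior_var)
        f2 = neg_log_posterior(m2, xs, ys, noise_var, prior_var)
        if f1 < f2:
            hi = m2
        else:
            lo = m1
    return (lo + hi) / 2


xs = [1.0, 2.0, 3.0, 4.0, 5.0]   # hypothetical inputs
ys = [2.1, 3.9, 6.2, 7.8, 10.1]  # hypothetical responses
noise_var, prior_var = 1.0, 0.5  # assumed sigma^2 and tau^2

ols = ridge_slope(xs, ys, penalty=0.0)
ridge = ridge_slope(xs, ys, penalty=noise_var / prior_var)
map_estimate = map_slope(xs, ys, noise_var, prior_var)

print(f"OLS slope:   {ols:.4f}")
print(f"Ridge slope: {ridge:.4f}")
print(f"MAP slope:   {map_estimate:.4f}")
```

The Ridge and MAP slopes agree to numerical precision, and both are shrunk toward zero relative to the unpenalized estimate. A tighter prior (smaller prior variance) corresponds to a larger penalty and stronger shrinkage.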

Ultimately, the most effective data scientists adopt a pragmatic stance. They wield Frequentist methods for quick, objective exploratory analysis. Then, they turn to Bayesian methods when they need to incorporate domain expertise or provide rich, interpretable uncertainty quantification. This hybrid approach leverages the strengths of both paradigms while mitigating their individual shortcomings.

Conclusion: A False Dichotomy?

In summary, the Bayesian vs Frequentist statistics debate is not about finding a single “correct” answer. Instead, it represents two powerful lenses through which we can view uncertainty and extract meaning from data. Frequentism provides a rigorous, objective framework for controlled experimentation and large‑sample inference. Bayesianism, on the other hand, offers an intuitive, flexible language for updating beliefs and quantifying uncertainty, especially when data is limited or prior knowledge is rich. As AI systems become more complex and are deployed in increasingly uncertain environments, understanding both perspectives is essential. The modern practitioner is best served not by taking sides, but by building a versatile toolkit that harnesses the unique strengths of each.

Further Reading: Deepen your statistical understanding with our articles on Simpson’s Paradox in AI Training Data and Federated Learning. For hands‑on Bayesian modeling, explore the documentation for PyMC and Stan.